Admittedly I don't get it. Say you have a memory with a memory word of length of 1 byte. Why can't you access a 4 byte long variable in a single memory access on an unaligned address(i.e. not divisible by 4), as it's the case with aligned addresses?

-

23After doing some **additional** Googling I found [this](http://www.ibm.com/developerworks/library/pa-dalign/) great link, that explains the problem really well. – Daar Dec 19 '08 at 15:31

-

1Check out this small article for people who start learning this: http://blog.virtualmethodstudio.com/2017/03/memory-alignment-run-fools/ – Darkgaze Dec 11 '17 at 13:31

-

10@ark link broken – John Jiang Mar 22 '20 at 05:00

-

10@JohnJiang I think I found the new link here: https://developer.ibm.com/technologies/systems/articles/pa-dalign/ – ejohnso49 Apr 17 '20 at 03:56

-

5As of 2022 the link provided by @ark seems to have moved again. Therefore here's a snapshot of the original page linked back in 2008: [web.archive: Data alignment - Straighten up and fly right](https://web.archive.org/web/20080607055623/http://www.ibm.com/developerworks/library/pa-dalign/) – Frederik Hoeft Jun 08 '22 at 12:08

-

1Updated link for 2023: https://developer.ibm.com/articles/pa-dalign/ – Dennis Jan 20 '23 at 10:03

8 Answers

The memory subsystem on a modern processor is restricted to accessing memory at the granularity and alignment of its word size; this is the case for a number of reasons.

Speed

Modern processors have multiple levels of cache memory that data must be pulled through; supporting single-byte reads would make the memory subsystem throughput tightly bound to the execution unit throughput (aka cpu-bound); this is all reminiscent of how PIO mode was surpassed by DMA for many of the same reasons in hard drives.

The CPU always reads at its word size (4 bytes on a 32-bit processor), so when you do an unaligned address access — on a processor that supports it — the processor is going to read multiple words. The CPU will read each word of memory that your requested address straddles. This causes an amplification of up to 2X the number of memory transactions required to access the requested data.

Because of this, it can very easily be slower to read two bytes than four. For example, say you have a struct in memory that looks like this:

struct mystruct {

char c; // one byte

int i; // four bytes

short s; // two bytes

}

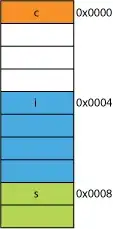

On a 32-bit processor it would most likely be aligned like shown here:

The processor can read each of these members in one transaction.

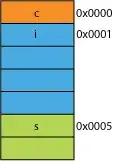

Say you had a packed version of the struct, maybe from the network where it was packed for transmission efficiency; it might look something like this:

Reading the first byte is going to be the same.

When you ask the processor to give you 16 bits from 0x0005 it will have to read a word from 0x0004 and shift left 1 byte to place it in a 16-bit register; some extra work, but most can handle that in one cycle.

When you ask for 32 bits from 0x0001 you'll get a 2X amplification. The processor will read from 0x0000 into the result register and shift left 1 byte, then read again from 0x0004 into a temporary register, shift right 3 bytes, then OR it with the result register.

Range

For any given address space, if the architecture can assume that the 2 LSBs are always 0 (e.g., 32-bit machines) then it can access 4 times more memory (the 2 saved bits can represent 4 distinct states), or the same amount of memory with 2 bits for something like flags. Taking the 2 LSBs off of an address would give you a 4-byte alignment; also referred to as a stride of 4 bytes. Each time an address is incremented it is effectively incrementing bit 2, not bit 0, i.e., the last 2 bits will always continue to be 00.

This can even affect the physical design of the system. If the address bus needs 2 fewer bits, there can be 2 fewer pins on the CPU, and 2 fewer traces on the circuit board.

Atomicity

The CPU can operate on an aligned word of memory atomically, meaning that no other instruction can interrupt that operation. This is critical to the correct operation of many lock-free data structures and other concurrency paradigms.

Conclusion

The memory system of a processor is quite a bit more complex and involved than described here; a discussion on how an x86 processor actually addresses memory can help (many processors work similarly).

There are many more benefits to adhering to memory alignment that you can read at this IBM article.

A computer's primary use is to transform data. Modern memory architectures and technologies have been optimized over decades to facilitate getting more data, in, out, and between more and faster execution units–in a highly reliable way.

Bonus: Caches

Another alignment-for-performance that I alluded to previously is alignment on cache lines which are (for example, on some CPUs) 64B.

For more info on how much performance can be gained by leveraging caches, take a look at Gallery of Processor Cache Effects; from this question on cache-line sizes

Understanding of cache lines can be important for certain types of program optimizations. For example, the alignment of data may determine whether an operation touches one or two cache lines. As we saw in the example above, this can easily mean that in the misaligned case, the operation will be twice slower.

- 41,167

- 16

- 88

- 103

-

the following structs x y z have different sizes, because of the rule of each member has to begin with the address which is multiples of its size and the strcut has to be ended with address which is multiples of the largest size the struct's member. struct x { short s; //2 bytes and 2 padding tytes int i; //4 bytes char c; //1 bytes and 3 padding bytes long long l; }; struct y { int i; //4 bytes char c ; //1 bytes and 1 padding byte short s; //2 bytes }; struct z { int i; //4 bytes short s; // 2 bytes char c; //1 bytes and 1 padding byte }; – Gavin May 04 '14 at 04:39

-

This is also a good link, based on a chapter in the book "Game Engine Programming" by Jason Gregory: http://hjistcgam475.blogspot.se/2013/02/object-layout-in-memory-rules-put.html – AzP May 09 '14 at 13:17

-

1If I understand correctly, the reason WHY a computer cannot read an unaligned word in one step is because the addesses use 30 bits and not 32 bits?? – GetFree Jun 16 '14 at 22:50

-

Minor note: "The CPU ALWAYS reads at it's word size": Not with the old [8088](http://en.wikipedia.org/wiki/Intel_8088) – chux - Reinstate Monica Jun 20 '14 at 03:00

-

@GetFree No. Like many things in life there are compromises, pros and cons. Limiting the number of address lines is more kismet than _the_ reason that modern architectures don't do unaligned access. If the processor is never going to access unaligned memory, then why include the physical traces on the board and incur the costs in design, testing, debugging, and manufacturing? – joshperry Jun 22 '14 at 17:29

-

1@chux Yes it's true, absolutes never hold. The 8088 is an interesting study of the tradeoffs between speed and cost, it was basically a 16-bit 8086 (which had a full 16-bit external bus) but with only half the bus-lines to save production costs. Because of this the 8088 needed twice the clock cycles to access memory than the 8086 since it had to do two reads to get the full 16-bit word. The interesting part, the 8086 can do a _word aligned_ 16-bit read in a single cycle, unaligned reads take 2. The fact that the 8088 had a half-word bus masked this slowdown. – joshperry Jun 22 '14 at 17:40

-

@joshperry In [this question](http://stackoverflow.com/questions/24228252/) I ask what's the actual reason why it's not possible, but no one's given a compelling answer. – GetFree Jun 22 '14 at 21:16

-

2@joshperry: Slight correction: the 8086 can do a word-aligned 16-bit read in *four* cycles, while unaligned reads take *eight*. Because of the slow memory interface, execution time on 8088-based machines is usually dominated by instruction fetches. An instruction like "MOV AX,BX" is nominally one cycle faster than "XCHG AX,BX", but unless it is preceded or followed by an instruction whose execution takes more than four cycles per code byte, it will take four cycles longer to execute. On the 8086, code fetching can sometimes keep up with execution, but on the 8088 unless one uses... – supercat Mar 01 '15 at 03:19

-

So does this only affect reading from the disk, or does it affect in-memory objects too? how much will a bit reader that reads and caches a block 8 bytes in size, on a 64 bit computer? – MarcusJ Jun 19 '15 at 12:39

-

2I think the alignment of `mystruct` is wrong. C structs are always aligned to the alignment of its largest member, thus there should be two additional padding bytes after `s`. – Martin Dec 16 '15 at 19:51

-

1Very true, @martin. I elided those padding bytes to focus the discussion intra-struct, but perhaps it would be better to include them. – joshperry Dec 16 '15 at 20:04

-

"`The CPU can operate on an aligned word of memory atomically`", how to understand this sentence? IMO, operation on memory would not always be atomic, like `++i`, the procedure might be: 1. read value of `i` into register 2. increment register 3. store register value into `i` – cifer Apr 04 '16 at 08:11

-

@cli__: Many CPUs have special instructions for interlocked increment, decrement, and swaps (among other atomic operations) that even a bad compiler will use in many cases such as this, and--barring memory fences--the CPU itself is free to reorder instructions to execute most performantly. Modern CPUs are incredibly complex, especially when it comes to how caching, the flow of data to and from main memory and the parallelization of the cores of a modern processor pipeline are concerned. – joshperry Apr 15 '16 at 20:28

-

1

-

1*The CPU always reads at its word size (4 bytes on a 32-bit processor)* - No, that's an over-simplification. Most x86 CPUs have fully efficient unaligned loads as long as they don't cross a cache line boundary. See [How can I accurately benchmark unaligned access speed on x86\_64](https://stackoverflow.com/q/45128763). Also, it's not rare for 32-bit CPUs to access cache 8 bytes at a time. e.g. P5 Pentium and later guarantees atomicity of aligned 8-byte loads and stores. (Possible in 32-bit mode with FP or MMX, or with SSE `movq`) Similarly many 32-bit ARM guarantee load-pair atomicity. – Peter Cordes Aug 19 '20 at 08:46

-

Also, x86 caches support [byte *stores* with full efficiency](https://stackoverflow.com/questions/46721075/can-modern-x86-hardware-not-store-a-single-byte-to-memory). (microarchitectures for many other ISAs do a RMW cycle to commit narrow or misaligned stores to cache, though.) – Peter Cordes Aug 19 '20 at 08:47

-

@PeterCordes Absolutely! Caching and aligned memory dynamics are incredibly interesting and sometimes quite complex. I was trying to keep the discussion of how caches interact with alignment out of my answer to keep it succinct, but your comments are well-recieved. – joshperry Oct 09 '20 at 17:18

-

Aren't there some architectures that don't support unaligned access at all? – Oskar Skog Oct 24 '20 at 18:17

-

Regarding the speed gained by not having to make two memory accesses, it seems like this could potentially be offset by larger data being less cache friendly. E.g., maybe data is not aligned to a 64-bit word size (on a 64-bit system), requiring additional memory accesses, but you can fit more of that data in a 64 byte cache line. Seems like there are competing factors here when considering speed due to word alignment. I wonder if there are any numbers out there about how these compete. – PersonWithName Jul 29 '21 at 23:39

-

See https://lemire.me/blog/2012/05/31/data-alignment-for-speed-myth-or-reality/. It seems like alignment can be much less significant on some more recent processors. I'd guess that cache alignment can have a stronger impact than word alignment (but even then, it depends on access patterns, prefetching etc.). Given the results from that link, it raises the question whether compilers should turn word-alignment off for some particular processors or architectures to be more cache friendly. – PersonWithName Jul 29 '21 at 23:41

-

And (correct me if I'm wrong), but instructions on x64 are generally not aligned. It's a variable length instruction set. At most, compilers will align some branch targets to the cache line boundaries to be cache friendly, but the instructions are not word aligned. This probably doesn't matter on x64 since I assume the instruction decoder fetches entire cache lines at a time. (In fact, memory access granularity is arguably at the cache line level and not the word size?) – PersonWithName Jul 29 '21 at 23:43

-

It basically just seems to me that on certain architectures, cache alignment, cache friendliness, etc. is much more important than word alignment... idk. I could easily be wrong on this since I'm not operating off of any data here (except that article I linked earlier). – PersonWithName Jul 29 '21 at 23:46

-

See https://www.agner.org/optimize/blog/read.php?i=142&v=t "On the Sandy Bridge, there is no performance penalty for reading or writing misaligned memory operands, except for the fact that it uses more cache banks so that the risk of cache conflicts is higher when the operand is misaligned. Store-to-load forwarding also works with misaligned operands in most cases." – PersonWithName Jul 29 '21 at 23:54

-

-

1@Kanony Not 100% sure, but If the CPU reads 4 bytes starting from 0x0001, it'll be unalligned, since 0x5 is not a valid memory address ( not divisible by 4 ), so the CPU has to start from 0x0 and then continue reading 4 bytes each. – vmemmap Jun 24 '22 at 08:48

-

For the ***packed version of the struct*** figure, is there a way to explicitly tell the compiler to pack the structure in a compressed format? – Erick Platero Sep 13 '22 at 13:47

-

The link is dead. Will you please provide another link? It looked like an interesting article. http://www.rcollins.org/articles/pmbasics/tspec_a1_doc.html – Joshua Ginn Feb 02 '23 at 14:37

-

For those looking for the IBM article: archive.org has a copy: https://web.archive.org/web/20201021053824/https://developer.ibm.com/technologies/systems/articles/pa-dalign/ – Zyansheep Apr 19 '23 at 21:29

It's a limitation of many underlying processors. It can usually be worked around by doing 4 inefficient single byte fetches rather than one efficient word fetch, but many language specifiers decided it would be easier just to outlaw them and force everything to be aligned.

There is much more information in this link that the OP discovered.

- 179,021

- 58

- 319

- 408

you can with some processors (the nehalem can do this), but previously all memory access was aligned on a 64-bit (or 32-bit) line, because the bus is 64 bits wide, you had to fetch 64 bit at a time, and it was significantly easier to fetch these in aligned 'chunks' of 64 bits.

So, if you wanted to get a single byte, you fetched the 64-bit chunk and then masked off the bits you didn't want. Easy and fast if your byte was at the right end, but if it was in the middle of that 64-bit chunk, you'd have to mask off the unwanted bits and then shift the data over to the right place. Worse, if you wanted a 2 byte variable, but that was split across 2 chunks, then that required double the required memory accesses.

So, as everyone thinks memory is cheap, they just made the compiler align the data on the processor's chunk sizes so your code runs faster and more efficiently at the cost of wasted memory.

- 51,617

- 12

- 104

- 148

-

*"Easy and fast if your byte was at the right end, but if it was in the middle of that 64-bit chunk,..."* Why can't you fetch a 64 bit starting from the location where you byte is located? – Mehdi Charife Nov 27 '22 at 02:15

-

@MehdiCharife because its way easier (and thus cheaper, possibly faster too) to implement the hardware memory controller in bus-sized blocks than in bytes. Access in blocks is better for caching, aligning by byte would complexify and miss the cache a lot more often. – gbjbaanb Nov 27 '22 at 19:40

-

*"Access in blocks is better for caching"* Why can't you access the block starting from the byte you want to read? – Mehdi Charife Nov 27 '22 at 19:49

-

one clarification is fine, but now you're asking questions in the comment section. – gbjbaanb Nov 27 '22 at 19:52

Fundamentally, the reason is because the memory bus has some specific length that is much, much smaller than the memory size.

So, the CPU reads out of the on-chip L1 cache, which is often 32KB these days. But the memory bus that connects the L1 cache to the CPU will have the vastly smaller width of the cache line size. This will be on the order of 128 bits.

So:

262,144 bits - size of memory

128 bits - size of bus

Misaligned accesses will occasionally overlap two cache lines, and this will require an entirely new cache read in order to obtain the data. It might even miss all the way out to the DRAM.

Furthermore, some part of the CPU will have to stand on its head to put together a single object out of these two different cache lines which each have a piece of the data. On one line, it will be in the very high order bits, in the other, the very low order bits.

There will be dedicated hardware fully integrated into the pipeline that handles moving aligned objects onto the necessary bits of the CPU data bus, but such hardware may be lacking for misaligned objects, because it probably makes more sense to use those transistors for speeding up correctly optimized programs.

In any case, the second memory read that is sometimes necessary would slow down the pipeline no matter how much special-purpose hardware was (hypothetically and foolishly) dedicated to patching up misaligned memory operations.

- 143,651

- 25

- 248

- 329

-

1*no matter how much special-purpose hardware was (hypothetically and foolishly) dedicated to patching up misaligned memory operations* - Modern Intel CPUs, please stand up and /wave. :P Fully efficient handling of misaligned 256-bit AVX loads (as long as they don't cross a cache-line boundary) is convenient for software. Even split loads aren't too bad, with Skylake greatly improving the penalty for page-split loads/stores, from ~100 cycles down to ~10. (Which will happen if vectorizing over an unaligned buffer, with a loop that doesn't spend extra startup / cleanup code aligning pointers) – Peter Cordes Aug 19 '20 at 08:53

-

1AVX512 CPUs with 512-bit paths between L1d cache and load/store execution units do suffer significantly more from misaligned pointers because *every* load is misaligned, instead of every other. – Peter Cordes Aug 19 '20 at 08:53

@joshperry has given an excellent answer to this question. In addition to his answer, I have some numbers that show graphically the effects which were described, especially the 2X amplification. Here's a link to a Google spreadsheet showing what the effect of different word alignments look like. In addition here's a link to a Github gist with the code for the test. The test code is adapted from the article written by Jonathan Rentzsch which @joshperry referenced. The tests were run on a Macbook Pro with a quad-core 2.8 GHz Intel Core i7 64-bit processor and 16GB of RAM.

- 1,150

- 12

- 17

-

5

-

2

-

OMG! memcpy function is specially optimized to work with unaligned data! Such tests has no sense! – Kirill Frolov Dec 29 '21 at 15:28

If you have a 32bit data bus, the address bus address lines connected to the memory will start from A2, so only 32bit aligned addresses can be accessed in a single bus cycle.

So if a word spans an address alignment boundary - i.e. A0 for 16/32 bit data or A1 for 32 bit data are not zero, two bus cycles are required to obtain the data.

Some architectures/instruction sets do not support unaligned access and will generate an exception on such attempts, so compiler generated unaligned access code requires not just additional bus cycles, but additional instructions, making it even less efficient.

- 88,407

- 13

- 85

- 165

If a system with byte-addressable memory has a 32-bit-wide memory bus, that means there are effectively four byte-wide memory systems which are all wired to read or write the same address. An aligned 32-bit read will require information stored in the same address in all four memory systems, so all systems can supply data simultaneously. An unaligned 32-bit read would require some memory systems to return data from one address, and some to return data from the next higher address. Although there are some memory systems that are optimized to be able to fulfill such requests (in addition to their address, they effectively have a "plus one" signal which causes them to use an address one higher than specified) such a feature adds considerable cost and complexity to a memory system; most commodity memory systems simply cannot return portions of different 32-bit words at the same time.

- 77,689

- 9

- 166

- 211

On PowerPC you can load an integer from an odd address with no problems.

Sparc and I86 and (I think) Itatnium raise hardware exceptions when you try this.

One 32 bit load vs four 8 bit loads isnt going to make a lot of difference on most modern processors. Whether the data is already in cache or not will have a far greater effect.

- 88,407

- 13

- 85

- 165

- 27,109

- 7

- 50

- 78

-

1On Sparc, this was a "Bus error", hence the chapter "Bus error, Take the train" in Peter Van der Linden's "Expert C Programming: Deep C Secrets" – jjg Apr 01 '20 at 19:35

-

It says [here](https://developer.ibm.com/technologies/systems/articles/pa-dalign/) that the PowerPC can handle 32-bit unaligned data raises a hardware exception for 64-bit data. – Harsh Aug 21 '20 at 10:44