How do I collect all the data from a Node.js stream into a string?


-

You should copy the stream or flag it with (autoClose: false). It is bad practice to pollute the memory. – 19h Jun 01 '13 at 17:42

21 Answers

Another way would be to convert the stream to a promise (refer to the example below) and use then (or await) to assign the resolved value to a variable.

function streamToString (stream) {

const chunks = [];

return new Promise((resolve, reject) => {

stream.on('data', (chunk) => chunks.push(Buffer.from(chunk)));

stream.on('error', (err) => reject(err));

stream.on('end', () => resolve(Buffer.concat(chunks).toString('utf8')));

})

}

const result = await streamToString(stream)


-

I'm really new to streams and promises and I'm getting this error: `SyntaxError: await is only valid in async function`. What am I doing wrong? – JohnK Jul 26 '19 at 14:55

-

You have to call the `streamToString` function within an async function. To avoid this, you can also do `streamToString(stream).then(function(response){//Do whatever you want with response});` – Enclo Creations Aug 04 '19 at 07:29
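The two calling styles from the comments above can be sketched as follows. This is only an illustration, assuming a CommonJS script (where top-level `await` is not available); `main` is a made-up name and `Readable.from` is used purely to build a stand-in stream.

```javascript
const { Readable } = require('node:stream');

// Same helper as in the answer above.
function streamToString(stream) {
  const chunks = [];
  return new Promise((resolve, reject) => {
    stream.on('data', (chunk) => chunks.push(Buffer.from(chunk)));
    stream.on('error', (err) => reject(err));
    stream.on('end', () => resolve(Buffer.concat(chunks).toString('utf8')));
  });
}

// Option 1: wrap the call in an async function so that `await` is legal.
async function main() {
  const result = await streamToString(Readable.from(['hello ', 'world']));
  console.log(result);
  return result;
}

// Option 2: skip `await` and chain on the returned promise instead.
streamToString(Readable.from(['hello ', 'again']))
  .then((result) => console.log(result))
  .catch((err) => console.error(err));

main().catch((err) => console.error(err));
```

In an ES module (or a recent REPL), top-level `await` may work directly, so the wrapper function is only needed in CommonJS-style scripts.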

-

**This should be the top answer.** Congratulations on producing the only solution that gets everything right, with (1) storing the chunks as Buffers and only calling `.toString("utf8")` at the end, to avoid the problem of a decode failure if a chunk is split in the middle of a multibyte character; (2) actual error handling; (3) putting the code in a function, so it can be reused, not copy-pasted; (4) using Promises so the function can be `await`-ed on; (5) small code that doesn't drag in a million dependencies, unlike certain npm libraries; (6) ES6 syntax and modern best practices. – MultiplyByZer0 Sep 15 '19 at 18:47

-

After I came up with essentially the same code using the current top answer as a hint, I noticed that the above code could fail with `Uncaught TypeError [ERR_INVALID_ARG_TYPE]: The "list[0]" argument must be an instance of Buffer or Uint8Array. Received type string` if the stream produces `string` chunks instead of `Buffer`. Using `chunks.push(Buffer.from(chunk))` should work with both `string` and `Buffer` chunks. – Andrei LED Jun 23 '20 at 21:53

-

Turns out the actual best answer came late to the party: https://stackoverflow.com/a/63361543/1677656 – Rafael Beckel Dec 11 '20 at 16:23

-

`chunks.push(Buffer.from(chunk))` gives a TypeScript error: `Argument type Buffer | string is not assignable to parameter type WithImplicitCoercion`. Is this a valid error or are the types broken? – blub Mar 15 '21 at 18:58

-

What's the reason for [this edit](https://stackoverflow.com/revisions/49428486/3)? I write my code more similarly to the previous revision (directly passing `reject` as the callback instead of calling it)--will that run into errors? – ban_javascript Jun 05 '21 at 06:20

-

@ban_javascript The reasoning behind the edit is that it is _generally_ considered bad practice to pass a callback to a function that was not designed for it. There was nothing wrong with the previous version, though - it should work just fine. One could argue it might break if the Promise.resolve API ever changes, but that's very unlikely to happen. I've just edited it in order to encourage what I see as best practices. – Marlon Bernardes Jun 11 '21 at 07:58

-

If you read a big text file and process the data as it arrives, a chunk boundary can fall in the middle of a multi-byte character. You need to carry the incomplete trailing bytes of the previous chunk over and decode them together with the next chunk. To do that, use [string_decoder](https://nodejs.org/docs/latest-v14.x/api/string_decoder.html). Here's a [usage example](https://stackoverflow.com/a/12122668/8289532). Edit: I'd advise streaming it line by line if you need to work with the file data: `readline.createInterface({ input: stream, crlfDelay: Infinity })` – Marius Mircea Aug 31 '21 at 21:28
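A small sketch of what the `string_decoder` approach in the comment above looks like, assuming UTF-8 input. `decodeChunks` is an illustrative name, and the two-part split of `€` simulates a chunk boundary landing inside a multi-byte character.

```javascript
const { StringDecoder } = require('node:string_decoder');

// Decode chunks one at a time; the decoder holds back incomplete
// multi-byte sequences until the remaining bytes arrive.
function decodeChunks(chunks) {
  const decoder = new StringDecoder('utf8');
  let text = '';
  for (const chunk of chunks) {
    text += decoder.write(chunk);
  }
  return text + decoder.end(); // flush anything still buffered
}

const euro = Buffer.from('€', 'utf8'); // three bytes: e2 82 ac
const parts = [euro.subarray(0, 1), euro.subarray(1)];
console.log(decodeChunks(parts) === '€');
```

This keeps memory use low for large inputs because each chunk can be processed as soon as it is decoded, instead of concatenating everything first.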

-

In my case I was happy with `const packagePath = execSync("rospack find my_package").toString()`. – Philippe May 05 '22 at 12:26

What do you think about this?

async function streamToString(stream) {

// `stream` is assumed to be a Readable stream

const chunks = [];

for await (const chunk of stream) {

chunks.push(Buffer.from(chunk));

}

return Buffer.concat(chunks).toString("utf-8");

}

-

What a nice and unexpected solution! You are a Mozart of javascript streams and buffers! – zevero Nov 15 '20 at 22:27

-

Had to use `chunks.push(Buffer.from(chunk));` to make it work with string chunks. – Jan Mar 01 '21 at 11:21

-

Wow, this looks very neat! Does this have any problems (aside from the one mentioned in the above comment)? Can it handle errors? – ban_javascript Jun 05 '21 at 06:40
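On the error-handling question above: as far as I know, when a Readable stream is destroyed with an error, the `for await` loop throws that error, so the returned promise rejects and an ordinary `try`/`catch` is enough. A minimal sketch, where `makeFailingStream` is a hypothetical helper that only exists to trigger a failure:

```javascript
const { Readable } = require('node:stream');

async function streamToString(stream) {
  const chunks = [];
  for await (const chunk of stream) {
    chunks.push(Buffer.from(chunk));
  }
  return Buffer.concat(chunks).toString('utf8');
}

// Hypothetical stream that fails as soon as it is read.
function makeFailingStream() {
  return new Readable({
    read() {
      this.destroy(new Error('boom'));
    },
  });
}

async function main() {
  try {
    await streamToString(makeFailingStream());
  } catch (err) {
    // The stream error surfaces here as a normal exception.
    console.error('stream failed:', err.message);
  }
}

main();
```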

-

This is the modern equivalent to the top answer. Whew Node.js/JS changes fast. I'd recommend using this one as opposed to the top rated one as it's much cleaner and doesn't make the user have to touch events. – Epic Speedy Jun 16 '21 at 11:38

-

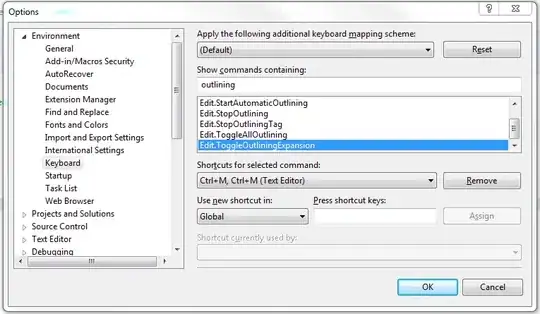

@DirkSchumacher Your IDE either uses an outdated script interpreter ([`for await` is valid ECMAScript syntax](https://tc39.es/ecma262/multipage/ecmascript-language-statements-and-declarations.html#sec-for-in-and-for-of-statements)) or is itself outdated if it attempts to (unsuccessfully) execute some code containing `for await`. Which IDE is it? Anyway, IDEs aren't designed to actually run programs "in production", they lint them and help with analysis during development. – Armen Michaeli Aug 12 '21 at 16:40

-

@amn I am running the most up-to-date IntelliJ Ultimate. Though it is more of a Java IDE, it knows some web stuff as well. I am sitting behind a company firewall, so this and other things may be the issue. Thanks for your advice/info/idea! – Dirk Schumacher Aug 13 '21 at 08:54

-

@DirkSchumacher No bother. Just see if you can find out _exactly_ what component of your IDE -- I assume it will be a program -- loads and executes the script containing `for await`. Query the version of the program and find out if the version actually supports the syntax. Then find out why your IDE is using the particular "outdated" version of the program and find a way to update both. – Armen Michaeli Aug 13 '21 at 10:48

-

FYI, had to `push` the chunk using `Buffer.from`, ex. `chunks.push(Buffer.from(chunk))`, but otherwise a nice and elegant solution! thanks! – thescientist Aug 18 '21 at 17:14

None of the above worked for me. I needed to use the Buffer object:

const chunks = [];

readStream.on("data", function (chunk) {

chunks.push(chunk);

});

// Send the buffer or you can put it into a var

readStream.on("end", function () {

res.send(Buffer.concat(chunks));

});

-

Works great. Just a note: if you want a proper string type, you will need to call `.toString()` on the Buffer object returned by the `concat()` call – Bryan Johnson Sep 25 '17 at 14:30

-

Turns out the actual best answer came late to the party: https://stackoverflow.com/a/63361543/1677656 – Rafael Beckel Dec 11 '20 at 16:23

-

Hope this is more useful than the above answer:

var string = '';

stream.on('data',function(data){

string += data.toString();

console.log('stream data ' + data);

});

stream.on('end',function(){

console.log('final output ' + string);

});

Note that string concatenation is not the most efficient way to collect the string parts, but it is used for simplicity (and perhaps your code does not care about efficiency).

Also, this code may produce unpredictable failures for non-ASCII text: a multi-byte character that is split across two chunks gets decoded incorrectly. Perhaps you do not care about that, either.

-

You could use a buffer https://docs.nodejitsu.com/articles/advanced/buffers/how-to-use-buffers but it really depends on your use. – Tom Carchrae Aug 27 '15 at 13:43

-

Use an array of strings where you append each new chunk to the array and call `join("")` on the array at the end. – Valeriu Paloş Mar 08 '16 at 07:22

-

This isn't right. If the buffer ends halfway through a multi-byte code point, then the toString() will receive malformed utf-8 and you will end up with a bunch of � in your string. – alextgordon Oct 16 '16 at 22:03

-

I'm not sure what you mean by a 'multi-byte code point', but if you want to convert the encoding of the stream you can pass an encoding parameter like this: `toString('utf8')`. By default the string encoding is `utf8`, so I suspect that your stream may not be `utf8` @alextgordon - see http://stackoverflow.com/questions/12121775/convert-streamed-buffers-to-utf8-string for more – Tom Carchrae Oct 18 '16 at 13:41

-

@alextgordon is right. In some very rare cases when I had a lot of chunks I got those � at the start and end of chunks, especially when there were Russian symbols on the edges. So it's correct to concat chunks and convert them on end instead of converting chunks and concatenating them. In my case the request was made from one service to another with request.js with default encoding – Mike Yermolayev Apr 22 '20 at 10:17
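The effect discussed in these comments can be reproduced without any stream at all. In this sketch the two-way split of `€` stands in for an unlucky chunk boundary; the variable names are illustrative.

```javascript
const euro = Buffer.from('€', 'utf8'); // three bytes: e2 82 ac

// Simulate a stream that delivers the character split across two chunks.
const chunks = [euro.subarray(0, 1), euro.subarray(1)];

// Decoding each chunk separately corrupts the character...
const perChunk = chunks.map((c) => c.toString('utf8')).join('');

// ...while concatenating the bytes first decodes it correctly.
const concatFirst = Buffer.concat(chunks).toString('utf8');

console.log(perChunk === '€');    // false: contains U+FFFD replacement characters
console.log(concatFirst === '€'); // true
```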

(This answer is from years ago, when it was the best answer. There is now a better answer below this. I haven't kept up with node.js, and I cannot delete this answer because it is marked "correct" on this question. If you are thinking of downvoting, what do you want me to do?)

The key is to use the data and end events of a Readable Stream. Listen to these events:

stream.on('data', (chunk) => { ... });

stream.on('end', () => { ... });

When you receive the data event, add the new chunk of data to a Buffer created to collect the data.

When you receive the end event, convert the completed Buffer into a string, if necessary. Then do what you need to do with it.
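A couple of lines illustrating the description above, as a rough callback-style sketch. `collect` is a made-up name, and the `error` handler is an addition that the text above does not mention.

```javascript
const { Readable } = require('node:stream');

function collect(stream, callback) {
  const chunks = [];
  // 'data': keep each chunk as a Buffer.
  stream.on('data', (chunk) => chunks.push(Buffer.from(chunk)));
  // 'error': report failures instead of leaving the caller waiting.
  stream.on('error', (err) => callback(err));
  // 'end': join the collected Buffers and convert them to a string once.
  stream.on('end', () => callback(null, Buffer.concat(chunks).toString('utf8')));
}

collect(Readable.from([Buffer.from('foo'), Buffer.from('bar')]), (err, text) => {
  if (err) throw err;
  console.log(text);
});
```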


-

A couple of lines of code illustrating the answer is preferable to just pointing a link at the API. Don't disagree with the answer, just don't believe it is complete enough. – arcseldon Jun 04 '14 at 05:14

-

With newer node.js versions, this is cleaner: http://stackoverflow.com/a/35530615/271961 – Simon A. Eugster Dec 07 '16 at 11:28

-

The answer should be updated to not recommend using a Promises library, but use native Promises. – Dan Dascalescu Mar 22 '19 at 01:27

-

@DanDascalescu I agree with you. The problem is that I wrote this answer 7 years ago, and I haven't kept up with node.js. If you or someone else would like to update it, that would be great. Or I could simply delete it, as there seems to be a better answer already. What would you recommend? – ControlAltDel Mar 25 '19 at 13:30

-

@ControlAltDel: I appreciate your initiative to delete an answer that is no longer the best. Wish others had similar [discipline](https://meta.stackexchange.com/questions/7609/what-is-the-purpose-of-the-disciplined-badge). – Dan Dascalescu Mar 26 '19 at 02:10

-

@DanDascalescu Unfortunately, when I tried to delete, SO won't let me as it is the selected answer. – ControlAltDel Mar 26 '19 at 13:57

-

Turns out the actual best answer came late to the party: https://stackoverflow.com/a/63361543/1677656 – Rafael Beckel Dec 11 '20 at 16:23

I usually use this simple function to transform a stream into a string:

function streamToString(stream, cb) {

const chunks = [];

stream.on('data', (chunk) => {

chunks.push(chunk.toString());

});

stream.on('end', () => {

cb(chunks.join(''));

});

}

Usage example:

let stream = fs.createReadStream('./myFile.foo');

streamToString(stream, (data) => {

console.log(data); // data is now my string variable

});


-

Useful answer but it looks like each chunk must be converted to a string before it is pushed in the array: `chunks.push(chunk.toString());` – Nicolas Le Thierry d'Ennequin Jun 07 '16 at 10:06

-

There is an edge case here when a multi-byte character is split between chunks. This results in the original character being replaced with two incorrect characters. – PhilB Sep 10 '22 at 02:50

And yet another one for strings using promises:

function getStream(stream) {

return new Promise((resolve, reject) => {

const chunks = [];

// Buffer.from is required if chunk is a string; see comments

stream.on("data", chunk => chunks.push(Buffer.from(chunk)));

// reject on stream errors so the promise cannot hang forever

stream.on("error", reject);

stream.on("end", () => resolve(Buffer.concat(chunks).toString()));

});

}

Usage:

const stream = fs.createReadStream(__filename);

getStream(stream).then(r=>console.log(r));

Remove the `.toString()` call to get binary data if required.

Update: @AndreiLED correctly pointed out that this has problems with strings. I couldn't get a stream to return strings with the version of node I have, but the API docs note that this is possible.


-

I have noticed that the above code could fail with `Uncaught TypeError [ERR_INVALID_ARG_TYPE]: The "list[0]" argument must be an instance of Buffer or Uint8Array. Received type string` if the stream produces `string` chunks instead of `Buffer`. Using `chunks.push(Buffer.from(chunk))` should work with both `string` and `Buffer` chunks. – Andrei LED Jun 23 '20 at 21:50

According to the Node.js documentation, you should do this. Always remember that a string without a known encoding is just a bunch of bytes:

var readable = getReadableStreamSomehow();

readable.setEncoding('utf8');

readable.on('data', function(chunk) {

assert.equal(typeof chunk, 'string');

console.log('got %d characters of string data', chunk.length);

})


Easy way with the popular (over 5m weekly downloads) and lightweight get-stream library:

https://www.npmjs.com/package/get-stream

const fs = require('fs');

const getStream = require('get-stream');

(async () => {

const stream = fs.createReadStream('unicorn.txt');

console.log(await getStream(stream)); //output is string

})();


Streams don't have a simple .toString() function (which I understand) nor something like a .toStringAsync(cb) function (which I don't understand).

So I created my own helper function:

var streamToString = function(stream, callback) {

var str = '';

stream.on('data', function(chunk) {

str += chunk;

});

stream.on('end', function() {

callback(str);

});

}

// how to use:

streamToString(myStream, function(myStr) {

console.log(myStr);

});


I had more luck doing it like this:

let string = '';

readstream

.on('data', (buf) => string += buf.toString())

.on('end', () => console.log(string));

I use node v9.11.1, and the readstream is the response from an http.get callback.


What about something like a stream reducer?

Here is an example of how to use one, written with ES6 classes.

var stream = require('stream')

class StreamReducer extends stream.Writable {

constructor(chunkReducer, initialvalue, cb) {

super();

this.reducer = chunkReducer;

this.accumulator = initialvalue;

this.cb = cb;

}

_write(chunk, enc, next) {

this.accumulator = this.reducer(this.accumulator, chunk);

next();

}

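// _final runs after all chunks are written; overriding end() would bypass the Writable's own shutdown

_final(done) {

this.cb(null, this.accumulator);

done();

}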

}

// just a test stream

class EmitterStream extends stream.Readable {

constructor(chunks) {

super();

this.chunks = chunks;

}

_read() {

this.chunks.forEach(function (chunk) {

this.push(chunk);

}.bind(this));

this.push(null);

}

}

// just transform the strings into buffer as we would get from fs stream or http request stream

(new EmitterStream(

["hello ", "world !"]

.map(function(str) {

return Buffer.from(str, 'utf8');

})

)).pipe(new StreamReducer(

function (acc, v) {

acc.push(v);

return acc;

},

[],

function(err, chunks) {

console.log(Buffer.concat(chunks).toString('utf8'));

})

);


The cleanest solution may be to use the "stream-string" package, which converts a stream to a string with a promise.

const streamString = require('stream-string')

streamString(myStream).then(string_variable => {

// myStream was converted to a string, and that string is stored in string_variable

console.log(string_variable)

}).catch(err => {

// myStream emitted an error event (err), so the promise from stream-string was rejected

throw err

})


All of the answers listed appear to switch the Readable stream into flowing mode, which is not the default in Node.js and can be limiting because it lacks the backpressure support that Node.js provides in paused mode. Here is an implementation that uses only Buffers, native streams, and native stream Transforms, with support for object mode:

import {Transform} from 'stream';

let buffer = null;

function objectifyStream() {

return new Transform({

objectMode: true,

transform: function(chunk, encoding, next) {

if (!buffer) {

buffer = Buffer.from([...chunk]);

} else {

buffer = Buffer.from([...buffer, ...chunk]);

}

next(null, buffer);

}

});

}

process.stdin.pipe(objectifyStream()).pipe(process.stdout);


Even though this question was asked 10 years ago, I think it's important to add my answer, since a couple of popular answers do not take into account the official Node.js docs (https://nodejs.org/api/stream.html#readablesetencodingencoding), which say:

The Readable stream will properly handle multi-byte characters delivered through the stream that would otherwise become improperly decoded if simply pulled from the stream as Buffer objects.

For that reason, here are the two most popular answers, modified to let the stream do the decoding:

function streamToString(stream) {

stream.setEncoding('utf-8'); // do this instead of directly converting the string

const chunks = [];

return new Promise((resolve, reject) => {

stream.on('data', (chunk) => chunks.push(chunk));

stream.on('error', (err) => reject(err));

stream.on('end', () => resolve(chunks.join("")));

})

}

const result = await streamToString(stream)

or:

async function streamToString(stream) {

stream.setEncoding('utf-8'); // do this instead of directly converting the string

// input must be stream with readable property

const chunks = [];

for await (const chunk of stream) {

chunks.push(chunk);

}

return chunks.join("");

}


setEncoding('utf8');

Well done Sebastian J above.

I had the "buffer problem" in a few lines of test code, and adding the encoding information solved it. See below.

Demonstrate the problem

software

// process.stdin.setEncoding('utf8');

process.stdin.on('data', (data) => {

console.log(typeof(data), data);

});

input

hello world

output

object <Buffer 68 65 6c 6c 6f 20 77 6f 72 6c 64 0d 0a>

Demonstrate the solution

software

process.stdin.setEncoding('utf8'); // <- Activate!

process.stdin.on('data', (data) => {

console.log(typeof(data), data);

});

input

hello world

output

string hello world


This worked for me and is based on Node v6.7.0 docs:

let output = '';

stream.on('readable', function() {

let read = stream.read();

if (read !== null) {

// New stream data is available

output += read.toString();

} else {

// Stream is now finished when read is null.

// You can callback here e.g.:

callback(null, output);

}

});

stream.on('error', function(err) {

callback(err, null);

})
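A slightly more defensive variant of the paused-mode approach above, offered as a sketch and not as the documented recipe: it drains the buffer with a `while` loop on each `readable` event, waits for `end` instead of treating a `null` read as the finish signal, and only decodes once at the end. `readAll` is an illustrative name.

```javascript
const { Readable } = require('node:stream');

function readAll(stream, callback) {
  const chunks = [];
  stream.on('readable', () => {
    let chunk;
    // Drain everything currently buffered; read() returns null when empty.
    while ((chunk = stream.read()) !== null) {
      chunks.push(Buffer.from(chunk));
    }
  });
  // 'end' fires once all data has been consumed.
  stream.on('end', () => callback(null, Buffer.concat(chunks).toString('utf8')));
  stream.on('error', (err) => callback(err));
}

readAll(Readable.from([Buffer.from('paused '), Buffer.from('mode')]), (err, output) => {
  if (err) throw err;
  console.log(output);
});
```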


Using the quite popular stream-buffers package which you probably already have in your project dependencies, this is pretty straightforward:

// imports

const { WritableStreamBuffer } = require('stream-buffers');

const { promisify } = require('util');

const { createReadStream } = require('fs');

const pipeline = promisify(require('stream').pipeline);

// sample stream

let stream = createReadStream('/etc/hosts');

// pipeline the stream into a buffer, and print the contents when done

let buf = new WritableStreamBuffer();

pipeline(stream, buf).then(() => console.log(buf.getContents().toString()));


In my case, the response's Content-Type header was `text/plain`, so I read the data from the Buffer like this:

let data = [];

stream.on('data', (chunk) => {

console.log(Buffer.from(chunk).toString())

data.push(Buffer.from(chunk).toString())

});


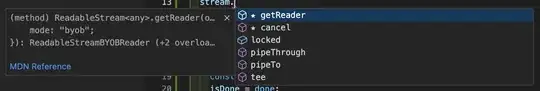

If your stream doesn't have methods like `.on(` and `.setEncoding(`, then you have the newer "web fetch standards-based" ReadableStream: https://github.com/nodejs/undici/blob/c83b084879fa0bb8e0469d31ec61428ac68160d5/README.md#responsebody

You can simply do this:

const str = await new Response(request.body).text();

(I 100% just copied this from another similar SO question: https://stackoverflow.com/a/74237249/565877)
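To try this outside a request handler, here is a small self-contained sketch. It assumes a Node.js version where `ReadableStream`, `Response`, and `TextEncoder` are globals (Node 18 or later, as far as I know); `makeWebStream` is a made-up helper standing in for `request.body`.

```javascript
// Hypothetical stand-in for request.body: a web ReadableStream of bytes.
function makeWebStream() {
  const encoder = new TextEncoder();
  return new ReadableStream({
    start(controller) {
      controller.enqueue(encoder.encode('hello '));
      controller.enqueue(encoder.encode('web streams'));
      controller.close();
    },
  });
}

// Wrapping the stream in a Response gives access to .text().
new Response(makeWebStream())
  .text()
  .then((str) => console.log(str));
```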

The rest of my answer here just provides additional context on my situation, which may help catch certain keywords in google.

=====

I'm trying to convert a request.body (ReadableStream) in a SolidStart api route handler to a string: https://start.solidjs.com/core-concepts/api-routes

SolidStart takes a very isomorphic approach.

When I attempted to use the code in https://stackoverflow.com/a/63361543/565877, I got this error:

Type 'ReadableStream<any>' must have a '[Symbol.asyncIterator]()' method that returns an async iterator.ts(2504)

SolidStart uses undici as its isomorphic fetch implementation, and newer versions of node have basically used this library for node's fetch implementation. I will be the first to admit, this doesn't seem like a "node.js stream", but in some sense it is: it's just a newer ReadableStream standard that comes with node's native fetch implementation.

For more insight, these are the methods/properties available on this request.body:


It's easiest using Node.js built-in streamConsumers.text:

import { text } from 'node:stream/consumers';

import { Readable } from 'node:stream';

const readable = Readable.from('Hello world from consumers!');

const string = await text(readable);
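The same module also exports `buffer` and `json` consumers, which may save a step when the payload isn't plain text. A rough sketch in CommonJS form (so `require` instead of `import`, and no top-level `await`); `demo` and the sample payloads are illustrative.

```javascript
const { Readable } = require('node:stream');
const { buffer, json } = require('node:stream/consumers');

async function demo() {
  // json() collects the whole stream and parses it as JSON.
  const parsed = await json(
    Readable.from([Buffer.from('{"answer":'), Buffer.from('42}')])
  );
  // buffer() collects the whole stream into a single Buffer.
  const raw = await buffer(Readable.from([Buffer.from('ab'), Buffer.from('cd')]));
  return { parsed, rawLength: raw.length };
}

demo().then((out) => console.log(out));
```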
