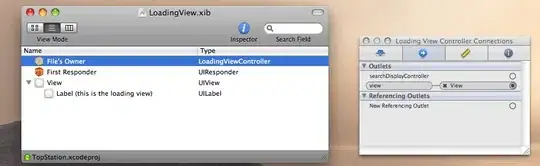

I am having trouble getting a CUDA kernel to run concurrently with CPU work. Take a look at the attached image. The kernel is launched at the marked point, at 0.395 seconds. It is followed by some CPU work (the green CpuWork range) and, finally, by a call to cudaDeviceSynchronize. The kernel that is launched before CpuWork does not start executing until the synchronize call. Ideally, it should run in parallel with the CPU work. This is the function that launches the kernel:

    void KdTreeGpu::traceRaysOnGpuAsync(int firstRayIndex, int numRays, int rank, int buffer)
    {
        int per_block = 128;
        int num_blocks = numRays/per_block + (numRays%per_block==0?0:1);

        Ray* rays = &this->deviceRayPtr[firstRayIndex];
        int* outputHitPanelIds = &this->deviceHitPanelIdPtr[firstRayIndex];

        kdTreeTraversal<<<num_blocks, per_block, 0>>>(sceneBoundingBox, rays, deviceNodesPtr, deviceTrianglesListPtr,
                                                      firstRayIndex, numRays, rank, rootNodeIndex,
                                                      deviceTHitPtr, outputHitPanelIds, deviceReflectionPtr);

        CUDA_VALIDATE(cudaMemcpyAsync(resultHitDistances[buffer], deviceTHitPtr, numRays*sizeof(double), cudaMemcpyDeviceToHost));
        CUDA_VALIDATE(cudaMemcpyAsync(resultHitPanelIds[buffer], outputHitPanelIds, numRays*sizeof(int), cudaMemcpyDeviceToHost));
        CUDA_VALIDATE(cudaMemcpyAsync(resultReflections[buffer], deviceReflectionPtr, numRays*sizeof(Vector3), cudaMemcpyDeviceToHost));
    }

The memory copies are asynchronous. The result buffers are allocated like this:

    unsigned int flag = cudaHostAllocPortable;
    CUDA_VALIDATE(cudaHostAlloc(&resultHitPanelIds[0], MAX_RAYS_PER_ITERATION*sizeof(int), flag));
    CUDA_VALIDATE(cudaHostAlloc(&resultHitPanelIds[1], MAX_RAYS_PER_ITERATION*sizeof(int), flag));

I am hoping someone can suggest a solution. I have tried many things, including launching in a stream other than the default stream. After I switched the result buffers to cudaHostAlloc, I noticed that the async function now returns control to the CPU right away. But that does not help when the kernel does not start until the cudaDeviceSynchronize call later.
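To make the intended pattern concrete, here is a minimal, self-contained sketch of a kernel and its copies queued on a non-default stream with pinned host memory, followed by CPU work and a wait on that stream only. It is an illustration under assumptions, not the code from this post: `scaleKernel` and `runOverlapDemo` are hypothetical names, and the trivial kernel stands in for `kdTreeTraversal`. The sketch only checks that the results are correct; whether the kernel actually overlaps with the CPU loop still has to be confirmed in the profiler timeline.

```cuda
#include <cuda_runtime.h>

// Hypothetical stand-in for the real traversal kernel: doubles each element.
__global__ void scaleKernel(float* data, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) data[i] *= 2.0f;
}

// Queues copy-in, kernel, and copy-out on one non-default stream, does CPU
// work while they are in flight, then waits for that stream only.
// Returns true if both the GPU results and the CPU result are correct.
bool runOverlapDemo(int n)
{
    float* hostBuf = nullptr;      // pinned host memory, needed for truly async copies
    float* devBuf = nullptr;
    cudaStream_t stream = nullptr;
    size_t bytes = n * sizeof(float);

    bool ok = cudaHostAlloc((void**)&hostBuf, bytes, cudaHostAllocPortable) == cudaSuccess
           && cudaMalloc((void**)&devBuf, bytes) == cudaSuccess
           && cudaStreamCreate(&stream) == cudaSuccess;

    if (ok) {
        for (int i = 0; i < n; ++i) hostBuf[i] = float(i);

        int threads = 128;
        int blocks = (n + threads - 1) / threads;

        // All three operations are ordered within `stream` and return immediately.
        cudaMemcpyAsync(devBuf, hostBuf, bytes, cudaMemcpyHostToDevice, stream);
        scaleKernel<<<blocks, threads, 0, stream>>>(devBuf, n);
        cudaMemcpyAsync(hostBuf, devBuf, bytes, cudaMemcpyDeviceToHost, stream);

        // CPU work that does not touch hostBuf while the GPU may still be using it.
        double cpuSum = 0.0;
        for (int i = 0; i < n; ++i) cpuSum += i;

        // Wait for this stream only, instead of cudaDeviceSynchronize().
        ok = cudaStreamSynchronize(stream) == cudaSuccess;
        ok = ok && cpuSum == 0.5 * n * (n - 1.0);
        for (int i = 0; ok && i < n; ++i) ok = (hostBuf[i] == 2.0f * i);
    }

    if (stream) cudaStreamDestroy(stream);
    cudaFree(devBuf);
    cudaFreeHost(hostBuf);
    return ok;
}
```

Calling `runOverlapDemo(1 << 20)` under the profiler, with an NVTX range around the CPU loop, gives a small test case to compare against the timeline in the image.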

resultHitDistances holds two separately allocated buffers (indices 0 and 1), so that while the CPU is reading the results in buffer 0, the GPU can write the next results to buffer 1, and vice versa.
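The two-buffer scheme described above can be sketched as follows. This is a simplified illustration under assumptions rather than the actual ray tracer: `fillKernel`, `enqueueChunk`, and `runPingPong` are hypothetical names, the kernel just writes each element's global index, and one event per buffer replaces the device-wide wait so the CPU blocks only on the chunk it is about to read.

```cuda
#include <cuda_runtime.h>

// Hypothetical placeholder for a per-ray result: writes the global index.
__global__ void fillKernel(int* out, int first, int count)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < count) out[i] = first + i;
}

// Queues one chunk on stream s: kernel, async copy into a pinned host
// buffer, and an event that fires once that copy has finished.
static void enqueueChunk(int* dev, int* host, int first, int count,
                         cudaStream_t s, cudaEvent_t done)
{
    int threads = 128;
    int blocks = (count + threads - 1) / threads;
    fillKernel<<<blocks, threads, 0, s>>>(dev + first, first, count);
    cudaMemcpyAsync(host, dev + first, count * sizeof(int), cudaMemcpyDeviceToHost, s);
    cudaEventRecord(done, s);
}

// Processes `total` elements in chunks, alternating between two host buffers:
// the CPU consumes buffer c % 2 while the GPU fills buffer (c + 1) % 2.
// Returns the sum of all results read back on the CPU.
long long runPingPong(int total, int chunk)
{
    int* dev = nullptr;
    int* host[2] = { nullptr, nullptr };
    cudaEvent_t done[2];
    cudaStream_t s;

    cudaMalloc((void**)&dev, total * sizeof(int));
    cudaStreamCreate(&s);
    for (int b = 0; b < 2; ++b) {
        cudaHostAlloc((void**)&host[b], chunk * sizeof(int), cudaHostAllocPortable);
        cudaEventCreateWithFlags(&done[b], cudaEventDisableTiming);
    }

    int numChunks = (total + chunk - 1) / chunk;
    long long sum = 0;

    // Prime the pipeline with chunk 0 in buffer 0.
    enqueueChunk(dev, host[0], 0, total < chunk ? total : chunk, s, done[0]);

    for (int c = 0; c < numChunks; ++c) {
        int cur = c % 2;
        int next = (c + 1) % 2;
        int curCount = (total - c * chunk < chunk) ? total - c * chunk : chunk;

        // Wait only for the chunk the CPU is about to read.
        cudaEventSynchronize(done[cur]);

        // Queue the next chunk before starting the CPU work on the current one.
        int nextFirst = (c + 1) * chunk;
        if (nextFirst < total) {
            int nextCount = (total - nextFirst < chunk) ? total - nextFirst : chunk;
            enqueueChunk(dev, host[next], nextFirst, nextCount, s, done[next]);
        }

        // CPU work on the current buffer.
        for (int i = 0; i < curCount; ++i) sum += host[cur][i];
    }

    for (int b = 0; b < 2; ++b) {
        cudaEventDestroy(done[b]);
        cudaFreeHost(host[b]);
    }
    cudaStreamDestroy(s);
    cudaFree(dev);
    return sum;
}
```

Because every chunk goes through the same stream, chunk c+1 cannot start on the GPU before the copy of chunk c has finished, so the two host buffers are never written and read at the same time.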

Thanks!

Edit: This is the code that calls traceRaysOnGpuAsync:

    int numIterations = ceil(float(this->numPrimaryRays) / MAX_RAYS_PER_ITERATION);
    int numRaysPrevious = min(MAX_RAYS_PER_ITERATION, this->numPrimaryRays);

    nvtxRangePushA("traceRaysOnGpuAsync First");
    traceRaysOnGpuAsync(0, numRaysPrevious, rank, 0);
    nvtxRangePop();

    for(int iteration = 0; iteration < numIterations; iteration++)
    {
        int rayFrom = (iteration+1)*MAX_RAYS_PER_ITERATION;
        int rayTo = min((iteration+2)*MAX_RAYS_PER_ITERATION, this->numPrimaryRays) - 1;
        int numRaysIteration = rayTo-rayFrom+1;

        // Wait for results to finish and get them
        waitForGpu();

        // Trace the next iteration asynchronously. This will have data prepared for next iteration
        if(numRaysIteration > 0)
        {
            int nextBuffer = (iteration+1) % 2;
            nvtxRangePushA("traceRaysOnGpuAsync Interior");
            traceRaysOnGpuAsync(rayFrom, numRaysIteration, rank, nextBuffer);
            nvtxRangePop();
        }

        nvtxRangePushA("CpuWork");

        // Store results for current iteration
        int rayOffset = iteration*MAX_RAYS_PER_ITERATION;
        int buffer = iteration % 2;

        for(int i = 0; i < numRaysPrevious; i++)
        {
            if(this->activeRays[rayOffset+i] && resultHitPanelIds[buffer][i] >= 0)
            {
                this->activeRays[rayOffset+i] = false;

                const TrianglePanelPair & t = this->getTriangle(resultHitPanelIds[buffer][i]);
                double hitT = resultHitDistances[buffer][i];
                Vector3 reflectedDirection = resultReflections[buffer][i];

                Result res = Result(rays[rayOffset+i], hitT, t.panel);
                results[rank].push_back(res);
                t.panel->incrementIntensity(1.0);

                if (t.panel->getParent().absorbtion < 1)
                {
                    numberOfRaysGenerated++;
                    Ray reflected (res.endPoint() + 0.00001*reflectedDirection, reflectedDirection);
                    this->newRays[rayOffset+i] = reflected;
                    this->activeRays[rayOffset+i] = true;
                    numNewRays++;
                }
            }
        }

        numRaysPrevious = numRaysIteration;
        nvtxRangePop();
    }