I'm developing a routine for automatic enhancement of scanned 35 mm slides. I'm looking for a good algorithm for increasing contrast and removing color cast. The algorithm will have to be completely automatic, since there will be thousands of images to process. These are a couple of sample images straight from the scanner, only cropped and downsized for web:

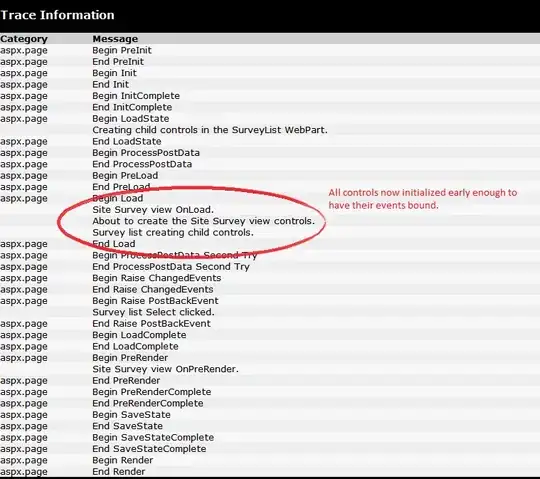

I'm using the AForge.NET library and have tried both the HistogramEqualization and ContrastStretch filters. HistogramEqualization is good for maximizing local contrast but does not produce pleasing results overall. ContrastStretch is way better, but since it stretches the histogram of each color band individually, it sometimes produces a strong color cast:

To reduce the color shift, I created a UniformContrastStretch filter myself using the ImageStatistics and LevelsLinear classes. This uses the same range for all color bands, preserving the colors at the expense of less contrast.

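    // Requires: System, System.Drawing, AForge, AForge.Imaging, AForge.Imaging.Filters

    // Find the darkest and brightest values across all three color bands
    ImageStatistics stats = new ImageStatistics(image);
    int min = Math.Min(Math.Min(stats.Red.Min, stats.Green.Min), stats.Blue.Min);
    int max = Math.Max(Math.Max(stats.Red.Max, stats.Green.Max), stats.Blue.Max);

    // Stretch every band over the same input range so the color balance is kept
    LevelsLinear levelsLinear = new LevelsLinear();
    levelsLinear.Input = new IntRange(min, max);
    Bitmap stretched = levelsLinear.Apply(image);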

The image is still quite blue though, so I created a ColorCorrection filter that first calculates the mean luminance of the image. A gamma correction value is then calculated for each color channel, so that the mean value of each color channel will equal the mean luminance. The uniform contrast stretched image has mean values R=70 G=64 B=93, the mean luminance being (70 + 64 + 93) / 3 ≈ 76. The gamma values come out as R=1.09 G=1.18 B=0.80, and the resulting, very neutral, image has mean values of R=76 G=76 B=76 as expected:
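For reference, this is roughly how the per-channel gamma described above could be found. It is only a sketch, not the actual filter code: `GammaSolver`, `MeanAfterGamma` and `SolveGamma` are hypothetical names, the input is assumed to be a 256-bin histogram of one channel, and the convention `out = 255 * (in / 255)^(1 / gamma)` is an assumption chosen so that a gamma above 1 brightens the channel, as in the numbers above. Because a gamma curve is non-linear, the value is searched for numerically rather than computed from the channel mean alone.

```csharp
using System;

public static class GammaSolver
{
    // Mean of one channel after applying out = 255 * (in / 255)^(1 / gamma),
    // computed from a 256-bin histogram. The 1/gamma convention is an assumption.
    public static double MeanAfterGamma(int[] histogram, double gamma)
    {
        double sum = 0;
        long count = 0;
        for (int i = 0; i < 256; i++)
        {
            sum += histogram[i] * 255.0 * Math.Pow(i / 255.0, 1.0 / gamma);
            count += histogram[i];
        }
        return count == 0 ? 0 : sum / count;
    }

    // Bisection search for the gamma that moves the channel mean to targetMean.
    // The corrected mean grows monotonically with gamma, so bisection converges.
    public static double SolveGamma(int[] histogram, double targetMean)
    {
        double lo = 0.1, hi = 5.0; // assumed search range
        for (int iter = 0; iter < 50; iter++)
        {
            double mid = 0.5 * (lo + hi);
            if (MeanAfterGamma(histogram, mid) < targetMean)
                lo = mid; // still too dark: a larger gamma is needed
            else
                hi = mid; // too bright (or exact): a smaller gamma is enough
        }
        return 0.5 * (lo + hi);
    }
}
```

Calling `SolveGamma` once per channel with the mean luminance as `targetMean` gives the three gamma values. If the target cannot be reached within the assumed range, the search simply ends at one of the range limits.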

Now, getting to the real problem... I suppose correcting the mean color of the image to grey is a bit too drastic and will make some images quite dull in appearance, like the second sample (first image is uniform stretched, next is the same image color corrected):

One way to perform color correction manually in a photo editing program is to sample the color of a known neutral color (white/grey/black) and adjust the rest of the image to that. But since this routine has to be completely automatic, that is not an option.

I guess I could add a strength setting to my ColorCorrection filter, so that a strength of 0.5 will move the mean values half the distance to the mean luminance. But on the other hand, some images might do best without any color correction at all.
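A minimal sketch of what such a strength setting could look like, assuming the filter works from the three channel means. `CorrectionStrength` and `TargetMeans` are hypothetical names, not part of AForge.NET or of my current filter:

```csharp
using System;

public static class CorrectionStrength
{
    // Returns the target mean for each channel: strength 0 leaves the means
    // untouched, strength 1 moves every mean all the way to the mean luminance.
    public static double[] TargetMeans(double[] channelMeans, double strength)
    {
        strength = Math.Max(0.0, Math.Min(1.0, strength)); // clamp to [0, 1]

        double luminance = 0;
        foreach (double m in channelMeans)
            luminance += m;
        luminance /= channelMeans.Length;

        double[] targets = new double[channelMeans.Length];
        for (int i = 0; i < channelMeans.Length; i++)
            targets[i] = channelMeans[i] + strength * (luminance - channelMeans[i]);
        return targets;
    }
}
```

With the means R=70 G=64 B=93 and a strength of 0.5, the targets come out at roughly R=72.8 G=69.8 B=84.3, so the image keeps about half of its blue bias.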

Any ideas for a better algorithm? Or some method to detect whether an image has a color cast or just has lots of some color, like the second sample?