`kmeans` returns an object whose `$centers` element holds the coordinates of the cluster centers. To classify a new observation, find the cluster whose center is closest to it, i.e. the one with the smallest squared Euclidean distance (the sum of squared coordinate differences):

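```r
# `km` is the kmeans() fit on freeny$inputsTrain (10 clusters here)
v <- freeny$inputsTrain[1, ]  # just an example: the first training row

# squared Euclidean distance from v to every center; pick the smallest
which.min(sapply(seq_len(nrow(km$centers)), function(x) sum((v - km$centers[x, ])^2)))
```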

The above returns 8, the same cluster to which the first row of `freeny$inputsTrain` was assigned (`km$cluster[1]`).
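The one-liner above handles a single observation. A minimal sketch of a vectorized version follows; the helper name `assign_to_center` is hypothetical, and the built-in `freeny.x` matrix (from R's `datasets` package) stands in for `freeny$inputsTrain`, with an arbitrary 3 clusters:

```r
# Hypothetical helper (not part of base R): assign each row of `newdata`
# to the k-means center with the smallest squared Euclidean distance.
assign_to_center <- function(km, newdata) {
  centers <- km$centers
  # a single observation may arrive as a plain vector; treat it as one row
  if (is.null(dim(newdata))) newdata <- matrix(newdata, nrow = 1)
  newdata <- as.matrix(newdata)
  stopifnot(ncol(newdata) == ncol(centers))
  apply(newdata, 1, function(v) {
    # t(centers) is p x k, so v is subtracted from every column (center)
    which.min(colSums((t(centers) - v)^2))
  })
}

set.seed(1)
km <- kmeans(freeny.x, centers = 3, nstart = 10)  # freeny.x: stand-in data
assign_to_center(km, freeny.x[1, ])    # cluster of a single new row
assign_to_center(km, freeny.x[1:5, ])  # several rows at once
```

The new data must have the same columns, in the same order and on the same scale, as the data used to fit `km`.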

Alternatively, you can first create a clustering and then use supervised machine learning to train a model on the cluster labels, which you then use for prediction. However, the quality of that model will depend on how well the clustering represents the structure of the data and on how much data you have. I have inspected your data with PCA (my favorite tool):

```r
library(pca3d)

# PCA on standardized variables, then an interactive 3D plot of the scores
pca <- prcomp(freeny$inputsTrain, scale. = TRUE)
pca3d(pca)
```

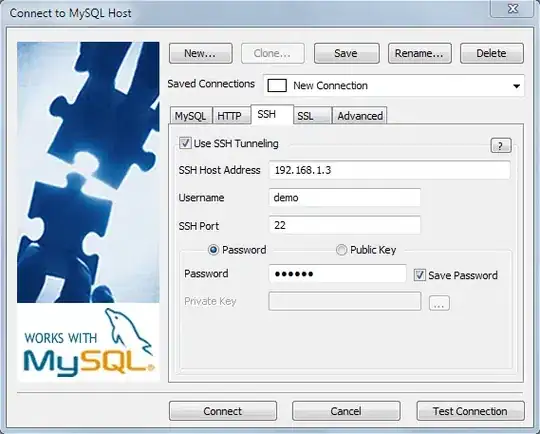

My impression is that you have at most 6-7 clear classes to work with.
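If `pca3d` is not available, a flat 2D version of the same view can be drawn in base R. This sketch also prints the share of variance captured by each component, which indicates how much of the structure a 2D or 3D view can show. Assumptions: `freeny.x` stands in for `freeny$inputsTrain`, and the 3 clusters used for coloring are an arbitrary choice:

```r
# Stand-in data: freeny.x ships with R's datasets package
pca <- prcomp(freeny.x, scale. = TRUE)

# share of total variance captured by each principal component
prop_var <- pca$sdev^2 / sum(pca$sdev^2)
print(round(prop_var, 3))

# k-means on the same unscaled data as the kmeans calls in this answer,
# used here only to color the points
set.seed(1)
km <- kmeans(freeny.x, centers = 3, nstart = 10)

# scores on the first two principal components, colored by cluster
plot(pca$x[, 1], pca$x[, 2], col = km$cluster, pch = 19,
     xlab = "PC1", ylab = "PC2")
```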

However, you should run more k-means diagnostics (elbow plots, etc.) to determine the optimal number of clusters:

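```r
# total within-cluster sum of squares for k = 1..10 clusters
wss <- sapply(1:10, function(x) {
  km <- kmeans(freeny$inputsTrain, x, iter.max = 100)
  km$tot.withinss
})

# elbow plot: look for the k where the curve stops dropping steeply
plot(1:10, wss, type = "b", xlab = "Number of clusters", ylab = "Total within-cluster SS")
```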

This plot suggests 3-4 classes as the optimum. For a more elaborate and informative approach, consult clustergrams: http://www.r-statistics.com/2010/06/clustergram-visualization-and-diagnostics-for-cluster-analysis-r-code/
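For the alternative approach described earlier (cluster first, then train a supervised model on the cluster labels), a minimal sketch could look like this. Assumptions: `freeny.x` stands in for your data, the 30-row training split and 3 clusters are arbitrary, and the classifier is 1-nearest-neighbour via `knn()` from the `class` package (a recommended package that ships with standard R installations); any other classifier could be swapped in:

```r
library(class)  # provides knn()

set.seed(1)
X <- freeny.x                      # stand-in for your training data
train_idx <- sample(nrow(X), 30)   # arbitrary split for illustration
X_train <- X[train_idx, ]
X_new   <- X[-train_idx, ]

# Step 1: unsupervised clustering of the training data
km <- kmeans(X_train, centers = 3, nstart = 10)
labels <- factor(km$cluster)

# Step 2: supervised model trained on the cluster labels,
# then used to predict the cluster of unseen rows
pred <- knn(train = X_train, test = X_new, cl = labels, k = 1)
table(pred)
```

1-NN is only a simple placeholder here; as noted above, a more flexible classifier pays off only if the clusters are well separated and you have enough data.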