--Solution 1:

Lets think of these chunks in your sentence as beads on an abacus, with each bead consisting of a partial string, the beads can be moved left or right to generate the permutations. The position of each fragment is fixed between two adjacent fragments.

In current case, the beads would be :

(more)(recen)(t)(ly)(the)(develop)(ment,)(wh)(ich)(is)(a)(po)(ten)(t)

This solves 2 subproblems:

a) Bead is a single unit,so We do not care about permutations within the bead i.e. permutations of "more" are not possible.

b) The order of the beads is constant, only the spacing between them changes. i.e. "more" will always be before "recen" and so on.

Now, generate all the permutations of these beads , which will give output like :

morerecentlythedevelopment,which is a potent

morerecentlythedevelopment,which is a poten t

morerecentlythedevelop ment, wh ich is a po tent

morerecentlythedevelop ment, wh ich is a po ten t

morerecentlythe development,whichisapotent

Then score these permutations based on how many words from your relevant dictionary they contain, most correct results can be easily filtered out.

more recently the development, which is a potent will score higher than morerecentlythedevelop ment, wh ich is a po ten t

Code which does the permutation part of the beads:

import re

def gen_abacus_perms(frags):

if len(frags) == 0:

return []

if len(frags) == 1:

return [frags[0]]

prefix_1 = "{0}{1}".format(frags[0],frags[1])

prefix_2 = "{0} {1}".format(frags[0],frags[1])

if len(frags) == 2:

nres = [prefix_1,prefix_2]

return nres

rem_perms = gen_abacus_perms(frags[2:])

res = ["{0}{1}".format(prefix_1, x ) for x in rem_perms] + ["{0} {1}".format(prefix_1, x ) for x in rem_perms] + \

["{0}{1}".format(prefix_2, x ) for x in rem_perms] + ["{0} {1}".format(prefix_2 , x ) for x in rem_perms]

return res

broken = "more recen t ly the develop ment, wh ich is a po ten t"

frags = re.split("\s+",broken)

perms = gen_abacus_perms(frags)

print("\n".join(perms))

demo:http://ideone.com/pt4PSt

--Solution#2:

I would suggest an alternate approach which makes use of text analysis intelligence already developed by folks working on similar problems and having worked on big corpus of data which depends on dictionary and grammar .e.g. search engines.

I am not well aware of such public/paid apis, so my example is based on google results.

Lets try to use google :

- You can keep putting your invalid terms to Google, for multiple passes, and keep evaluating the results for some score based on your lookup dictionary.

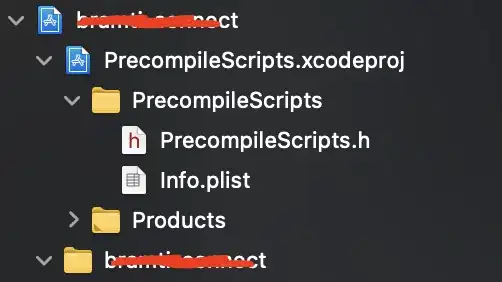

here are two relevant outputs by using 2 passes of your text :

This outout is used for a second pass :

Which gives you the conversion as ""more recently the development, which is a potent".

To verify the conversion, you will have to use some similarity algorithm and scoring to filter out invalid / not so good results.

One raw technique could be using a comparison of normalized strings using difflib.

>>> import difflib

>>> import re

>>> input = "more recen t ly the develop ment, wh ich is a po ten t "

>>> output = "more recently the development, which is a potent "

>>> input_norm = re.sub(r'\W+', '', input).lower()

>>> output_norm = re.sub(r'\W+', '', output).lower()

>>> input_norm

'morerecentlythedevelopmentwhichisapotent'

>>> output_norm

'morerecentlythedevelopmentwhichisapotent'

>>> difflib.SequenceMatcher(None,input_norm,output_norm).ratio()

1.0