I've built a huge table in Excel and am now having trouble transferring it into a PostgreSQL database. I've installed the ODBC driver, and I can open a table created in PostgreSQL from Excel. However, I can't do the reverse: create a table in Excel and open it in PostgreSQL. Can it be done this way, or is there an alternative way to create a large table with pgAdmin III? Inserting the data row by row is quite tedious.

A common way to ingest Excel data is to export from Excel to CSV, then use PostgreSQL's `COPY` command to ingest that CSV file. – bma Nov 18 '13 at 04:03

Have a look at the "Related" section to the right of this section and you'll see some candidates that will likely answer your question. – bma Nov 18 '13 at 04:27

Note to self: Save as CSV, import to Datagrip by right-clicking on schema > import from data. – Janac Meena Feb 11 '22 at 22:54

12 Answers

The typical answer is this:

In Excel, choose File > Save As, select CSV, and save your current sheet.

Transfer the file to a holding directory on the PostgreSQL server that the postgres user can access.

In PostgreSQL, run:

COPY mytable FROM '/path/to/csv/file' WITH CSV HEADER; -- must be superuser or a member of pg_read_server_files (PostgreSQL 11+)

But there are other ways to do this too. PostgreSQL is an amazingly programmable database. These include:

Write a function in PL/JavaU, PL/PerlU, or another untrusted language to access the file, parse it, and manage the structure.

Use CSV and file_fdw to access it as a pseudo-table.

Use DBI-Link and DBD::Excel.

Write your own foreign data wrapper for reading Excel files.

The possibilities are literally endless....


Thanks for your answer. I'm new to Postgres. I have a similar case, where I have to pull data from an Excel workbook into Postgres at periodic intervals, rather than push from Excel to the Postgres database. If I have to pull data from Excel into Postgres, would you run it as a separate service that runs periodically on the Postgres server? – alpha_989 Sep 29 '18 at 18:46

Is there a recommended link for `DBD::Excel` or a [foreign data wrapper](https://www.postgresql.org/docs/current/ddl-foreign-data.html) that works well? – Peter Krauss Jun 02 '20 at 23:04

Worth noting in the first set of steps that the `mytable` table needs to be created with all the necessary columns before running the `COPY` command. – Steve Chambers Mar 07 '22 at 14:42
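Since `COPY` needs the target table to exist first, its definition can be generated from the CSV header row. This is only a minimal Python sketch: it assumes every column can start out as `text` and that the header holds usable column names. The helper names `quote_ident` and `create_table_sql` and the sample data are hypothetical.

```python
import csv
import io


def quote_ident(name: str) -> str:
    """Quote a PostgreSQL identifier, doubling any embedded double quotes."""
    return '"' + name.replace('"', '""') + '"'


def create_table_sql(table: str, csv_file) -> str:
    """Build a CREATE TABLE statement from the header row of an open CSV file.

    Every column is declared as text (an assumption of this sketch);
    the types can be tightened with ALTER TABLE after loading.
    """
    header = next(csv.reader(csv_file))
    columns = ",\n".join(f"    {quote_ident(col.strip())} text" for col in header)
    return f"CREATE TABLE {quote_ident(table)} (\n{columns}\n);"


# Hypothetical sample standing in for the file exported from Excel.
sample = io.StringIO("id,name,unit price\n1,widget,9.99\n")
print(create_table_sql("mytable", sample))
```

Quoting the identifiers keeps headers with spaces usable, at the cost of making the column names case-sensitive.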

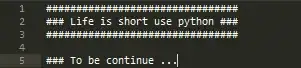

For Python, you could use openpyxl, which handles the Excel 2010 and newer file formats (xlsx).

Al Sweigart has a full tutorial on working with Excel spreadsheets in Automate the Boring Stuff. It is very in-depth, and the whole book and the accompanying Udemy course are great resources.

Adapted from his example (newer openpyxl releases deprecate `get_sheet_names()` and `get_sheet_by_name()` in favour of `sheetnames` and indexing):

>>> import openpyxl
>>> wb = openpyxl.load_workbook('example.xlsx')
>>> wb.sheetnames
['Sheet1', 'Sheet2', 'Sheet3']
>>> sheet = wb['Sheet3']
>>> sheet
<Worksheet "Sheet3">

Once you have this access, you can use psycopg to pass the data to Postgres as you normally would.

The python-excel site links to a list of Python resources; xlwings also provides a large array of features for using Python in place of VBA in Excel.
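To make the openpyxl-to-psycopg step concrete, here is a rough sketch. It assumes the target table already exists, that row 1 of the sheet holds the column names, and that the connection comes from psycopg2 (any DB-API driver using `%s` placeholders should behave the same way). The function names `insert_sql` and `load_sheet` are hypothetical.

```python
def insert_sql(table, columns):
    """Return a parameterized INSERT using DB-API %s placeholders.

    `table` is assumed to be a trusted identifier; column names are quoted.
    """
    cols = ", ".join('"' + c.replace('"', '""') + '"' for c in columns)
    marks = ", ".join(["%s"] * len(columns))
    return f"INSERT INTO {table} ({cols}) VALUES ({marks})"


def load_sheet(conn, xlsx_path, sheet_name, table):
    """Copy one worksheet into an existing table; row 1 is the header."""
    import openpyxl  # third-party; imported here so insert_sql stays stdlib-only

    wb = openpyxl.load_workbook(xlsx_path, read_only=True)
    rows = wb[sheet_name].iter_rows(values_only=True)
    header = [str(h) for h in next(rows)]
    with conn.cursor() as cur:
        cur.executemany(insert_sql(table, header), list(rows))
    conn.commit()


print(insert_sql("mytable", ["id", "name"]))

# Possible usage, assuming psycopg2 is installed and the database is reachable:
#   import psycopg2
#   conn = psycopg2.connect(dbname="mydb")
#   load_sheet(conn, "example.xlsx", "Sheet3", "mytable")
```

For very large sheets, writing the rows to CSV and using `COPY` is likely to be faster than row-wise inserts.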


You can also use the psql console to execute \copy, which reads the file on the client, so there is no need to transfer it to the PostgreSQL server machine. The syntax is the same:

\copy mytable [ ( column_list ) ] FROM '/path/to/csv/file' WITH CSV HEADER
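If the import has to be scripted, the same \copy can be handed to psql through its `-c` option. The following is a small Python sketch, assuming `psql` is on the PATH and connection settings come from the environment; `psql_copy_command` is a hypothetical helper, and the database, table, and path are placeholders.

```python
def psql_copy_command(dbname, table, csv_path):
    """Build the argv list for a client-side \\copy run through psql -c."""
    if "'" in csv_path:
        # Keep the sketch simple: refuse paths that would need extra quoting.
        raise ValueError("single quotes in the path are not handled here")
    meta = f"\\copy {table} FROM '{csv_path}' WITH CSV HEADER"
    return ["psql", "-d", dbname, "-c", meta]


cmd = psql_copy_command("mydb", "mytable", "/path/to/csv/file")
print(cmd)

# To actually run it:
#   import subprocess
#   subprocess.run(cmd, check=True)
```

Passing the arguments as a list avoids shell quoting problems with the backslash and the quoted path.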


A method that I use is to load the table into R as a data.frame, then use dbWriteTable to push it to PostgreSQL. These two steps are shown below.

Load Excel data into R

R's data.frame objects are database-like, where named columns have explicit types, such as text or numbers. There are several ways to get a spreadsheet into R, such as XLConnect. However, a really simple method is to select the range of the Excel table (including the header), copy it (i.e. CTRL+C), then in R use this command to get it from the clipboard:

d <- read.table("clipboard", header=TRUE, sep="\t", quote="\"", na.strings="", as.is=TRUE)

If you have RStudio, you can easily view the d object to make sure it is as expected.

Push it to PostgreSQL

Ensure you have RPostgreSQL installed from CRAN, then make a connection and send the data.frame to the database:

library(RPostgreSQL)

conn <- dbConnect(PostgreSQL(), dbname="mydb")

dbWriteTable(conn, "some_table_name", d)

Now some_table_name should appear in the database.

Some common clean-up steps can be done from pgAdmin or psql:

ALTER TABLE some_table_name RENAME "row.names" TO id;

ALTER TABLE some_table_name ALTER COLUMN id TYPE integer USING id::integer;

ALTER TABLE some_table_name ADD PRIMARY KEY (id);


As explained here: http://www.postgresonline.com/journal/categories/journal/archives/339-OGR-foreign-data-wrapper-on-Windows-first-taste.html

With the ogr_fdw module, it's possible to open an Excel sheet as a foreign table in PostgreSQL and query it directly like any regular table. This is useful for repeatedly reading data from a regularly updated spreadsheet.

To do this, the header row in your spreadsheet must be clean: the current ogr_fdw driver can't deal with wide characters, newlines, and the like. With such characters in a header, you will probably not be able to reference the column in PostgreSQL because of encoding issues. (This is the major reason I can't use this wonderful extension.)

The pre-built ogr_fdw binaries for Windows are located at http://winnie.postgis.net/download/windows/pg96/buildbot/extras/ (change the version number in the link to download the corresponding build). Extract the archive into the PostgreSQL folder, overwriting the sub-folders of the same name, and restart PostgreSQL. Before the test drive, the module needs to be installed by executing:

CREATE EXTENSION ogr_fdw;

Usage in brief:

Use ogr_fdw_info.exe to probe the Excel file for its list of sheet names:

ogr_fdw_info -s "C:/excel.xlsx"

Use "ogr_fdw_info.exe -l" to probe an individual sheet and generate the table definition code:

ogr_fdw_info -s "C:/excel.xlsx" -l "sheetname"

Execute the generated definition code in PostgreSQL; a foreign table is created and mapped to your Excel file. It can be queried like a regular table.

This is especially useful if you have many small files with the same table structure: changing the path and name in the definition and re-running it is enough.

This plugin supports both XLSX and XLS files. According to the documentation, it is also possible to write data back into the spreadsheet file, but all the fancy formatting in your Excel file will be lost, because the file is recreated on write.

If the Excel file is huge, this will not work, because the data is loaded all at once; that is another reason I didn't use this extension. However, the extension also supports an ODBC interface, so it should be possible to use Windows' ODBC Excel driver to create an ODBC source for the Excel file and then use ogr_fdw, or any other ODBC foreign data wrapper for PostgreSQL, to query this intermediate ODBC source. This should be fairly stable.

The downside is that you can't change the file location or name easily from within PostgreSQL, as you can in the previous approach.

A friendly reminder: file permissions apply to this FDW extension. Since it is loaded into the PostgreSQL service, the service account must have access privileges to the Excel files.


It is possible using ogr2ogr (the executable path is quoted because it contains a space):

"C:\Program Files\PostgreSQL\12\bin\ogr2ogr.exe" -f "PostgreSQL" PG:"host=someip user=someuser dbname=somedb password=somepw" C:/folder/excelfile.xlsx -nln newtablenameinpostgres -oo AUTODETECT_TYPE=YES

(I'm not sure whether ogr2ogr is included in the PostgreSQL installation or whether I got it with the PostGIS extension.)


Great, but unfortunately ogr2ogr will not use the Excel column types, so a character ID column whose first rows look like integers will be converted badly. Perhaps some additional -lco could save the day? – Jan May 19 '21 at 09:43
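The pitfall described in this comment, guessing a type from the first few rows, can be avoided by checking every value before committing to a numeric type. Below is a small Python sketch of such a conservative check. It is not how ogr2ogr works: `guess_column_type` is a hypothetical helper, and it ignores integer range limits.

```python
import re

# Plain integers only: optional minus sign, no leading zeros.
_INT = re.compile(r"-?(0|[1-9][0-9]*)")


def guess_column_type(values):
    """Return "integer" only if every non-empty value is a plain integer."""
    non_empty = [v for v in values if v != ""]
    if non_empty and all(_INT.fullmatch(v) for v in non_empty):
        return "integer"
    return "text"


print(guess_column_type(["1", "2", "3"]))    # integer
print(guess_column_type(["1", "2", "A17"]))  # text
print(guess_column_type(["001", "002"]))     # text, leading zeros would be lost
```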

I recently discovered https://sqlizer.io. It creates insert statements from an Excel file and supports MySQL and PostgreSQL. I'm not sure whether it supports large files, though.


I have used Excel/PowerPivot to create the PostgreSQL insert statements. It seems like overkill, except when you need to do it over and over again. Once the data is in the PowerPivot window, I add successive columns with concatenate statements to 'build' the insert statement. I create a flattened pivot table with that last and final column, then copy and paste the resulting insert statements into my EXISTING PostgreSQL table with pgAdmin.

Example two-column table (my table has 30 columns, into which I import successive contents over and over with the same Excel/PowerPivot workbook):

Column1 {a,b,...} Column2 {1,2,...}

In PowerPivot I add calculated columns with the following commands:

Calculated Column 1 has "insert into table_name values ('"

Calculated Column 2 has CONCATENATE([Calculated Column 1],CONCATENATE([Column1],"','"))

...until you get to the last column and you need to terminate the insert statement:

Calculated Column 3 has CONCATENATE([Calculated Column 2],CONCATENATE([Column2],"');"))

Then in PowerPivot I add a flattened pivot table, which gives me all of the insert statements; I just copy and paste them into pgAdmin.

Resulting insert statements:

insert into table_name values ('a','1');

insert into table_name values ('b','2');

insert into table_name values ('c','3');

NOTE: If you are familiar with the PowerPivot CONCATENATE function, you know that it can only handle two arguments (nuts). It would be nice if it allowed more.
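The same insert statements can also be generated outside Excel from a CSV export. Here is a minimal Python sketch, assuming the first CSV row is a header and that every value is loaded as a quoted string, as in the example above. `sql_literal` and `insert_statements` are hypothetical helper names, and the sample data mirrors the two-column table.

```python
import csv
import io


def sql_literal(value: str) -> str:
    """Quote a value as a SQL string literal, doubling single quotes."""
    return "'" + value.replace("'", "''") + "'"


def insert_statements(table: str, csv_file):
    """Yield one INSERT per data row of an open CSV file, skipping the header."""
    reader = csv.reader(csv_file)
    next(reader)  # header row
    for row in reader:
        values = ",".join(sql_literal(v) for v in row)
        yield f"insert into {table} values ({values});"


# Hypothetical sample matching the two-column example.
sample = io.StringIO("Column1,Column2\na,1\nb,2\nc,3\n")
for statement in insert_statements("table_name", sample):
    print(statement)
```

Unlike plain concatenation, doubling the single quotes keeps values such as O'Brien from breaking the statement.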


You can load the Excel file content by writing Java code with the Apache POI library (https://poi.apache.org/). The library is designed for working with Microsoft Office data, including Excel.

I recently created an application based on this technology that helps you load Excel files into a Postgres database. The application is available at http://www.abespalov.com/. It has been tested only on Windows, but should work on Linux as well.

The application automatically creates the necessary tables with the same columns as in the Excel files and populates the tables with their content. You can export several files in parallel, and you can skip the step of converting the files to CSV. The application handles the xls and xlsx formats.

The overall application stages are:

- Load the Excel file content. Here is the code, which depends on the file extension:

{
    fileExtension = FilenameUtils.getExtension(inputSheetFile.getName());
    // .xlsx files are opened as OPC packages; anything else is treated as legacy .xls
    if (fileExtension.equalsIgnoreCase("xlsx")) {
        workbook = createWorkbook(openOPCPackage(inputSheetFile));
    } else {
        workbook = createWorkbook(openNPOIFSFileSystemPackage(inputSheetFile));
    }
    // only the first sheet of the workbook is read
    sheet = workbook.getSheetAt(0);
}

- Establish Postgres JDBC connection

- Create a Postgres table

- Iterate over the sheet and insert rows into the table. Here is a piece of the Java code:

{
    Iterator<Row> rowIterator = InitInputFilesImpl.sheet.rowIterator();
    // skip the header row
    if (rowIterator.hasNext()) {
        rowIterator.next();
    }
    while (rowIterator.hasNext()) {
        Row row = rowIterator.next();
        // inserting rows
    }
}

All the Java code for the application, created for exporting Excel to Postgres, is available at https://github.com/palych-piter/Excel2DB.


The post below mentioned DataGrip; pgAdmin has the exact same feature.

I created a table with columns that matched the headers in my CSV file, then right-clicked on the table and chose Import/Export Data.

Then adjust the options and columns. If there is an error, try removing all the columns from the columns list and importing everything.


The simplest answer is to use the psql command: it's free and is included with PostgreSQL.

psql -U postgres -p 5432 -f sql-command-file.sql



The simple answer is all that is needed, and it is what I use the most with Postgres. The command line is EXTREMELY powerful and offers simple commands for very complex SQL actions. – user17368948 Jan 31 '22 at 18:05

You can do that easily with DataGrip.

- First, save your Excel file in CSV format: open the Excel file, then use Save As and choose CSV.

- In DataGrip, create the table structure according to the CSV file. I suggest naming the columns after the Excel columns.

- Right-click the table name in your database's list of tables, click Import Data from File, then select the converted CSV file.
