I've been experimenting with the GPU support in MATLAB (R2014a). The notebook I'm using to test my code has an NVIDIA 840M built in.

Since I'm new to GPU computing with MATLAB, I started with a few simple examples and observed strange scaling behavior. Instead of increasing the size of my problem, I simply put a for loop around my computation. I expected the computation time to scale linearly with the number of iterations, since the problem size itself does not increase. This holds for smaller numbers of iterations, but at a certain point the time no longer scales as expected; instead I observe a huge jump in computation time. Beyond that point, the time scales as expected again.

The code example started from a random walk simulation, but I tried to produce an example that is easy and still shows the problem.

Here's what my code does. I initialize two matrices as sin(alpha) and cos(alpha). I then loop over the number of iterations, from 2**1 to 2**15. Inside that loop, I repeat the computation sin(alpha)^2 and cos(alpha)^2 and add the two up (this was just to check the result). I perform this calculation as often as the number of iterations suggests.

    function gpu_scale
    close all
    NP = 10000;
    NT = 1000;
    ALPHA = rand(NP,NT,'single')*2*pi;
    SINALPHA = sin(ALPHA);
    COSALPHA = cos(ALPHA);
    GSINALPHA = gpuArray(SINALPHA); % move array to gpu
    GCOSALPHA = gpuArray(COSALPHA);
    PMAX = 15;
    for P = 1:PMAX
        for i = 1:2^P
            GX = GSINALPHA.^2;
            GY = GCOSALPHA.^2;
            GZ = GX+GY;
        end
    end
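Since the sum is only there to check the result, here is a minimal sketch of how that check could look. The smaller array size and the variable name `maxErr` are my own assumptions for illustration and are not part of the code above.

```matlab
% Sketch: confirm that sin^2 + cos^2 comes out close to 1 on the GPU.
% The array size is deliberately small (an assumption, not the size used above).
ALPHA = rand(1000, 100, 'single') * 2*pi;
GZ = gpuArray(sin(ALPHA)).^2 + gpuArray(cos(ALPHA)).^2;

% gather copies the result back to host memory so it can be inspected there.
maxErr = max(abs(gather(GZ(:)) - 1));
fprintf('largest deviation from 1: %g\n', maxErr);
```

With single precision, the deviation should be a small multiple of `eps('single')` rather than exactly zero.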

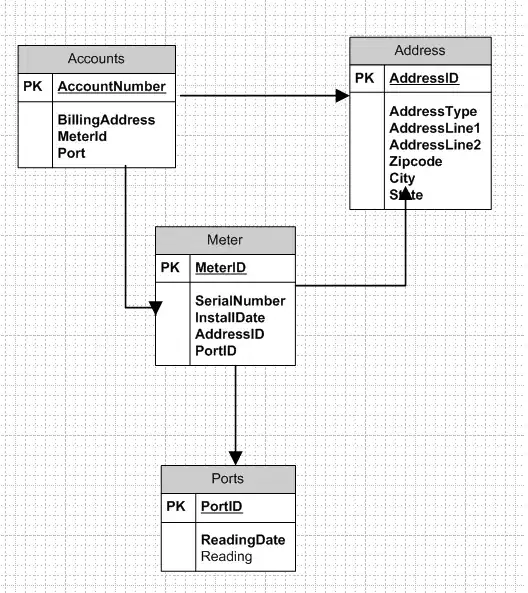

The plot shows the computation time on a log-log scale, where I double the number of iterations at each step. The jump occurs when doubling from 1024 to 2048 iterations.

The initial bump for two iterations might be due to initialization and is not really relevant anyhow.

I see no reason for the jump between 2**10 and 2**11 computations, since the computation time should only depend on the number of iterations.

My question: Can somebody explain this behavior to me? What is happening on the software/hardware side that explains this jump?

Thanks in advance!

EDIT: As suggested by Divakar, I changed the way I time my code. I wasn't sure I was using gputimeit correctly. However, MathWorks suggests another possible way, namely:

    gd = gpuDevice();
    tic
    % the computation
    wait(gd);
    Time = toc;

Measured this way, the computation takes significantly longer, but I no longer observe the jump from the previous plot. I added the CPU performance for comparison and kept both GPU timings (wait / no wait), which can be seen in the following plot.

It seems that the observed jump "corrects" the timing toward the case where I used wait. If I understand the problem correctly, the good performance in the no-wait case is due to the fact that we do not wait for the GPU to finish completely. However, I still don't see an explanation for the jump.
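To make the comparison concrete, here is a rough sketch that puts the timing variants side by side: tic/toc without wait, tic/toc with wait(gd), and gputimeit. The function names `gpu_timing_sketch` and `run_loop`, the reduced problem size, and the smaller PMAX are assumptions I made to keep it short; this is not the exact code behind the plots.

```matlab
function T = gpu_timing_sketch
% Hypothetical helper: times the same GPU loop in three ways.
% Returns one row per P with columns [iterations, noWait, withWait, gputimeit].
NP = 1000; NT = 1000;          % smaller than in the question (assumption)
PMAX = 8;                      % fewer doublings than in the question (assumption)
ALPHA = rand(NP, NT, 'single') * 2*pi;
GS = gpuArray(sin(ALPHA));
GC = gpuArray(cos(ALPHA));
gd = gpuDevice();

tNoWait = zeros(1, PMAX);
tWait   = zeros(1, PMAX);
tTimeit = zeros(1, PMAX);

for P = 1:PMAX
    n = 2^P;

    % Variant 1: no synchronisation before toc, so toc may be reached
    % while the GPU is still working on queued operations.
    wait(gd);                  % start from an idle device
    tic;
    run_loop(GS, GC, n);
    tNoWait(P) = toc;

    % Variant 2: block until the GPU has finished before stopping the clock.
    wait(gd);                  % drain work left over from variant 1
    tic;
    run_loop(GS, GC, n);
    wait(gd);
    tWait(P) = toc;

    % Variant 3: gputimeit takes a function handle with no input arguments
    % and takes care of synchronisation itself.
    tTimeit(P) = gputimeit(@() run_loop(GS, GC, n));
end

T = [2.^(1:PMAX); tNoWait; tWait; tTimeit].';
end

function GZ = run_loop(GS, GC, n)
% Repeats the element-wise computation from the question n times.
for i = 1:n
    GZ = GS.^2 + GC.^2;
end
end
```

Calling `T = gpu_timing_sketch();` and plotting columns 2 to 4 against column 1 with `loglog` should give curves comparable to the wait / no wait timings described above, though the absolute numbers depend on the device.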

Any ideas?