I have found a solution that gives reasonable results for a variety of inputs.

I start by fitting a model -- once to the low ends of the constraints, and again to the high ends.

I'll refer to the mean of these two fitted functions as the "ideal function".

I use this ideal function to extrapolate to the left and to the right of where the constraints end, as well as to interpolate between any gaps in the constraints.

I compute values for the ideal function at regular intervals, including all the constraints, from where the function is nearly zero on the left to where it's nearly one on the right.

At the constraints, I clip these values as necessary to satisfy the constraints.

Finally, I construct an interpolating function that goes through these values.

My Mathematica implementation follows.

First, a couple helper functions:

(* Distance from x to the nearest member of list l. *)

listdist[x_, l_List] := Min[Abs[x - #] & /@ l]

(* Return a value x for the variable var such that expr/.var->x is at least (or

at most, if dir is -1) t. *)

invertish[expr_, var_, t_, dir_:1] := Module[{x = dir},

While[dir*(expr /. var -> x) < dir*t, x *= 2];

x]

And here's the main function:

(* Return a non-decreasing interpolating function that maps from the

reals to [0,1] and that is as close as possible to expr[var] without

violating the given constraints (a list of {x,ymin,ymax} triples).

The model, expr, will have free parameters, params, so first do a

model fit to choose the parameters to satisfy the constraints as well

as possible. *)

cfit[constraints_, expr_, params_, var_] :=

Block[{xlist,bots,tops,loparams,hiparams,lofit,hifit,xmin,xmax,gap,aug,bests},

xlist = First /@ constraints;

bots = Most /@ constraints; (* bottom points of the constraints *)

tops = constraints /. {x_, _, ymax_} -> {x, ymax};

(* fit a model to the lower bounds of the constraints, and

to the upper bounds *)

loparams = FindFit[bots, expr, params, var];

hiparams = FindFit[tops, expr, params, var];

lofit[z_] = (expr /. loparams /. var -> z);

hifit[z_] = (expr /. hiparams /. var -> z);

(* find x-values where the fitted function is very close to 0 and to 1 *)

{xmin, xmax} = {

Min@Append[xlist, invertish[expr /. hiparams, var, 10^-6, -1]],

Max@Append[xlist, invertish[expr /. loparams, var, 1-10^-6]]};

(* the smallest gap between x-values in constraints *)

gap = Min[(#2 - #1 &) @@@ Partition[Sort[xlist], 2, 1]];

(* augment the constraints to fill in any gaps and extrapolate so there are

constraints everywhere from where the function is almost 0 to where it's

almost 1 *)

aug = SortBy[Join[constraints, Select[Table[{x, lofit[x], hifit[x]},

{x, xmin,xmax, gap}],

listdist[#[[1]],xlist]>gap&]], First];

(* pick a y-value from each constraint that is as close as possible to

the mean of lofit and hifit *)

bests = ({#1, Clip[(lofit[#1] + hifit[#1])/2, {#2, #3}]} &) @@@ aug;

Interpolation[bests, InterpolationOrder -> 3]]

For example, we can fit to a lognormal, normal, or logistic function:

g1 = cfit[constraints, CDF[LogNormalDistribution[mu,sigma], z], {mu,sigma}, z]

g2 = cfit[constraints, CDF[NormalDistribution[mu,sigma], z], {mu,sigma}, z]

g3 = cfit[constraints, 1/(1 + c*Exp[-k*z]), {c,k}, z]

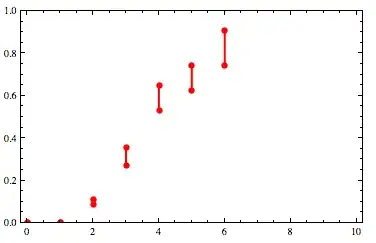

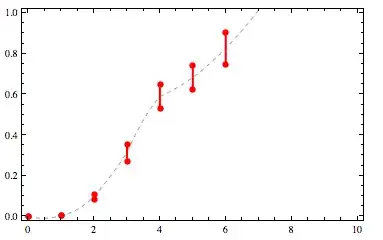

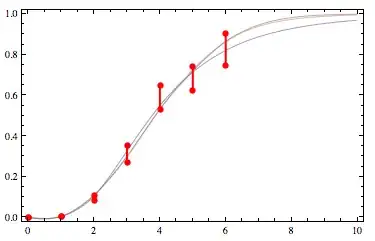

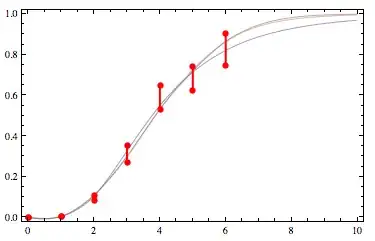

Here's what those look like for my original list of example constraints:

(source: yootles.com)

The normal and logistic are nearly on top of each other and the lognormal is the blue curve.

These are not quite perfect.

In particular, they aren't quite monotone.

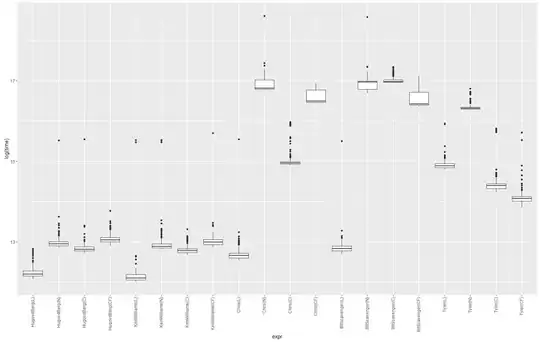

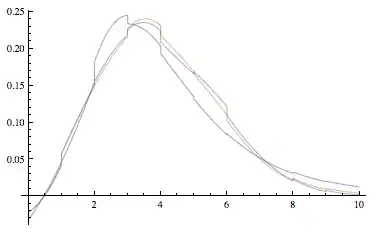

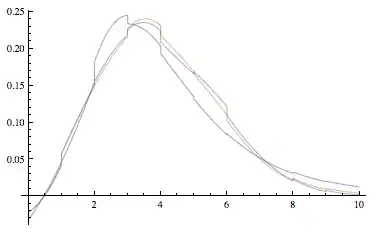

Here's a plot of the derivatives:

Plot[{g1'[x], g2'[x], g3'[x]}, {x, 0, 10}]

(source: yootles.com)

That reveals some lack of smoothness as well as the slight non-monotonicity near zero.

I welcome improvements on this solution!