I'm testing how reading multiple streams of data affects a CPUs caching performance. I'm using the following code to benchmark this. The benchmark reads integers stored sequentially in memory and writes partial sums back sequentially. The number of sequential blocks that are read from is varied. Integers from the blocks are read in a round-robin manner.

#include <iostream>

#include <vector>

#include <chrono>

using std::vector;

void test_with_split(int num_arrays) {

int num_values = 100000000;

// Fix up the number of values. The effect of this should be insignificant.

num_values -= (num_values % num_arrays);

int num_values_per_array = num_values / num_arrays;

// Initialize data to process

auto results = vector<int>(num_values);

auto arrays = vector<vector<int>>(num_arrays);

for (int i = 0; i < num_arrays; ++i) {

arrays.emplace_back(num_values_per_array);

}

for (int i = 0; i < num_values; ++i) {

arrays[i%num_arrays].emplace_back(i);

results.emplace_back(0);

}

// Try to clear the cache

const int size = 20*1024*1024; // Allocate 20M. Set much larger then L2

char *c = (char *)malloc(size);

for (int i = 0; i < 100; i++)

for (int j = 0; j < size; j++)

c[j] = i*j;

free(c);

auto start = std::chrono::high_resolution_clock::now();

// Do the processing

int sum = 0;

for (int i = 0; i < num_values; ++i) {

sum += arrays[i%num_arrays][i/num_arrays];

results[i] = sum;

}

std::cout << "Time with " << num_arrays << " arrays: " << std::chrono::duration_cast<std::chrono::milliseconds>(std::chrono::high_resolution_clock::now() - start).count() << " ms\n";

}

int main() {

int num_arrays = 1;

while (true) {

test_with_split(num_arrays++);

}

}

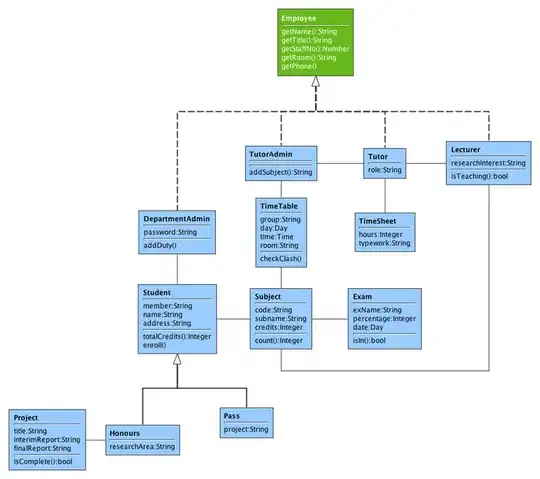

Here are the timings for splitting 1-80 ways on an Intel Core 2 Quad CPU Q9550 @ 2.83GHz:

The bump in the speed soon after 8 streams makes sense to me, as the processor has an 8-way associative L1 cache. The 24-way associative L2 cache in turn explains the bump at 24 streams. These especially hold if I'm getting the same effects as in Why is one loop so much slower than two loops?, where multiple big allocations always end up in the same associativity set. To compare I've included at the end timings when the allocation is done in one big block.

However, I don't fully understand the bump from one stream to two streams. My own guess would be that it has something to do with prefetching to L1 cache. Reading the Intel 64 and IA-32 Architectures Optimization Reference Manual it seems that the L2 streaming prefetcher supports tracking up to 32 streams of data, but no such information is given for the L1 streaming prefetcher. Is the L1 prefetcher unable to keep track of multiple streams, or is there something else at play here?

Background

I'm investigating this because I want to understand how organizing entities in a game engine as components in the structure-of-arrays style affects performance. For now it seems that the data required by a transformation being in two components vs. it being in 8-10 components won't matter much with modern CPUs. However, the testing above suggests that sometimes it might make sense to avoid splitting some data into multiple components if that would allow a "bottlenecking" transformation to only use one component, even if this means that some other transformation would have to read data it is not interested in.

Allocating in one block

Here are the timings if instead allocating multiple blocks of data only one is allocated and accessed in a strided manner. This does not change the bump from one stream to two, but I've included it for sake of completeness.

And here is the modified code for that:

void test_with_split(int num_arrays) {

int num_values = 100000000;

num_values -= (num_values % num_arrays);

int num_values_per_array = num_values / num_arrays;

// Initialize data to process

auto results = vector<int>(num_values);

auto array = vector<int>(num_values);

for (int i = 0; i < num_values; ++i) {

array.emplace_back(i);

results.emplace_back(0);

}

// Try to clear the cache

const int size = 20*1024*1024; // Allocate 20M. Set much larger then L2

char *c = (char *)malloc(size);

for (int i = 0; i < 100; i++)

for (int j = 0; j < size; j++)

c[j] = i*j;

free(c);

auto start = std::chrono::high_resolution_clock::now();

// Do the processing

int sum = 0;

for (int i = 0; i < num_values; ++i) {

sum += array[(i%num_arrays)*num_values_per_array+i/num_arrays];

results[i] = sum;

}

std::cout << "Time with " << num_arrays << " arrays: " << std::chrono::duration_cast<std::chrono::milliseconds>(std::chrono::high_resolution_clock::now() - start).count() << " ms\n";

}

Edit 1

I made sure that the 1 vs 2 splits difference was not due to the compiler unrolling the loop and optimizing the first iteration differently. Using the __attribute__ ((noinline)) I made sure the work function is not inlined into the main function. I verified that it did not happen by looking at the generated assembly. The timings after these changed were the same.