Under the covers, UIGraphicsBeginImageContext creates a CGBitmapContext. You can get access to the context's pixel storage using CGBitmapContextGetData. The problem with this approach is that the UIGraphicsBeginImageContext function chooses the byte order and color space used to store the pixel data. Those choices (particularly the byte order) could change in future versions of iOS (or even on different devices).

So instead, let's create the context directly with CGBitmapContextCreate, so we can be sure of the byte order and color space.

In my playground, I've added a test image named pic@2x.jpeg. Because of the @2x suffix, UIImage(named:) loads it with a scale of 2.

```swift
import XCPlayground
import UIKit

let image = UIImage(named: "pic.jpeg")!
XCPCaptureValue("image", value: image)
```

Here's how we create the bitmap context, taking the image scale into account (which you didn't do in your question):

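```swift
let rowCount = Int(image.size.height * image.scale)
let columnCount = Int(image.size.width * image.scale)
// Bytes per row: 4 bytes per pixel, rounded up to a multiple of 64.
let stride = 64 * ((columnCount * 4 + 63) / 64)
// 8 bits per component, device RGB, alpha in the low byte of each
// 32-bit pixel, and each 32-bit pixel stored little-endian.
let context = CGBitmapContextCreate(nil, columnCount, rowCount, 8, stride,
    CGColorSpaceCreateDeviceRGB(),
    CGBitmapInfo.ByteOrder32Little.rawValue |
    CGImageAlphaInfo.PremultipliedLast.rawValue)
```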
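As a quick check of the `stride` arithmetic, here is the same rounding pulled out into a standalone function. This is a sketch in current Swift syntax (not the Swift 2 used in this answer), and `alignedBytesPerRow` is a hypothetical name, not a Core Graphics API. Any bytes between `columnCount * 4` and `stride` are row padding, not image data.

```swift
// Hypothetical helper: the `stride` expression above as a function.
// Rounds a row's byte count up to the next multiple of `alignment`.
func alignedBytesPerRow(columnCount: Int, bytesPerPixel: Int = 4, alignment: Int = 64) -> Int {
    let unpadded = columnCount * bytesPerPixel
    return alignment * ((unpadded + alignment - 1) / alignment)
}

print(alignedBytesPerRow(columnCount: 100))  // 448 (400 bytes of pixels + 48 of padding)
print(alignedBytesPerRow(columnCount: 16))   // 64 (no padding needed)
print(alignedBytesPerRow(columnCount: 17))   // 128 (68 bytes of pixels + 60 of padding)
```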

Next, we adjust the coordinate system to match what UIGraphicsBeginImageContextWithOptions would do, so that we can draw the image correctly and easily:

```swift
CGContextTranslateCTM(context, 0, CGFloat(rowCount))
CGContextScaleCTM(context, image.scale, -image.scale)

UIGraphicsPushContext(context!)
image.drawAtPoint(CGPointZero)
UIGraphicsPopContext()
```

Note that UIImage.drawAtPoint takes image.imageOrientation into account; CGContextDrawImage does not.
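To see what the translate-then-scale pair does, the sketch below applies the same transform to a point by hand, in current Swift syntax and without Core Graphics. `userToDevice` is a hypothetical helper. It assumes a bitmap context's default coordinate system, whose origin is at the bottom-left with y increasing upward, so device y = `rowCount` is the top edge of the bitmap.

```swift
// Hypothetical helper: applies by hand the two CTM changes made above.
// Core Graphics applies the most recently concatenated transform (the scale)
// to user-space points first, then the earlier translation.
func userToDevice(x: Double, y: Double, scale: Double, rowCount: Int) -> (x: Double, y: Double) {
    let scaledX = x * scale        // CGContextScaleCTM(context, scale, -scale)
    let scaledY = y * -scale
    return (scaledX, scaledY + Double(rowCount))  // CGContextTranslateCTM(context, 0, rowCount)
}

// A 100 x 50 point image at scale 2 gives a 200 x 100 pixel context (rowCount = 100).
let topLeft = userToDevice(x: 0, y: 0, scale: 2, rowCount: 100)         // (0.0, 100.0)
let bottomRight = userToDevice(x: 100, y: 50, scale: 2, rowCount: 100)  // (200.0, 0.0)
```

UIKit's top-left point lands on the top edge of the bitmap and its bottom-right point on the bottom-right pixel corner, which is the same mapping UIGraphicsBeginImageContextWithOptions sets up.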

Now let's get a pointer to the raw pixel data from the context. The code is clearer if we define a structure to access the individual components of each pixel:

```swift
struct Pixel {
    var a: UInt8
    var b: UInt8
    var g: UInt8
    var r: UInt8
}

let pixels = UnsafeMutablePointer<Pixel>(CGBitmapContextGetData(context))
```

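Note that the order of the Pixel members matches the specific bits I set in the bitmapInfo argument to CGBitmapContextCreate. PremultipliedLast puts alpha in the least significant byte of each 32-bit pixel, and ByteOrder32Little stores that 32-bit value least significant byte first, so the bytes in memory are alpha, blue, green, red.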
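The byte layout can be checked without Core Graphics at all. This sketch (current Swift syntax, with plain integers standing in for a real context) packs one pixel with red in the high byte and alpha in the low byte, stores it little-endian, and reads the four bytes back through the same `Pixel` struct.

```swift
struct Pixel {
    var a: UInt8
    var b: UInt8
    var g: UInt8
    var r: UInt8
}

// "Alpha last": alpha occupies the least significant byte of the 32-bit pixel.
let r: UInt32 = 0x11, g: UInt32 = 0x22, b: UInt32 = 0x33, a: UInt32 = 0x44
let packed = (r << 24) | (g << 16) | (b << 8) | a  // 0x11223344

// ByteOrder32Little: store the 32-bit value least significant byte first.
var littleEndian = packed.littleEndian
let bytes = withUnsafeBytes(of: &littleEndian) { Array($0) }
print(bytes)  // [68, 51, 34, 17], i.e. 0x44, 0x33, 0x22, 0x11

// Reading those bytes as a Pixel puts each component in the right field.
let pixel = bytes.withUnsafeBytes { $0.load(as: Pixel.self) }
```

Because `Pixel` contains only `UInt8` fields, its alignment is 1, so loading it from an arbitrary byte buffer is safe here.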

Now we can loop over the pixels. Note that we use rowCount and columnCount, computed above, to visit all the pixels, regardless of the image scale:

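```swift
for y in 0 ..< rowCount {
    if y % 2 == 0 {
        for x in 0 ..< columnCount {
            // stride is in bytes; dividing by the pixel size turns it into a
            // row length in pixels, padding included.
            let pixel = pixels.advancedBy(y * stride / sizeof(Pixel.self) + x)
            pixel.memory.r = 255
        }
    }
}
```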
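The same index arithmetic can be exercised on an ordinary array. In this sketch (current Swift syntax), a `[Pixel]` array is a hypothetical stand-in for the context's storage, sized so each row occupies `stride` bytes. Because the context uses premultiplied alpha, no color component should exceed alpha; the stand-in pixels are opaque (alpha 255), as they are for a JPEG, so writing 255 to red is valid.

```swift
struct Pixel {
    var a: UInt8
    var b: UInt8
    var g: UInt8
    var r: UInt8
}

let columnCount = 3
let rowCount = 4
let bytesPerRow = 64 * ((columnCount * 4 + 63) / 64)         // `stride` above: 64 here
let pixelsPerRow = bytesPerRow / MemoryLayout<Pixel>.stride  // 16, of which 3 are image pixels

// Hypothetical stand-in for the context's storage: opaque black, rows padded.
var pixels = [Pixel](repeating: Pixel(a: 255, b: 0, g: 0, r: 0),
                     count: pixelsPerRow * rowCount)

for y in 0 ..< rowCount where y % 2 == 0 {
    for x in 0 ..< columnCount {
        // Skip y whole padded rows, then x pixels into the current row.
        pixels[y * pixelsPerRow + x].r = 255
    }
}
```

Indexing with `y * pixelsPerRow + x` rather than `y * columnCount + x` is what keeps each row aligned: with `columnCount` the second row would start at index 3, inside the first row's padding.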

Finally, we get a new image from the context:

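```swift
// drawAtPoint already applied the source image's orientation, so the
// pixels are upright and the new image uses .Up.
let newImage = UIImage(CGImage: CGBitmapContextCreateImage(context)!, scale: image.scale, orientation: UIImageOrientation.Up)
XCPCaptureValue("newImage", value: newImage)
```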

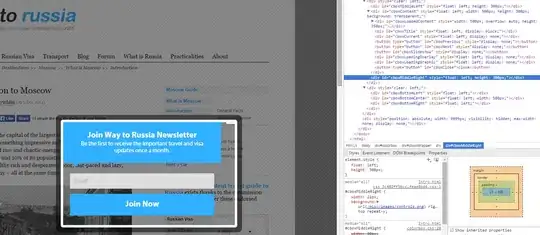

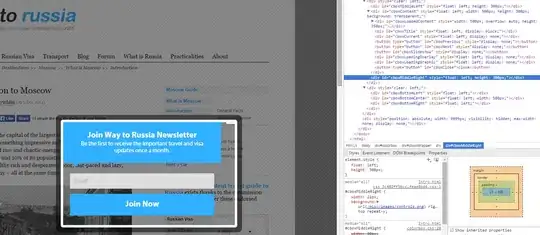

The result, in my playground's timeline:

Note also that if your images are large, going through them pixel by pixel can be slow. If you can find a way to perform your image manipulation using Core Image or GPUImage, it will likely be much faster. Failing that, writing the loop in Objective-C and vectorizing it by hand with NEON intrinsics may provide a big boost.