Multiple methods

There are a number of methods for rendering and storing 3D graphics and models. There are even different methods for rendering 2D graphics! In addition to 2D bitmaps, you also have vector formats such as SVG. SVG uses numbers to define points in an image, and these points define shapes and curves. This allows you to make images without storing individual pixels. The result can be smaller file sizes, plus the ability to transform the image (scale and rotate it) without causing distortion. Most 3D graphics use a similar technique, except in three dimensions. What all of these methods have in common, however, is that they ultimately render the data to a 2D grid of pixels.

Projection

The most common method for rendering 3D models is projection. All of the shapes to be rendered are broken down into triangles before rendering. Why triangles? Because the three points of a triangle are always coplanar, whereas a polygon with four or more points might not be flat. That saves a lot of work for the renderer since it doesn't have to worry about "coloring outside of the lines". One drawback is that most 3D projection technologies don't support perfect spheres or other round surfaces. You have to use approximations and other tricks to make round surfaces (although there are some renderers which support them directly). The next step is to convert, or project, all of the 3D points into 2D points on the screen (as seen below).
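To make the projection step concrete, here is a minimal sketch of a perspective projection in Python. The `project` function, the `focal_length` parameter, and the convention that the camera sits at the origin looking down the +z axis are all assumptions made for this illustration, not something a particular renderer requires.

```python
from typing import Tuple

Point3 = Tuple[float, float, float]
Point2 = Tuple[float, float]


def project(point: Point3, focal_length: float, width: int, height: int) -> Point2:
    """Perspective-project a camera-space point onto a width x height screen.

    Assumes the camera is at the origin looking down +z, so z must be positive.
    """
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    # Similar triangles: dividing by z pulls distant points toward the center.
    sx = focal_length * x / z
    sy = focal_length * y / z
    # Move the origin to the screen center and flip y so that +y points up.
    return (width / 2 + sx, height / 2 - sy)


# One 3D triangle becomes three 2D screen points.
triangle = [(-1.0, -1.0, 4.0), (1.0, -1.0, 4.0), (0.0, 1.0, 2.0)]
screen_triangle = [
    project(p, focal_length=100.0, width=640, height=480) for p in triangle
]
```

The division by `z` is what makes far-away objects look smaller. Real pipelines usually express the same idea with matrices, but the underlying arithmetic is the same.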

From there, you essentially "color in" the triangles to make everything look solid. While this is pretty fast, another downside is that you don't get things like reflections and refractions naturally. Any time you see a reflective or refractive surface in a game, the game is only using trickery to make it look like a reflective or refractive material. The same goes for lighting and shading.
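One common way to "color in" a projected triangle is to test each pixel center against the triangle's three edges. The sketch below assumes that approach; the helper names `edge` and `fill_triangle` are made up for this example, and it returns the covered pixels as a set instead of writing to a real framebuffer.

```python
import math


def edge(a, b, p):
    """Signed area test: tells which side of the edge a->b the point p is on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def fill_triangle(v0, v1, v2, width, height):
    """Return the (x, y) pixels whose centers lie inside the 2D triangle."""
    # Only visit the triangle's bounding box, clamped to the screen.
    min_x = max(math.floor(min(v0[0], v1[0], v2[0])), 0)
    max_x = min(math.ceil(max(v0[0], v1[0], v2[0])), width - 1)
    min_y = max(math.floor(min(v0[1], v1[1], v2[1])), 0)
    max_y = min(math.ceil(max(v0[1], v1[1], v2[1])), height - 1)

    pixels = set()
    for y in range(min_y, max_y + 1):
        for x in range(min_x, max_x + 1):
            p = (x + 0.5, y + 0.5)  # sample at the pixel center
            w0 = edge(v1, v2, p)
            w1 = edge(v2, v0, p)
            w2 = edge(v0, v1, p)
            # Inside if p is on the same side of all three edges
            # (accepting either winding order).
            all_non_negative = w0 >= 0 and w1 >= 0 and w2 >= 0
            all_non_positive = w0 <= 0 and w1 <= 0 and w2 <= 0
            if all_non_negative or all_non_positive:
                pixels.add((x, y))
    return pixels


covered = fill_triangle((0, 0), (4, 0), (0, 4), width=8, height=8)
```

Because every pixel is tested independently, this kind of loop is easy to run in parallel, which is part of why this style of rendering is fast.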

Here is an example of special coloring being used to make a sphere approximation look smooth. Notice that you can still see straight lines around the smoothed version:

Ray tracing

You can also render polygons using ray tracing. With this method, you basically trace the paths that light takes to reach the camera. This allows you to make realistic reflections and refractions. However, I won't go into detail since it is currently too slow to realistically use in games. It is mainly used for 3D animations (like what Pixar makes). Simple scenes with low quality settings can be ray traced pretty quickly, but with complicated, realistic scenes, rendering can take several hours for a single frame (as is the case with Pixar movies). However, it does produce ultra-realistic images.
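To give a feel for what tracing a light path involves, here is a small sketch of the two basic operations: finding where a ray hits an object, and computing the direction it bounces off in. A sphere is used because its intersection formula is short. The function names are assumptions for this example, and a real ray tracer would repeat these steps for many rays and many bounces.

```python
import math


def intersect_sphere(origin, direction, center, radius):
    """Distance along a unit-length ray to the nearest hit, or None if it misses.

    This sketch ignores rays that start inside the sphere.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    # Solve t^2 + b*t + c = 0 for the ray parameter t.
    b = 2.0 * sum(d * o for d, o in zip(direction, oc))
    c = sum(o * o for o in oc) - radius * radius
    discriminant = b * b - 4.0 * c
    if discriminant < 0:
        return None  # the ray misses the sphere entirely
    t = (-b - math.sqrt(discriminant)) / 2.0
    return t if t > 0 else None


def reflect(direction, normal):
    """Mirror a direction about a unit surface normal: r = d - 2 (d . n) n."""
    d_dot_n = sum(d * n for d, n in zip(direction, normal))
    return tuple(d - 2.0 * d_dot_n * n for d, n in zip(direction, normal))


origin, direction = (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)
center, radius = (0.0, 0.0, 5.0), 1.0

t = intersect_sphere(origin, direction, center, radius)
hit = tuple(o + t * d for o, d in zip(origin, direction))
normal = tuple((h - c) / radius for h, c in zip(hit, center))
bounced = reflect(direction, normal)  # direction of the reflected ray
```

The cost adds up quickly because each reflected or refracted ray has to be intersected with the whole scene again.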

Ray casting

Ray casting is not to be confused with the above-mentioned ray tracing. Ray casting does not trace the light paths. That means surfaces are rendered flat, without reflections, and the lighting is not realistic. However, this can be done relatively quickly, since in most cases you don't even need to cast a ray for every pixel: a Wolfenstein-style renderer casts one ray per column of the screen. This is the method that was used for early games such as Wolfenstein 3D. In these games, ray casting was used for the maps, and the characters and other items were rendered using 2D sprites that were always facing the camera. The sprites were drawn from a few different angles to make them look 3D. Here is an image of Wolfenstein 3D:

Castle Wolfenstein with JavaScript and HTML5 Canvas: Image by Martin Kliehm
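As a rough illustration of ray casting on a grid map, the sketch below casts one ray per screen column and turns the distance to the nearest wall into a wall height. The map, the function names, and the fixed small step size are all assumptions chosen for simplicity; practical ray casters typically step from grid line to grid line instead of marching in tiny increments.

```python
import math

# '#' is a wall cell, '.' is empty floor. The map is fully enclosed by walls.
GRID = [
    "#####",
    "#...#",
    "#...#",
    "#...#",
    "#####",
]


def cast_ray(px, py, angle, max_dist=20.0, step=0.01):
    """March along a ray from (px, py) and return the distance to the first wall."""
    dx, dy = math.cos(angle), math.sin(angle)
    dist = 0.0
    while dist < max_dist:
        x, y = px + dx * dist, py + dy * dist
        if GRID[int(y)][int(x)] == "#":
            return dist
        dist += step
    return max_dist


def render_columns(px, py, facing, fov=math.pi / 3, columns=5, screen_height=200):
    """Return one wall height per screen column."""
    heights = []
    for col in range(columns):
        # Spread the rays evenly across the field of view.
        angle = facing - fov / 2 + fov * col / (columns - 1)
        dist = cast_ray(px, py, angle)
        # Use the perpendicular distance so flat walls do not look curved.
        dist *= math.cos(angle - facing)
        heights.append(min(screen_height, int(screen_height / max(dist, 1e-6))))
    return heights


straight_ahead = cast_ray(2.5, 2.5, 0.0)
heights = render_columns(2.5, 2.5, facing=0.0)
```

Each entry in `heights` says how tall to draw the wall slice in that column: closer walls produce taller slices, which is what creates the illusion of depth.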

Storing the data

3D data can be stored using multiple methods, and the storage method is not necessarily tied to the rendering method that is used. The stored data doesn't mean anything by itself, so you have to render it using one of the methods that have already been mentioned.

Polygons

This is similar to SVG. It is also the most common method for storing model data. You define the geometry using 3D points. These points can have other properties, such as texture data (in the form of UV mapping), color data, and whatever else you might want.
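A minimal sketch of how such polygon data might be laid out in memory is shown below. The `Vertex` and `Mesh` classes are hypothetical names for this example; actual engines and file formats each have their own layouts, but the idea of a list of points plus a list of triangles that index into it is very common.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Vertex:
    position: Tuple[float, float, float]               # the 3D point
    uv: Tuple[float, float] = (0.0, 0.0)               # texture coordinate
    color: Tuple[float, float, float] = (1.0, 1.0, 1.0)  # RGB, 0..1


@dataclass
class Mesh:
    vertices: List[Vertex]
    # Each triangle is three indices into the vertices list,
    # so shared corners are stored only once.
    triangles: List[Tuple[int, int, int]]

    def triangle_positions(self, index: int):
        """Look up the three 3D points of one triangle."""
        return tuple(self.vertices[i].position for i in self.triangles[index])


# A flat square built from two triangles that share two corners.
quad = Mesh(
    vertices=[
        Vertex((0.0, 0.0, 0.0), uv=(0.0, 0.0)),
        Vertex((1.0, 0.0, 0.0), uv=(1.0, 0.0)),
        Vertex((1.0, 1.0, 0.0), uv=(1.0, 1.0)),
        Vertex((0.0, 1.0, 0.0), uv=(0.0, 1.0)),
    ],
    triangles=[(0, 1, 2), (0, 2, 3)],
)
```

For example, `quad.triangle_positions(1)` looks up the three corner points of the second triangle.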

The data can be stored using a number of file formats. A common one is COLLADA, which is an XML-based format for 3D data. There are a lot of other formats, though. Fundamentally, however, they all store the same kind of 3D data.

Here is an example of a polygon model:

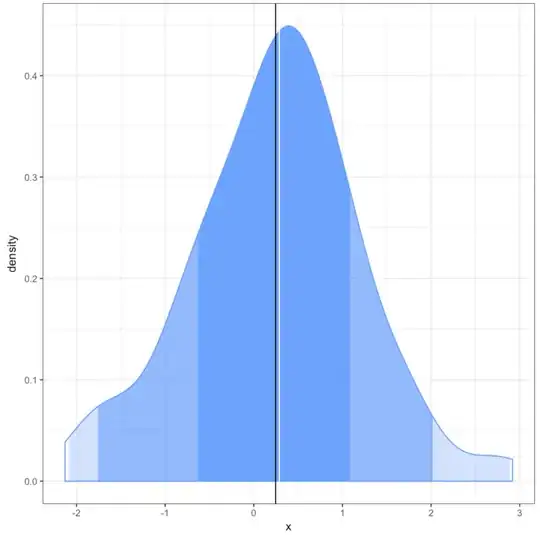

Voxels

This method is pretty simple. You can think of voxel models like bitmaps, except they are a bunch of bitmaps layered together to make 3D bitmaps. So you have a 3D grid of pixels. One way of rendering voxels is to convert the voxel points to 3D cubes. Note that voxels do not have to be rendered as cubes, however. Like pixels, they are only points that may have color data, which can be interpreted in different ways. I won't go into much detail since this isn't too common and you generally render the voxels with polygon methods (like when you render them as cubes). Here is an example of a voxel model:

Image by Wikipedia user Vossman
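The "layered bitmaps" idea can be sketched as a nested list, with one possible conversion of filled voxels into cubes. The grid layout (`grid[z][y][x]`), the use of `None` for empty cells, and the `voxel_cubes` helper are assumptions made for this example.

```python
from itertools import product

# A 2 x 2 x 2 voxel grid indexed as grid[z][y][x].
# None means "empty"; anything else is the voxel's color (an RGB tuple here).
RED = (255, 0, 0)
grid = [
    [[RED, None], [None, None]],  # layer z = 0
    [[RED, RED], [None, None]],   # layer z = 1
]


def voxel_cubes(grid, size=1.0):
    """Yield (corners, color) for each filled voxel.

    corners is the list of the 8 corner points of that voxel's cube.
    """
    for z, layer in enumerate(grid):
        for y, row in enumerate(layer):
            for x, color in enumerate(row):
                if color is None:
                    continue  # skip empty cells
                corners = [
                    ((x + dx) * size, (y + dy) * size, (z + dz) * size)
                    for dx, dy, dz in product((0, 1), repeat=3)
                ]
                yield corners, color


cubes = list(voxel_cubes(grid))
```

Each cube could then be split into triangles and drawn with the polygon methods described earlier.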