You are correct in every sense: you have provided a counterexample to the statement. If this is for an exam, that alone should earn you full marks.

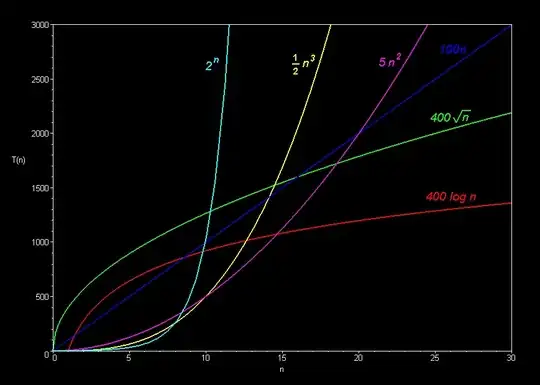

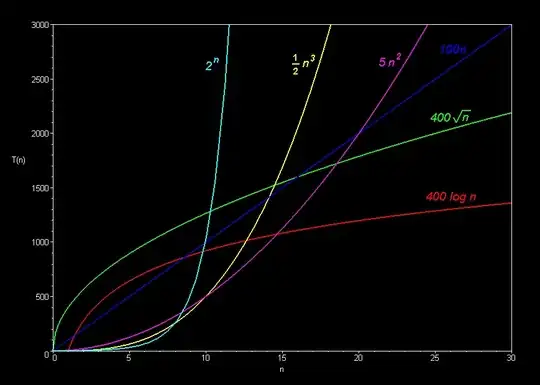

Still, for a better understanding of Big-O notation and complexity in general, I will share my own reasoning below. I also suggest always picturing the graph when you are confused, especially the O(n) and O(n^2) lines:

Big-O notation

When I first learned computational complexity, my reasoning was this:

Big-O notation says that for sufficiently large inputs, a higher-order algorithm will always be slower than a lower-order one. How large counts as "sufficient" depends on the exact formula (in the graph, comparing the O(n) and O(n^2) lines, it is n = 20).

That means for small inputs there is no guarantee that an algorithm of higher-order complexity will run slower than one of lower order.

But Big-O notation does tell you one thing: as the input size keeps increasing, it eventually reaches a "sufficient" size, and beyond that point the higher-order algorithm is always slower. Such a "sufficient" size is guaranteed to exist*.
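To make "sufficient size" concrete, here is a small Python sketch using two hypothetical cost models, T1(n) = c_linear * n and T2(n) = c_quadratic * n^2. The constants (20 and 1) are my own assumption, picked so the curves meet at n = 20; real algorithms have different constants, and the function name `first_n_where_quadratic_is_slower` is purely illustrative.

```python
def first_n_where_quadratic_is_slower(c_linear: int, c_quadratic: int) -> int:
    """Smallest n >= 1 such that c_quadratic * m**2 > c_linear * m for every m >= n.

    Hypothetical cost models with positive integer constants:
      T1(n) = c_linear * n        (an O(n) algorithm)
      T2(n) = c_quadratic * n**2  (an O(n^2) algorithm)
    """
    # For m > 0: c_q * m^2 > c_l * m  <=>  m > c_l / c_q,
    # so once the quadratic cost overtakes the linear one it stays ahead.
    return c_linear // c_quadratic + 1


# Small inputs: the O(n^2) model is cheaper until the curves meet at n = 20.
for n in (5, 10, 20, 21, 40):
    print(f"n={n:>2}  20n={20 * n:>4}  n^2={n * n:>4}")

print(first_n_where_quadratic_is_slower(20, 1))    # 21
print(first_n_where_quadratic_is_slower(1000, 1))  # 1001
```

However large the constant on the linear term is, the function still returns a finite n. In these models, that finite crossover point is the "sufficient" size.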

Worst-case complexity

While Big-O notation provides an upper bound on the running time of an algorithm, that running time depends on the structure of the input and on the implementation of the algorithm. So an algorithm generally has a best-case, an average-case, and a worst-case complexity.
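As a small illustration of input structure mattering, here is a Python sketch that counts comparisons in insertion sort. Insertion sort is my own choice of example, not something from the question: it is O(n) in the best case (already sorted input) and O(n^2) in the worst case (reversed input). The helper `insertion_sort_comparisons` is hypothetical.

```python
def insertion_sort_comparisons(values):
    """Sort a copy of `values` with insertion sort; return (sorted_list, comparisons)."""
    a = list(values)
    comparisons = 0
    for i in range(1, len(a)):
        key = a[i]
        j = i - 1
        # Shift larger elements one slot right until key's position is found.
        while j >= 0:
            comparisons += 1
            if a[j] > key:
                a[j + 1] = a[j]
                j -= 1
            else:
                break
        a[j + 1] = key
    return a, comparisons


n = 100
_, best = insertion_sort_comparisons(range(n))               # already sorted
_, worst = insertion_sort_comparisons(range(n - 1, -1, -1))  # reversed
print(best, worst)  # 99 4950
```

Same algorithm, same input size, yet the sorted input needs n - 1 comparisons while the reversed one needs n(n - 1)/2.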

The famous example comes from sorting: QuickSort vs. MergeSort!

QuickSort, with a worst case of O(n^2) (but an average case of O(n lg n))

MergeSort, with a worst case of O(n lg n)
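To see where QuickSort's O(n^2) worst case comes from, here is a Python sketch that counts comparisons for a deliberately naive QuickSort (first element as pivot, my own assumption; real implementations usually pick pivots randomly or by median-of-three to avoid this) and a plain top-down MergeSort. Both helper names are hypothetical, and comparison counts are not running time: QuickSort's practical speed comes from constant factors and memory behaviour that this sketch does not model.

```python
def quicksort_comparisons(values):
    """Naive QuickSort with the first element as pivot; returns (sorted_list, comparisons)."""
    comparisons = 0

    def sort(a):
        nonlocal comparisons
        if len(a) <= 1:
            return a
        pivot, rest = a[0], a[1:]
        # Count one logical comparison per remaining element against the pivot.
        comparisons += len(rest)
        smaller = [x for x in rest if x < pivot]
        larger = [x for x in rest if x >= pivot]
        return sort(smaller) + [pivot] + sort(larger)

    return sort(list(values)), comparisons


def merge_sort_comparisons(values):
    """Top-down MergeSort; returns (sorted_list, comparisons made while merging)."""
    comparisons = 0

    def sort(a):
        nonlocal comparisons
        if len(a) <= 1:
            return a
        mid = len(a) // 2
        left, right = sort(a[:mid]), sort(a[mid:])
        merged, i, j = [], 0, 0
        while i < len(left) and j < len(right):
            comparisons += 1
            if left[i] <= right[j]:
                merged.append(left[i])
                i += 1
            else:
                merged.append(right[j])
                j += 1
        merged.extend(left[i:])
        merged.extend(right[j:])
        return merged

    return sort(list(values)), comparisons


data = list(range(256))  # already sorted: the worst case for the naive pivot
print(quicksort_comparisons(data)[1])   # 32640  (= 256 * 255 / 2)
print(merge_sort_comparisons(data)[1])  # 1024   (= 128 per level * 8 levels)
```

On a shuffled list both counts grow roughly like n lg n, which is why QuickSort's worst case rarely shows up in practice.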

However, in practice QuickSort is usually faster than MergeSort!

So, if your question is about worst-case complexity, QuickSort and MergeSort may be the best counterexample I can think of, because both are common and well known.

Therefore, combining the two parts: whether you look at input size, input structure, or algorithm implementation, the answer to your question is NO.