I am trying to run OCR on this toy example of a receipt, using Python 2.7 and OpenCV 3.1.

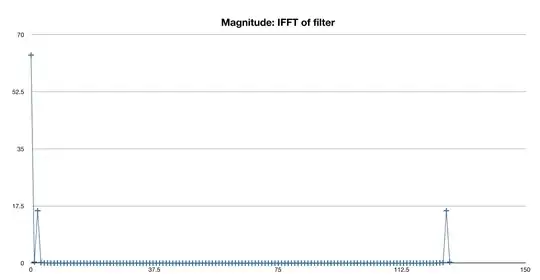

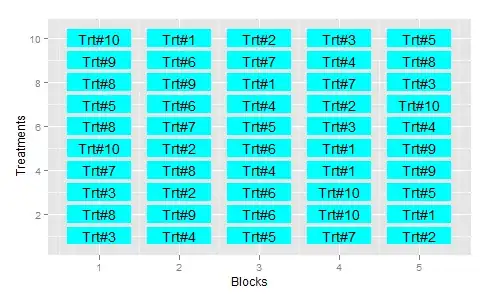

My planned pipeline is grayscale + blur + external edge detection + segmentation of each area of the receipt (for example "Category", to see later which option is marked; in this case, cash).
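
For the last step (deciding which option is marked once an area has been cropped), here is a minimal sketch of what I have in mind. It assumes each option has already been cut out as a grayscale NumPy array, and that the marked box simply contains the most dark pixels. The function names, the threshold of 128, and every option name other than "cash" are hypothetical.

```python
import numpy as np


def ink_ratio(region, dark_threshold=128):
    """Fraction of pixels in a grayscale crop darker than dark_threshold."""
    region = np.asarray(region)
    return float(np.count_nonzero(region < dark_threshold)) / region.size


def marked_option(crops):
    """Return the name of the crop with the most ink (assumed to be the marked one)."""
    return max(crops, key=lambda name: ink_ratio(crops[name]))


# Synthetic crops standing in for real cut-outs of the "Category" area.
blank = np.full((20, 20), 255, dtype=np.uint8)   # empty white box
ticked = blank.copy()
ticked[5:15, 5:15] = 0                           # a dark mark inside the box

# Option names other than "cash" are made up for illustration.
crops = {"cash": ticked, "cheque": blank, "money order": blank}
print(marked_option(crops))
```

This only works if the crops are aligned well, which is why the skew problem comes first.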

What I find complicated is handling a "skewed" image: transforming it properly and then "automatically" segmenting each area of the receipt.

Example:

Any suggestions?

The code below gets as far as the edge detection, but only for a receipt like the first image. My issue is not the image-to-text step; it is the pre-processing of the image.

Any help is more than appreciated! :)

    import os

    import cv2
    import numpy as np

    os.chdir(".")  # Replace "." with your own directory

    image = cv2.imread("Rent-Receipt.jpg", cv2.IMREAD_GRAYSCALE)
    blurred = cv2.GaussianBlur(image, (5, 5), 0)
    # blurred = cv2.bilateralFilter(image, 9, 75, 75)

    # Apply Canny edge detection
    edged = cv2.Canny(blurred, 0, 20)

    # Find the external contours (OpenCV 3.x returns three values)
    (_, contours, _) = cv2.findContours(edged, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_NONE)