I am trying to approximate a sine function with a neural network (Keras).

Yes, I read the related posts :)

Using four hidden neurons with sigmoid and an output layer with linear activation works fine.

But there are also settings that provide results that seem strange to me.

Since I have just started working with neural networks, I am interested in what happens and why, but I have not been able to figure it out so far.

    # -*- coding: utf-8 -*-
    import numpy as np
    np.random.seed(7)  # fix the NumPy seed before importing Keras

    from keras.models import Sequential
    from keras.layers import Dense
    import pylab as pl
    from sklearn.preprocessing import MinMaxScaler

    # Training data: one period of sin(x), as column vectors.
    X = np.linspace(0.0, 2.0 * np.pi, 10000).reshape(-1, 1)
    Y = np.sin(X)

    # Scale inputs and targets to [0, 1] (the MinMaxScaler default).
    x_scaler = MinMaxScaler()
    # y_scaler = MinMaxScaler(feature_range=(-1.0, 1.0))
    y_scaler = MinMaxScaler()
    X = x_scaler.fit_transform(X)
    Y = y_scaler.fit_transform(Y)

    # One hidden layer with four units; the alternative activations are commented out.
    model = Sequential()
    model.add(Dense(4, input_dim=X.shape[1], kernel_initializer='uniform', activation='relu'))
    # model.add(Dense(4, input_dim=X.shape[1], kernel_initializer='uniform', activation='sigmoid'))
    # model.add(Dense(4, input_dim=X.shape[1], kernel_initializer='uniform', activation='tanh'))
    model.add(Dense(1, kernel_initializer='uniform', activation='linear'))

    model.compile(loss='mse', optimizer='adam', metrics=['mae'])
    model.fit(X, Y, epochs=500, batch_size=32, verbose=2)

    # Predict on the training inputs and undo the target scaling.
    res = model.predict(X, batch_size=32)
    res_rscl = y_scaler.inverse_transform(res)
    Y_rscl = y_scaler.inverse_transform(Y)

    # Top panel: prediction vs. target. Bottom panel: residual.
    pl.subplot(211)
    pl.plot(res_rscl, label='ann')
    pl.plot(Y_rscl, label='train')
    pl.xlabel('#')
    pl.ylabel('value [arb.]')
    pl.legend()

    pl.subplot(212)
    pl.plot(Y_rscl - res_rscl, label='diff')
    pl.legend()

    pl.show()

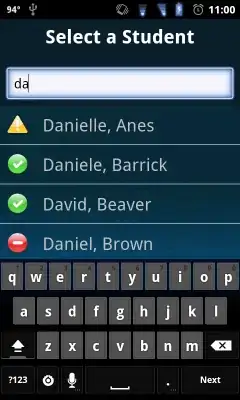

This is the result for four hidden neurons (ReLU) and linear output activation.

Why does the result take the shape of the ReLU?
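To make this question concrete, here is a minimal sketch, independent of Keras, of what a network with four ReLU hidden units and a linear output computes on a scalar input. The weights are hypothetical values picked by hand, not the ones from the trained model, and the helper names are made up for illustration. The output is a sum of hinge functions, so it is piecewise linear, and it can only bend where a hidden unit switches on or off inside the input range.

```python
def relu(z):
    return max(0.0, z)

def tiny_relu_net(x, hidden, out_w, out_b):
    """Forward pass; hidden is a list of (weight, bias) pairs, one per ReLU unit."""
    return sum(v * relu(w * x + b) for v, (w, b) in zip(out_w, hidden)) + out_b

def kinks_in_unit_interval(hidden):
    """Inputs in (0, 1) at which some hidden unit switches on or off."""
    return sorted(-b / w for w, b in hidden if w != 0.0 and 0.0 < -b / w < 1.0)

# Hypothetical weights, chosen by hand for illustration only.
# Unit 3 is active on all of [0, 1]; unit 4 is never active there.
hidden = [(1.0, -0.25), (1.0, -0.75), (1.0, 0.5), (-1.0, -0.1)]
out_w = [-4.0, 4.0, 2.0, 1.0]
out_b = 0.0

kinks = kinks_in_unit_interval(hidden)
print("kinks inside (0, 1):", kinks)
for x in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(x, tiny_relu_net(x, hidden, out_w, out_b))
```

In this toy setup only two of the four units produce a bend inside the scaled input range, so the curve consists of three straight segments. If something similar happens to the trained model, a plot with a single visible kink would look like one ReLU.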

Does this have something to do with the output normalization?
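For reference, here is a minimal sketch in plain Python of what the target scaling does, assuming the formula documented for `MinMaxScaler` with its default `feature_range=(0, 1)`. The function names are made up for illustration and are not part of scikit-learn. The transform is affine (one multiplication and one offset), so it shifts and stretches the sine without changing its shape, and the inverse recovers the original values.

```python
import math

def minmax_fit(values, lo=0.0, hi=1.0):
    """Return (scale, offset) so that v * scale + offset maps values onto [lo, hi]."""
    vmin, vmax = min(values), max(values)
    scale = (hi - lo) / (vmax - vmin)
    return scale, lo - vmin * scale

def minmax_transform(values, params):
    scale, offset = params
    return [v * scale + offset for v in values]

def minmax_inverse(values, params):
    scale, offset = params
    return [(v - offset) / scale for v in values]

# One period of the sine, sampled more coarsely than in the script above.
xs = [2.0 * math.pi * i / 999 for i in range(1000)]
ys = [math.sin(x) for x in xs]

params = minmax_fit(ys)
ys_scaled = minmax_transform(ys, params)
ys_back = minmax_inverse(ys_scaled, params)

max_err = max(abs(a - b) for a, b in zip(ys, ys_back))
print(min(ys_scaled), max(ys_scaled), max_err)
```

Because the output layer is linear, its weights and bias can absorb an affine rescaling of the targets, so the scaling by itself does not restrict which shapes the network can represent. It may still influence how training proceeds.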