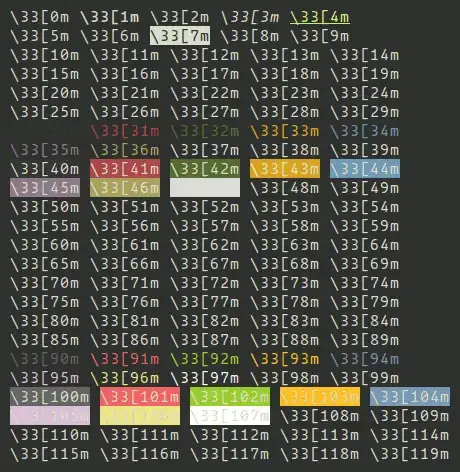

This is the original screenshot. I cropped it into 4 parts and cleaned up the background as much as I could, but tesseract only detects the last column here and ignores the rest.

The tesseract output is shown below as-is. It contains blank lines, which I remove when processing the result.

Femme—Fatale.

DaRkLoRdEIa

aChineseN1gg4

Noob_Diablo_

Kicked.

NosNoel

ChikiZD

Death_Eag|e_42

Chai—.

3579 10 1 7 148

2962 3 O 7 101

2214 2 2 7 99

2205 1 3 6 78

8212

7198

6307

5640

4884

15

40

40

6O

80

80

I am just dumping the output of

`result = pytesseract.image_to_string(Image.open("D:/newapproach/B&W"+str(i)+".jpg"), lang="New_Language")`

But I do not know how to proceed from here to get a consistent result. Is there any way to force tesseract to recognize the text area and scan it? In the trainer (SunnyPage), tesseract's default recognition scan fails to recognize some areas, but once I select them manually, everything is detected and converted to text correctly.
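To make the question concrete, here is a rough sketch of the kind of thing I have in mind: cropping to a known text area and passing a page segmentation mode through the `config` argument of `pytesseract.image_to_string`. The helper names (`build_config`, `clean_lines`, `ocr_region`), the choice of mode 6 (treat the image as a single uniform block of text), and any crop box are my own assumptions, not something I have confirmed fixes the problem.

```python
def build_config(psm=6):
    """Build a Tesseract config string selecting a page segmentation mode.

    Assumption: the installed Tesseract accepts "--psm"; some older
    releases expect a single dash ("-psm") instead.
    """
    return "--psm {}".format(psm)


def clean_lines(text):
    """Drop the blank lines from raw OCR output and strip whitespace."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def ocr_region(image_path, box=None, lang="New_Language", psm=6):
    """OCR one image, optionally restricted to a manually chosen region.

    box is a hypothetical (left, upper, right, lower) pixel rectangle.
    Imports are kept inside the function so the helpers above can be
    used without Pillow or pytesseract installed.
    """
    from PIL import Image
    import pytesseract

    image = Image.open(image_path)
    if box is not None:
        # Restrict recognition to the selected text area.
        image = image.crop(box)
    text = pytesseract.image_to_string(image, lang=lang, config=build_config(psm))
    return clean_lines(text)
```

With this, each cropped part would be read with something like `ocr_region("D:/newapproach/B&W" + str(i) + ".jpg")`, optionally passing a `box` for the column that gets skipped. I do not know yet whether a different segmentation mode would suit these columns better.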