What is the easiest way to use packages such as NumPy and pandas within the new AWS ETL tool called Glue? I have a completed Python script that uses NumPy and pandas, and I would like to run it in AWS Glue.

Can you provide the link to the pandas library (jar file) that I can add to the Glue job as a dependent jar? Thanks – Yuva Mar 13 '18 at 17:10

13 Answers

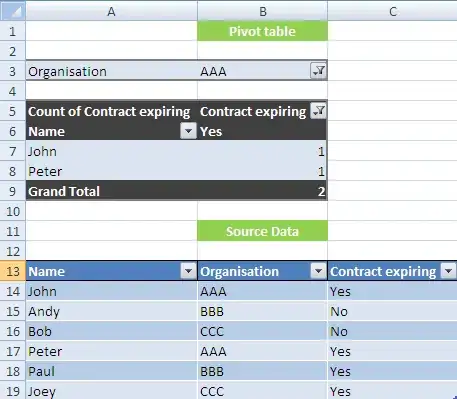

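You can check the latest Python packages installed by running this script as a Glue job:

```python
import logging
import subprocess
import sys

# Without a handler, INFO messages would be dropped by logging's last-resort handler
logging.basicConfig(level=logging.INFO)
logger = logging.getLogger(__name__)

if __name__ == '__main__':
    # pip._internal is not a stable API, so run "pip list" through the
    # interpreter's own pip module and log its output instead
    result = subprocess.run([sys.executable, '-m', 'pip', 'list'],
                            stdout=subprocess.PIPE, universal_newlines=True, check=True)
    logger.info(result.stdout)
```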

As of 30-Jun-2020, Glue has these Python packages pre-installed, so NumPy and pandas are covered:

| Package | Version |
|---|---|
| awscli | 1.16.242 |
| boto3 | 1.9.203 |
| botocore | 1.12.232 |
| certifi | 2020.4.5.1 |
| chardet | 3.0.4 |
| colorama | 0.3.9 |
| docutils | 0.15.2 |
| idna | 2.8 |
| jmespath | 0.9.4 |
| numpy | 1.16.2 |
| pandas | 0.24.2 |
| pip | 20.0.2 |
| pyasn1 | 0.4.8 |
| PyGreSQL | 5.0.6 |
| python-dateutil | 2.8.1 |
| pytz | 2019.3 |
| PyYAML | 5.2 |
| requests | 2.22.0 |
| rsa | 3.4.2 |
| s3transfer | 0.2.1 |
| scikit-learn | 0.20.3 |
| scipy | 1.2.1 |
| setuptools | 45.1.0 |
| six | 1.14.0 |
| urllib3 | 1.25.8 |
| virtualenv | 16.7.9 |
| wheel | 0.34.2 |

You can install additional packages in a Glue Python shell job by listing them in the requirements.txt used to build the .whl file you attach to the job. The .whl file is collected and installed before your script starts. I would also suggest looking into SageMaker Processing, which is easier for Python-based jobs. Unlike the serverless instance behind a Glue Python shell job, you are not limited to 16 GB of memory there.
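A minimal `setup.py` sketch of that idea, assuming (as described above) that Glue installs the attached wheel with pip, which then resolves the wheel's `install_requires`. The wheel carries no code of its own; it only declares the entries of `requirements.txt` as dependencies. The package name `glue_job_deps` is a hypothetical placeholder. Build it with `python setup.py bdist_wheel` and upload the file from `dist/` to S3.

```python
# setup.py: hypothetical "dependencies only" wheel for a Glue Python shell job
import re
import sys
from pathlib import Path

# "#" at the start of a line or after whitespace starts a comment in requirements files
COMMENT_RE = re.compile(r"(^|\s+)#.*$")


def read_requirements(path="requirements.txt"):
    """Return requirement specifiers from path, skipping blank lines and comments."""
    requirements = []
    for line in Path(path).read_text().splitlines():
        line = COMMENT_RE.sub("", line).strip()
        if line:
            requirements.append(line)
    return requirements


if __name__ == "__main__" and len(sys.argv) > 1:
    # Only build when a command such as "bdist_wheel" is given on the command line
    from setuptools import setup

    setup(
        name="glue_job_deps",  # hypothetical package name
        version="0.1.0",
        py_modules=[],  # no code of its own; it only pulls in dependencies
        install_requires=read_requirements(),
    )
```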

I think the current answer is you cannot. According to AWS Glue Documentation:

Only pure Python libraries can be used. Libraries that rely on C extensions, such as the pandas Python Data Analysis Library, are not yet supported.

But even when I tried to include a pure Python library from S3, the Glue job failed because of an HDFS permission problem. If you find a way to solve this, please let me know as well.

Those are already part of glue nodes, and also if you want to install your own packages there is a way to do it – Suresh May 17 '21 at 11:29

**Obsolete:** Glue 2.0 has a built-in set of libraries, including compiled extensions such as `pandas`. – Dima Tisnek Jun 30 '21 at 09:26

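If your libraries are not pure Python and you still want to use them, you can use the script below in your Glue code:

```python
import os
import site
from importlib import reload  # reload() is not a builtin in Python 3

from setuptools.command import easy_install

# GLUE_INSTALLATION is set in the environment of Python shell jobs; fall back
# to the first site-packages directory if it is missing (see the comments below)
install_path = os.environ.get('GLUE_INSTALLATION', site.getsitepackages()[0])

easy_install.main(["--install-dir", install_path, "<library-name>"])

# Re-run site initialisation so the newly installed library is on sys.path
reload(site)

# import <installed library>
```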

Where to define GLUE_INSTALLATION? What should be its value? – Sandeep Fatangare Jun 09 '19 at 06:20

Are you using a Glue PySpark job or a Python shell job? It works only for Python shell jobs, and the GLUE_INSTALLATION value will be read from the OS environment variables. – Prabhakar Reddy Jun 09 '19 at 06:23

We are trying to install psycopg2 library but it is throwing error : Download error on https://pypi.org/simple/: [Errno 99] Cannot assign requested address -- Some packages may not be found! No local packages or working download links found for psycopg2 using python shell job error: Could not find suitable distribution for Requirement.parse('psycopg2') – Sandeep Fatangare Jun 11 '19 at 13:43

Never mind, it seems to be a VPC issue. Without a VPC, we are able to install the psycopg2 library. – Sandeep Fatangare Jun 13 '19 at 13:12

@SandeepFatangare were you able to install the psycopg2 library in Glue? If yes, could you please share the steps? Thanks. – Nikhil Yadav Jul 23 '19 at 06:34

@SandeepFatangare `GLUE_INSTALLATION` was not set in my environment, either. I was able to find site-packages directory with `site.getsitepackages()[0]`. Using this value instead worked for me. – Vito Oct 18 '19 at 12:46

Can you please provide a clear good working example? Use the smart_open package (or any other package) as an example. – bda Feb 21 '20 at 18:06

There is an update:

...You can now use Python shell jobs... ...Python shell jobs in AWS Glue support scripts that are compatible with Python 2.7 and come pre-loaded with libraries such as the Boto3, NumPy, SciPy, pandas, and others.

https://aws.amazon.com/about-aws/whats-new/2019/01/introducing-python-shell-jobs-in-aws-glue/

Specifically, their supported libraries are here: https://docs.aws.amazon.com/glue/latest/dg/add-job-python.html#python-shell-supported-library – combinatorist Oct 08 '20 at 19:11

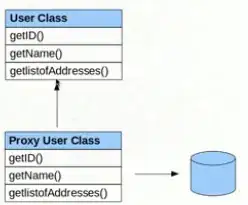

AWS Glue library/dependency management is a little convoluted.

There are basically three ways to add the required packages.

Approach 1

Via the AWS console UI (job definition):

Action --> Edit Job, then scroll all the way down and expand

Security configuration, script libraries, and job parameters (optional)

Then add all your packages as .zip files to the Python library path (you need to upload your .zip files to S3 first, then specify the S3 path).

One catch here is that your zip file must contain `__init__.py` in the package's root folder.

Also, if your package depends on other packages, it will be very difficult to add those packages as well.
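A small sketch of building such an archive with the standard library, assuming your code is a regular package folder (hypothetically `mypkg/`) that has an `__init__.py`. Entries are stored as `mypkg/...`, so `import mypkg` resolves once the zip is on the library path. It does not bundle the package's own dependencies.

```python
import zipfile
from pathlib import Path


def zip_package(package_dir, zip_path):
    """Zip package_dir so the package folder itself sits at the archive root.

    The archive then contains mypkg/__init__.py, mypkg/helpers.py, ...,
    which is the layout Python's zip importer needs for "import mypkg".
    """
    package_dir = Path(package_dir).resolve()
    if not (package_dir / "__init__.py").is_file():
        raise ValueError(f"{package_dir} has no __init__.py, so it is not a regular package")
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as zf:
        for path in sorted(package_dir.rglob("*")):
            rel = path.relative_to(package_dir)
            if path.is_file() and "__pycache__" not in rel.parts:
                # Store paths relative to the parent folder so the package name is kept
                zf.write(path, path.relative_to(package_dir.parent).as_posix())
    return zip_path
```

For example, `zip_package("mypkg", "mypkg.zip")` produces the file you would upload to S3 and reference in the Python library path.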

Approach 2

Programmatically installing your packages (the easy one)

Here is the path you can install the required libraries into:

/home/spark/.local/lib/python3.7/site-packages/

Here is an example of installing an AWS package; I installed the SageMaker package here:

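```python
import site
from importlib import reload

from setuptools.command import easy_install  # deprecated, see the comment below

# install_path = site.getsitepackages()[0]
install_path = '/home/spark/.local/lib/python3.7/site-packages/'

# Download the SageMaker 2.3.0 source distribution from PyPI and install it into install_path
easy_install.main(["--install-dir", install_path, "https://files.pythonhosted.org/packages/60/c7/126ad8e7dfbffaf9a5384ca6123da85db6c7b4b4479440ce88c94d2bb23f/sagemaker-2.3.0.tar.gz"])

# Re-run site initialisation so the new package directory is picked up
reload(site)
```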
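Because `easy_install` is deprecated, a comparable sketch can call pip through the running interpreter with `--target` and then put that directory on `sys.path`. This swaps in pip for the `easy_install` call used above; the target directory and package list are placeholders, and the job needs network access to PyPI.

```python
import importlib
import subprocess
import sys


def pip_install_command(packages, target):
    """Build the pip command that installs packages into the target directory."""
    return [sys.executable, "-m", "pip", "install", "--target", target, *packages]


def install_into(packages, target):
    """Install packages into target and make them importable in this process."""
    subprocess.check_call(pip_install_command(packages, target))
    if target not in sys.path:
        # Put target first so the freshly installed versions take precedence
        sys.path.insert(0, target)
    # Drop cached directory listings so the new packages are found on import
    importlib.invalidate_caches()
```

Usage would look like `install_into(["sagemaker==2.3.0"], "/tmp/glue_libs")` followed by `import sagemaker`.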

Approach 3 (suggested and clean)

Under the "Security configuration, script libraries, and job parameters (optional)" section, go to the job parameters and add the required libraries with the `--additional-python-modules` parameter.

You can specify as many packages as you need, separated by commas.
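As a sketch of the same setting outside the console, the parameter can be supplied as a default argument when the job is created with boto3's Glue `create_job` call. The job name, role ARN, and script location are hypothetical; the client is passed in so the function can be tried without AWS credentials.

```python
def additional_python_modules(packages):
    """Join requirement specifiers into the comma-separated value Glue expects."""
    return ",".join(p.strip() for p in packages if p.strip())


def create_glue_job(glue_client, name, role_arn, script_location, packages):
    """Create a Glue 2.0 Spark job that pip-installs the given packages at start-up.

    glue_client is expected to be boto3.client("glue").
    """
    return glue_client.create_job(
        Name=name,
        Role=role_arn,
        Command={"Name": "glueetl", "ScriptLocation": script_location, "PythonVersion": "3"},
        GlueVersion="2.0",
        DefaultArguments={"--additional-python-modules": additional_python_modules(packages)},
    )
```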

happy to help

Note that the Approach 2 causes a WARNING: The easy_install command is deprecated and will be removed in a future version. – Mauro Mascia Jan 26 '21 at 14:07

When you click Run job, there is a "Job parameters (optional)" section that is collapsed by default. When you expand it, you get the following options, which you can use to point the job at libraries stored in S3. This works for me:

- Python library path: s3://bucket-name/folder-name/file-name
- Dependent jars path: s3://bucket-name/folder-name/file-name
- Referenced files path: s3://bucket-name/folder-name/file-name
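If you define jobs from code instead of the console, these three fields correspond to Glue's special job parameters `--extra-py-files`, `--extra-jars`, and `--extra-files`, each taking a comma-separated list of S3 paths. The helper below is a hypothetical sketch that builds those `DefaultArguments` entries; the bucket and file names are placeholders.

```python
def library_arguments(python_libs=(), jars=(), files=()):
    """Build Glue DefaultArguments entries from lists of S3 paths.

    Console field          -> job parameter
    Python library path    -> --extra-py-files
    Dependent jars path    -> --extra-jars
    Referenced files path  -> --extra-files
    """
    arguments = {}
    for key, paths in (
        ("--extra-py-files", python_libs),
        ("--extra-jars", jars),
        ("--extra-files", files),
    ):
        paths = [p for p in paths if p]
        if not paths:
            continue
        not_s3 = [p for p in paths if not p.startswith("s3://")]
        if not_s3:
            raise ValueError(f"expected s3:// paths, got {not_s3}")
        # Several paths go into one comma-separated string
        arguments[key] = ",".join(paths)
    return arguments
```

The returned dictionary can be passed as (or merged into) `DefaultArguments` when creating the job.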

I have created a Glue job and uploaded the Python script, pandas-0.22.0.tar, and the pandas-0.22.0-cp27-cp27mu-manylinux1_x86_64.whl file. But my job failed with the error "import pandas as pd ImportError: No module named pandas". Please suggest what other files need to be uploaded to resolve the pandas error. The pandas*.tar file is uploaded to the Dependent jars path. – Yuva Mar 13 '18 at 18:12

According to AWS Glue Documentation: "Only pure Python libraries can be used. Libraries that rely on C extensions, such as the pandas Python Data Analysis Library, are not yet supported." – Daniel I. Cruz Jul 24 '19 at 14:54

The accepted answer is no longer true since 2019.

awswrangler is what you need.

It allows you to use pandas in AWS Glue and AWS Lambda.

https://github.com/awslabs/aws-data-wrangler

Install using an AWS Lambda layer:

https://aws-data-wrangler.readthedocs.io/en/latest/install.html#setting-up-lambda-layer

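Example: Typical pandas ETL

```python
import pandas
import awswrangler as wr

df = pandas.read_...  # Read from anywhere

# Typical pandas, NumPy or PyArrow transformation HERE!

# Store the data and register its metadata in the Glue Data Catalog (data lake).
# awswrangler 1.x+ exposes this as wr.s3.to_parquet; releases before 1.0
# used wr.pandas.to_parquet(dataframe=df, ...) instead.
wr.s3.to_parquet(
    df=df,
    path="s3://...",
    dataset=True,  # needed for partition_cols and catalog registration
    database="database",
    table="table_name",  # placeholder table name
    partition_cols=["col_name"],
)
```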

AWS Glue version 2.0, released in August 2020, has pandas and NumPy installed by default. See https://docs.aws.amazon.com/glue/latest/dg/reduced-start-times-spark-etl-jobs.html#reduced-start-times-new-features for details.

If you go to edit a job (or when you create a new one) there is an optional section that is collapsed called "Script libraries and job parameters (optional)". In there, you can specify an S3 bucket for Python libraries (as well as other things). I haven't tried it out myself for that part yet, but I think that's what you are looking for.

If you have a number of modules you want to include, you can archive them into a single ZIP file and attach it via the "Script libraries and job parameters" section. Your modules will then be available to the job at run time. – Alexey Bakulin Feb 27 '18 at 08:24

As of now, you can use Python extension modules and libraries with your AWS Glue ETL scripts as long as they are written in pure Python. Libraries that rely on C extensions, such as pandas, are not supported at present, nor are extensions written in other languages.

Use Glue version 2 instead of version 3. Steps:

- Go to the Glue job and edit the script with the code below:

```python
import sys

from awsglue.transforms import *
from awsglue.utils import getResolvedOptions
from pyspark.context import SparkContext
from awsglue.context import GlueContext
from awsglue.job import Job
import pandas as pd

args = getResolvedOptions(sys.argv, ['JOB_NAME'])

sc = SparkContext()
glueContext = GlueContext(sc)
spark = glueContext.spark_session
job = Job(glueContext)
job.init(args['JOB_NAME'], args)

# Read the Excel sheet with pandas and cast every cell to str
excel_path = r"s3://input/employee.xlsx"
df_xl_op = pd.read_excel(excel_path, sheet_name="Sheet1")
df = df_xl_op.applymap(str)

# Convert the pandas DataFrame into a Spark DataFrame
input_df = spark.createDataFrame(df)
input_df.printSchema()

job.commit()
```

- Save the script.
- Go to Action - Edit job, select Glue version 2.0, and set the following key/value under the security configuration section:

  key: --additional-python-modules

  value: pandas==1.2.4,xlrd==1.2.0,numpy==1.20.1,fsspec==0.7.4

- Save and run the job.

It will resolve your error, and you will be able to read the Excel file using pandas.

If you want to integrate Python modules into your AWS Glue ETL job, you can. You can use whatever Python module you want.

Glue is essentially a serverless Python runtime environment, so all you need to do is package the modules your script requires using `pip install -t /path/to/your/directory` and then upload them to your S3 bucket.

While creating the AWS Glue job, after pointing to the S3 script and temp location, go to the advanced job parameters option and you will see the Python libraries option there.

You can just point that to the Python module packages you uploaded to S3.
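A minimal sketch of that packaging step, run on your own machine rather than in Glue: it installs the requirements into a build directory with `pip install --target` and zips the directory contents so the installed packages sit at the archive root. The paths are hypothetical. Per the comments below, on older Glue versions this only helps for pure Python packages, and any compiled wheels would also have to match Glue's platform and Python version.

```python
import shutil
import subprocess
import sys
from pathlib import Path


def package_dependencies(requirements, build_dir, archive_base):
    """pip-install requirements into build_dir, then zip its contents.

    Returns the path of the .zip, whose root holds the installed top-level
    packages so it can be referenced directly as a Python library path.
    """
    build_dir = Path(build_dir)
    build_dir.mkdir(parents=True, exist_ok=True)
    if requirements:
        subprocess.check_call(
            [sys.executable, "-m", "pip", "install", "--target", str(build_dir), *requirements]
        )
    # make_archive appends ".zip" to archive_base and zips everything under root_dir
    return shutil.make_archive(str(archive_base), "zip", root_dir=str(build_dir))
```

For example, `package_dependencies(["pg8000==1.12.5"], "build", "deps")` would produce `deps.zip` for upload to S3.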

From your comment, I understand that I will be able to run non Pure Python libraries such as pandas and pymongo in Glue if I package, upload and reference the libraries for the import in my job script? From the docs as mentioned in the accepted answer I understood that I will not be able to run such libraries. Has anyone been able to get it working? – Gerrie van Wyk Jun 09 '18 at 22:33

Yes, you can use. You can even write your own python functions or modules that are required for your job and have them reference in your python libraries path. – winnervc Aug 22 '18 at 17:16

No, you can't use libraries that are built on top of C/C++ code. You can use pure Python libraries only. pandas' core code is in C, hence it can't be used in Glue. – Sandeep Fatangare Mar 21 '19 at 16:37

To install a specific version (for instance, in an AWS Glue Python shell job), go to the package's page on PyPI, for example the page for "pg8000": https://pypi.org/project/pg8000/1.12.5/#files

Then select an appropriate version, copy the link to the file, and paste it into the snippet below:

```python
import os
import site
from importlib import reload  # reload() is not a builtin in Python 3

from setuptools.command import easy_install

install_path = os.environ['GLUE_INSTALLATION']

# Install pg8000 1.12.5 from the source distribution URL copied from PyPI
easy_install.main(["--install-dir", install_path, "https://files.pythonhosted.org/packages/83/03/10902758730d5cc705c0d1dd47072b6216edc652bc2e63a078b58c0b32e6/pg8000-1.12.5.tar.gz"])

# Refresh sys.path so the freshly installed package is importable
reload(site)
```
