I have a fairly large test suite for a C++ library with close to 100% line coverage, but only 55.3% branch coverage. Skimming through the results of lcov, it seems as if most of the missed branches can be explained by C++'s many ways to throw std::bad_alloc, e.g. whenever an std::string is constructed.

I was asking myself how to improve branch coverage in this situation and thought that it would be nice to have a `new` operator that can be configured to throw std::bad_alloc after exactly as many allocations as are needed to hit each branch missed by my test suite.

I (naively) tried defining a global void* operator new (std::size_t) function which counts down a global int allowed_allocs and throws std::bad_alloc whenever 0 is reached.
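A minimal sketch of such a counting replacement might look like the following. Only allowed_allocs is named above; the `-1` sentinel meaning "never fail" and the helper `string_construction_fails` are assumptions added for illustration.

```cpp
#include <cstddef>
#include <cstdlib>
#include <new>
#include <string>

// Number of allocations that may still succeed.
// Assumption for this sketch: a negative value disables fault injection.
int allowed_allocs = -1;

// Replacement for the global allocation function.
void* operator new(std::size_t size) {
    if (allowed_allocs == 0) {
        throw std::bad_alloc();  // budget exhausted: simulate out-of-memory
    }
    if (allowed_allocs > 0) {
        --allowed_allocs;
    }
    if (size == 0) {
        size = 1;  // operator new must return a unique pointer even for size 0
    }
    if (void* p = std::malloc(size)) {
        return p;
    }
    throw std::bad_alloc();  // genuine allocation failure
}

// Matching deallocation functions, since memory now comes from std::malloc.
void operator delete(void* p) noexcept { std::free(p); }
void operator delete(void* p, std::size_t) noexcept { std::free(p); }

// Hypothetical helper: returns true if building a long std::string throws
// std::bad_alloc when no further allocation is allowed.
bool string_construction_fails() {
    std::string s;
    bool threw = false;
    allowed_allocs = 0;
    try {
        s.assign(1000, 'x');  // too long for any small-string buffer
    } catch (const std::bad_alloc&) {
        threw = true;
    }
    allowed_allocs = -1;  // disable fault injection again
    return threw;
}
```

The default `operator new[]` forwards to `operator new`, so array allocations go through the same counter without a separate replacement.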

This has several problems though:

- It is hard to get the number of `new` calls until the "first" desired `throw`. I may execute a dry run to calculate the required calls to succeed, but this does not help if several calls can fail in the same line, e.g. something like `std::to_string(some_int) + std::to_string(another_int)` where each `std::to_string`, the concatenation via `operator+` and also the initial allocation may fail.
- Even worse, my test suite (I am using Catch) uses a lot of `new` calls itself, so even if I knew how many calls my code needs, it is hard to guess how many additional calls of the test suite are necessary. (To make things worse, Catch has several "verbose" modes which create lots of outputs which again need memory...)
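To make the dry run concrete, here is a rough sketch that counts allocations only while the code under test is running, so allocations made by the test framework outside that window are ignored. All names (`counting_enabled`, `alloc_count`, `fail_at`, `count_allocations`, `throws_when_failing`, `concat`) are hypothetical. The sketch assumes single-threaded, deterministic code and that the dry run itself does not throw. It does not establish that every branch reported by lcov becomes reachable this way.

```cpp
#include <cstddef>
#include <cstdlib>
#include <new>
#include <string>

// Hypothetical bookkeeping used by the replaced operator new.
static bool counting_enabled = false;  // true only while the code under test runs
static int alloc_count = 0;            // allocations seen while counting is enabled
static int fail_at = -1;               // 0-based index of the allocation to fail; -1 = none

void* operator new(std::size_t size) {
    if (counting_enabled) {
        if (alloc_count == fail_at) {
            throw std::bad_alloc();  // injected failure
        }
        ++alloc_count;
    }
    void* p = std::malloc(size == 0 ? 1 : size);
    if (p == nullptr) {
        throw std::bad_alloc();  // genuine allocation failure
    }
    return p;
}

void operator delete(void* p) noexcept { std::free(p); }
void operator delete(void* p, std::size_t) noexcept { std::free(p); }

// Example code under test, modelled on the expression from the list above.
std::string concat(int some_int, int another_int) {
    return std::to_string(some_int) + std::to_string(another_int);
}

// Dry run: returns how many allocations f() performs on its own.
template <typename F>
int count_allocations(F f) {
    fail_at = -1;
    alloc_count = 0;
    counting_enabled = true;
    f();
    counting_enabled = false;
    return alloc_count;
}

// Runs f() with its n-th allocation (0-based) failing.
// Returns true if std::bad_alloc escaped from f().
template <typename F>
bool throws_when_failing(F f, int n) {
    fail_at = n;
    alloc_count = 0;
    counting_enabled = true;
    bool threw = false;
    try {
        f();
    } catch (const std::bad_alloc&) {
        threw = true;
    }
    counting_enabled = false;
    fail_at = -1;
    return threw;
}
```

A test could call `count_allocations` once and then loop `throws_when_failing` over every index below that count. Note that the count depends on the standard library: short strings may fit into the small-string buffer and not allocate at all.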

Do you have any idea how to improve the branch coverage?

Update 2017-10-07

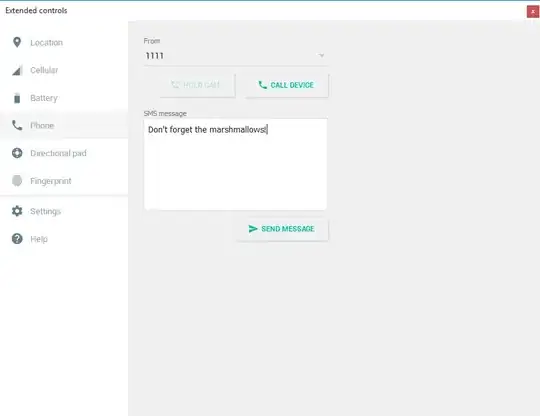

Meanwhile, I found https://stackoverflow.com/a/43726240/266378 with a link to a Python script to filter some of the branches created by exceptions from the lcov output. This brought my branch coverage to 71.5%, but the remaining unhit branches are still very strange. For instance, I have several if statements like this:

with four (?) branches of which one remained unhit (reference_token is a std::string).

Does anyone have an idea what these branches mean and how they can be hit?