I am really new to OpenCV and a beginner in Python.

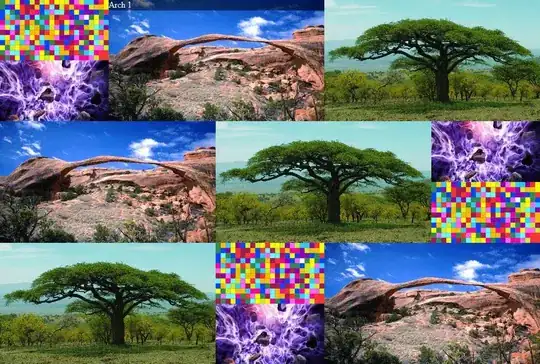

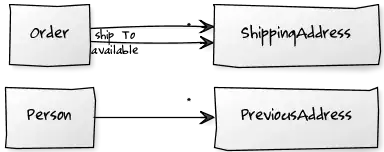

I have this image:

I want to somehow apply proper thresholding to keep nothing but the 6 digits.

The bigger picture is that I intend to perform manual OCR on the image, one digit at a time, using the k-nearest neighbours algorithm (kNearest.findNearest).

The problem is that I cannot clean up the digits sufficiently, especially the '7' digit, which has a bluish watermark passing through it.

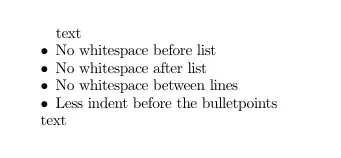

The steps I have tried so far are the following:

First, I read the image from disk:

    import sys
    import cv2

    # IMREAD_UNCHANGED is -1
    image = cv2.imread(sys.argv[1], cv2.IMREAD_UNCHANGED)

Then I keep only the blue channel to get rid of the blue watermark around the digit '7', which effectively converts the image to a single channel:

    # Opened with -1 (IMREAD_UNCHANGED), i.e. loaded as is,
    # so blue is the first channel in BGR order
    image = image[:, :, 0]
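To illustrate my reasoning for this step, here is a small plain-Python sketch (no OpenCV needed). The BGR values are made up for illustration and are not measured from my image; the grayscale weights are the standard 0.299 R + 0.587 G + 0.114 B luminance weights. The idea is that a bluish pixel is bright in the blue channel, so it ends up much closer to the white background there than it would in a normal grayscale conversion.

```python
# Hypothetical BGR pixel values, chosen only for illustration.
pixels = {
    "digit": (30, 30, 30),          # dark ink
    "watermark": (200, 120, 90),    # bluish: high B, lower G and R
    "background": (240, 240, 240),  # near-white paper
}


def blue(bgr):
    """Return the blue component of a BGR pixel (index 0)."""
    return bgr[0]


def gray(bgr):
    """Return the luminance of a BGR pixel using the standard weights."""
    b, g, r = bgr
    return round(0.114 * b + 0.587 * g + 0.299 * r)


for name, bgr in pixels.items():
    print(f"{name:10s} blue={blue(bgr):3d} gray={gray(bgr):3d}")
```

With these made-up values the watermark sits 40 levels below the background in the blue channel, but 120 levels below it in grayscale. A real watermark is presumably not this uniform, which may be why some of it survives.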

Then I multiply the pixel values by 1.5 to increase the contrast between the digits and the background:

    image = cv2.multiply(image, 1.5)
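As far as I understand, on an 8-bit image this multiplication saturates at 255, so every pixel above 170 becomes pure white while darker pixels are only stretched. A plain-Python sketch of that arithmetic, with made-up sample intensities (`saturating_scale` is a hypothetical helper, not an OpenCV function):

```python
def saturating_scale(value, factor=1.5):
    """Scale an 8-bit intensity, round it, and clip the result to [0, 255]."""
    return max(0, min(255, round(value * factor)))


# Made-up intensities: dark ink, mid-grey, and several bright values.
samples = [40, 100, 170, 171, 200, 255]
scaled = [saturating_scale(v) for v in samples]
print(scaled)  # [60, 150, 255, 255, 255, 255]
```

So the step only helps with watermark pixels that are already brighter than about 170 in the blue channel; darker watermark pixels stay grey and remain candidates for the thresholding step.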

Finally, I apply binary + Otsu thresholding:

    _, thressed1 = cv2.threshold(image, 0, 255, cv2.THRESH_BINARY + cv2.THRESH_OTSU)
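For reference, my understanding is that Otsu's method picks the single global threshold that maximizes the between-class variance of the histogram. Below is a rough plain-Python sketch of that idea. It is not the OpenCV implementation, `otsu_threshold` is a hypothetical helper, and the pixel values are made up.

```python
def otsu_threshold(pixels):
    """Return the threshold t maximizing between-class variance.

    Pixels <= t form one class and pixels > t form the other.
    """
    hist = [0] * 256
    for p in pixels:
        hist[p] += 1

    total = len(pixels)
    sum_all = sum(i * h for i, h in enumerate(hist))

    best_t, best_var = 0, -1.0
    w0 = 0    # number of pixels in the dark class so far
    sum0 = 0  # sum of intensities in the dark class so far
    for t in range(256):
        w0 += hist[t]
        sum0 += t * hist[t]
        w1 = total - w0
        if w0 == 0:
            continue
        if w1 == 0:
            break
        mean0 = sum0 / w0
        mean1 = (sum_all - sum0) / w1
        between_var = w0 * w1 * (mean0 - mean1) ** 2
        if between_var > best_var:
            best_t, best_var = t, between_var
    return best_t


# Made-up intensities: dark digits, a mid-grey watermark, bright background.
pixels = [20, 30, 30, 40, 140, 150, 230, 240, 240, 250]
t = otsu_threshold(pixels)
binary = [255 if p > t else 0 for p in pixels]
print(t, binary)
```

In this toy data the mid-grey values land on the white side. Because the threshold is global, watermark pixels that come out darker than it in the real image would be kept together with the digits, which may be what is happening around the '7'.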

As you can see, the end result is pretty good except for the digit '7', which has kept a lot of noise.

How can I improve the end result? Please include an example result image where possible; it is easier to understand than code snippets alone.