I was benchmarking a function and saw that some iterations are slower than others.

After some tests I tried to benchmark two contiguous measurements and I still got some weird results.

The code is on wandbox.

For me the important part is:

using clock = std::chrono::steady_clock;

// ...

for (int i = 0; i < statSize; i++)

{

auto t1 = clock::now();

auto t2 = clock::now();

}

The two calls to clock::now() are not optimized away, as we can see on godbolt:

call std::chrono::_V2::steady_clock::now()

mov r12, rax

call std::chrono::_V2::steady_clock::now()

The code was compiled with:

g++ bench.cpp -Wall -Wextra -std=c++11 -O3

and gcc version 6.3.0 20170516 (Debian 6.3.0-18+deb9u1) on an Intel® Xeon® W-2195 Processor.

I was the only user on the machine, and I tried running with and without high priority (nice or chrt); the result was the same.

The result I got with 100 000 000 iterations was:

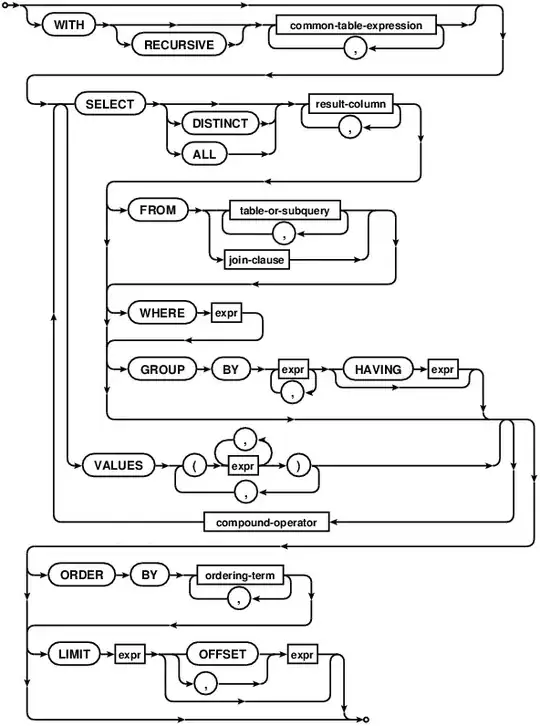

The Y-axis is in nanoseconds, it's the result of the line

std::chrono::duration_cast<std::chrono::nanoseconds>(t2 - t1).count()

These 4 lines make me think of no cache miss and L1/L2/L3 cache misses (even if the “L3 cache misses” line seems too close to the L2 line).
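To check whether the plot really shows a few discrete bands rather than a continuous spread, the measured deltas could be tallied per value. This is only a minimal sketch: `tally` is a hypothetical helper, not part of the original program, and it assumes the deltas are stored as 16-bit nanosecond counts as in the full code.

```cpp
#include <cstddef>
#include <cstdint>
#include <map>
#include <vector>

// Hypothetical helper (not in the original program): count how often each
// measured delta, in nanoseconds, occurs.
std::map<std::uint16_t, std::size_t> tally(const std::vector<std::uint16_t>& deltas)
{
    std::map<std::uint16_t, std::size_t> counts;
    for (std::uint16_t d : deltas)
        ++counts[d];  // operator[] value-initializes a missing entry to 0
    return counts;
}
```

Printing each (value, count) pair gives a small table; if the bands are real, a handful of values should carry almost all of the counts.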

I am not sure why there would be cache misses. Maybe it is the storage of the result, but that is not in the measured code.

I tried running the program 10 000 times with a loop of 1500 iterations, because the L1 cache of this processor is:

lscpu | grep L1

L1d cache: 32K

L1i cache: 32K

And 1500 × 16 bits = 24 000 bits = 3 000 bytes, which is far less than 32K (bytes), so there shouldn’t be any cache misses.
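The size check can be spelled out at compile time. A minimal sketch, assuming a 32 KiB L1d (the lscpu figure) and 16-bit samples; `l1d_bytes` and `buffer_bytes` are names made up for this illustration.

```cpp
#include <cstddef>
#include <cstdint>

// Assumption: 32 KiB of L1 data cache, as reported by lscpu.
constexpr std::size_t l1d_bytes = 32 * 1024;

// Size in bytes of a buffer holding `samples` 16-bit measurements.
constexpr std::size_t buffer_bytes(std::size_t samples)
{
    return samples * sizeof(std::uint16_t);
}

static_assert(buffer_bytes(1500) == 3000, "1500 samples take 3000 bytes");
static_assert(buffer_bytes(1500) < l1d_bytes, "the buffer fits in L1d");
```

So the result buffer uses roughly a tenth of the L1d, which makes it an unlikely source of misses on its own.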

And the results:

I still have my 4 lines (and some noise).

So if it’s really a cache miss, I have no idea why it is happening.

I don’t know if it’s useful for you, but I ran:

sudo perf stat -e cache-misses,L1-dcache-load-misses,L1-dcache-load ./a.out 1000

With the values 1 000 / 10 000 / 100 000 / 1 000 000.

perf reported L1-dcache-load-misses at between 4.30% and 4.70% of all L1-dcache hits, which seems pretty decent to me.

So the questions are:

- What is the cause of these slowdowns?

- How can I produce a quality benchmark of a function when I can’t get a constant time for a no-op?

PS: I don’t know if I am missing useful information / flags, feel free to ask!

How to reproduce:

The code:

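#include <chrono>
#include <cstdint>   // uint16_t
#include <cstdlib>   // std::atoi
#include <iostream>
#include <vector>

int main(int argc, char **argv)
{
    int statSize = 1000;
    using clock = std::chrono::steady_clock;

    // Optional first argument: number of measurements to take.
    if (argc == 2)
    {
        statSize = std::atoi(argv[1]);
    }

    // Reserve up front so push_back never reallocates inside the loop.
    // Note: each delta is narrowed to 16 bits, so values above 65535 ns wrap.
    std::vector<uint16_t> temps;
    temps.reserve(statSize);

    for (int i = 0; i < statSize; i++)
    {
        // Two back-to-back clock reads; their difference is what gets plotted.
        auto t1 = clock::now();
        auto t2 = clock::now();

        temps.push_back(
            std::chrono::duration_cast<std::chrono::nanoseconds>(t2 - t1).count());
    }

    for (auto t : temps)
        std::cout << (int)t << std::endl;

    return (0);
}

Build: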

g++ bench.cpp -Wall -Wextra -std=c++11 -O3

Generate output (sudo needed):

In this case I run the program 10 000 times. Each run takes 100 measurements, and I remove the first one, which is always about 5 times slower:

for i in {1..10000} ; do sudo nice -n -17 ./a.out 100 | tail -n 99 >> fast_1_000_000_uint16_100 ; done

Generate graph:

cat fast_1_000_000_uint16_100 | gnuplot -p -e "plot '<cat'"

The result I have on my machine:

Where I am after the answer of Zulan and all the comments

The current_clocksource is set to tsc and no clocksource switch shows up in dmesg. Command used:

dmesg -T | grep tsc
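The active clocksource can also be read directly instead of being inferred from dmesg. A minimal sketch, assuming the usual Linux sysfs location; `first_line` and `current_clocksource` are hypothetical helpers, and the path may differ on other systems.

```cpp
#include <fstream>
#include <string>

// Hypothetical helper: return the first line of a text file, or an empty
// string when the file cannot be opened.
std::string first_line(const std::string& path)
{
    std::ifstream in(path);
    std::string line;
    if (in)
        std::getline(in, line);
    return line;
}

// Assumption: the usual Linux sysfs location of the active clocksource.
std::string current_clocksource()
{
    return first_line(
        "/sys/devices/system/clocksource/clocksource0/current_clocksource");
}
```

On the machine described here the expected value would be tsc; an empty string only means the file could not be read.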

I use this script to disable Hyper-Threading (HT), then

grep -c proc /proc/cpuinfo

=> 18

Subtract 1 from the last result to obtain the last available core:

=> 17

Edit /etc/default/grub and add isolcpus=(last result) to GRUB_CMDLINE_LINUX:

GRUB_CMDLINE_LINUX="isolcpus=17"

Finally:

sudo update-grub

reboot

// reexecute the script

Now I can use:

taskset -c 17 ./a.out XXXX
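The pinning done by taskset can also be requested from inside the program. A minimal sketch, assuming Linux with glibc (g++ defines _GNU_SOURCE by default, which exposes the CPU_* macros); `pin_to_cpu` is a hypothetical helper, not part of the original code.

```cpp
#include <sched.h>  // sched_setaffinity, cpu_set_t (Linux/glibc specific)

// Hypothetical helper: pin the calling thread to a single CPU.
// Returns true on success, false if the index is out of range or the
// kernel rejects the request (for example when the CPU does not exist).
bool pin_to_cpu(int cpu)
{
    if (cpu < 0 || cpu >= CPU_SETSIZE)
        return false;
    cpu_set_t set;
    CPU_ZERO(&set);
    CPU_SET(cpu, &set);
    return sched_setaffinity(0, sizeof(set), &set) == 0;  // pid 0 = caller
}
```

Calling pin_to_cpu(17) at the top of main would target the isolated core, with the return value checked before starting the measurements.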

So I run 10 000 times a loop of 100 iterations.

for i in {1..10000} ; do sudo /usr/bin/time -v taskset -c 17 ./a.out 100 > ./core17/run_$i 2>&1 ; done
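As far as I know, the "Involuntary context switches" figure printed by /usr/bin/time -v corresponds to the ru_nivcsw field returned by getrusage. A minimal sketch of reading that counter from inside the program, so it could be sampled right before and right after the measurement loop; `involuntary_switches` is a hypothetical helper, and the field is assumed to be populated as it is on Linux.

```cpp
#include <sys/resource.h>  // getrusage, struct rusage

// Hypothetical helper: number of involuntary context switches of this
// process so far, or -1 if getrusage fails.
long involuntary_switches()
{
    struct rusage usage;
    if (getrusage(RUSAGE_SELF, &usage) != 0)
        return -1;
    return usage.ru_nivcsw;
}
```

If the difference between the two samples is 0, no preemption happened during the loop itself, which is a narrower statement than the whole-process figure.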

Check whether there are any involuntary context switches:

grep -L "Involuntary context switches: 0" ./core17/* | wc -l

=> 0

There are none, good. Let's plot:

for i in {1..10000} ; do cat ./core17/run_$i | head -n 99 >> ./no_switch_taskset ; done

cat no_switch_taskset | gnuplot -p -e "plot '<cat'"

Result :

There are still 22 measurements greater than 1000 (when most values are around 20) that I don't understand.

Next step, TBD

Do the

sudo nice -n -17 perf record...

part of Zulan's answer.