(My question is related to computer architecture and performance understanding. Did not find a relevant forum, so post it here as a general question.)

I have a C program which accesses memory words that are located X bytes apart in virtual address space. For instance, for (int i=0;<some stop condition>;i+=X){array[i]=4;}.

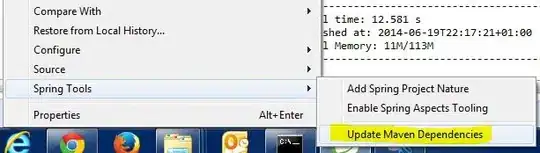

I measure the execution time with a varying value of X. Interestingly, when X is the power of 2 and is about page size, e.g., X=1024,2048,4096,8192..., I get to huge performance slowdown. But on all other values of X, like 1023 and 1025, there is no slowdown. The performance results are attached in the figure below.

I test my program on several personal machines, all are running Linux with x86_64 on Intel CPU.

What could be the cause of this slowdown? We have tried row buffer in DRAM, L3 cache, etc. which do not seem to make sense...

Update (July 11)

We did a little test here by adding NOP instructions to the original code. And the slowdown is still there. This sorta veto the 4k alias. The cause by conflict cache misses is more likely the case here.