I'm training a neural network with PyTorch to predict the expected price for the Boston housing dataset. The network looks like this:

    from sklearn.datasets import load_boston
    from torch.utils.data.dataset import Dataset
    from torch.utils.data import DataLoader
    import torch.nn.functional as F
    import torch
    import torch.nn as nn
    import torch.optim as optim

    class Net(nn.Module):
        def __init__(self):
            super().__init__()
            self.fc1 = nn.Linear(13, 128)
            self.fc2 = nn.Linear(128, 64)
            self.fc3 = nn.Linear(64, 32)
            self.fc4 = nn.Linear(32, 16)
            self.fc5 = nn.Linear(16, 1)

        def forward(self, x):
            x = self.fc1(x)
            x = self.fc2(x)
            x = F.relu(x)
            x = self.fc3(x)
            x = F.relu(x)
            x = self.fc4(x)
            x = F.relu(x)
            return self.fc5(x)

And the data loader:

    class BostonData(Dataset):
        __xs = []
        __ys = []

        def __init__(self, train = True):
            df = load_boston()
            index = int(len(df["data"]) * 0.7)
            if train:
                self.__xs = df["data"][0:index]
                self.__ys = df["target"][0:index]
            else:
                self.__xs = df["data"][index:]
                self.__ys = df["target"][index:]

        def __getitem__(self, index):
            return self.__xs[index], self.__ys[index]

        def __len__(self):
            return len(self.__xs)

In my first attempt I didn't add the ReLU units. After a little research I saw that adding them is common practice, but it didn't work out for me.

Here is the training code:

    dset_train = BostonData(train = True)
    dset_test = BostonData(train = False)
    train_loader = DataLoader(dset_train, batch_size=30, shuffle=True)
    test_loader = DataLoader(dset_train, batch_size=30, shuffle=True)

    optimizer = optim.Adam(net.parameters(), lr = 0.001)
    criterion = torch.nn.MSELoss()
    EPOCHS = 10000

    lloss = []

    for epoch in range(EPOCHS):
        for trainbatch in train_loader:
            X,y = trainbatch
            net.zero_grad()
            output = net(X.float())
            loss = criterion(output, y)
            loss.backward()
            optimizer.step()
        lloss.append(loss)
        print(loss)

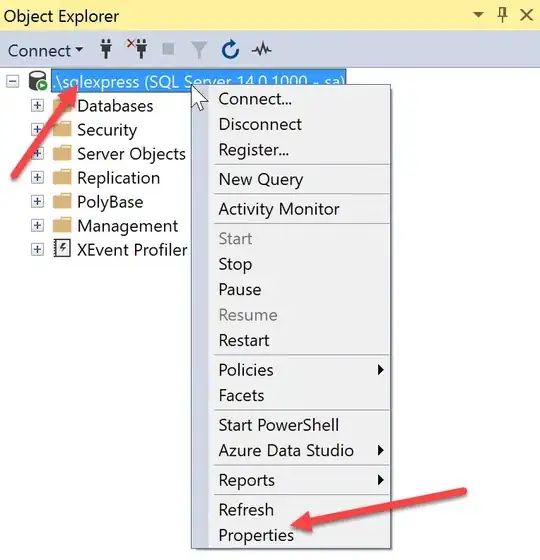

After 10k epochs, the loss graph looks like the following

where I don't see any clear decrease.

I don't know if I'm making a mistake with `torch.nn.MSELoss()`, the optimizer, or maybe the network topology, so any help will be appreciated.
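One thing that may be worth checking with `torch.nn.MSELoss()` is whether the prediction and target tensors have the same shape. The sketch below is not taken from the training run above; it uses hypothetical stand-in tensors (`output` with shape `(30, 1)`, matching the final `nn.Linear(16, 1)` layer, and `y` with shape `(30,)`, matching a batch of scalar targets) to show how an elementwise difference between them broadcasts.

```python
import torch

# Hypothetical stand-ins for one batch (not real data from the question):
# the network's last layer yields shape (30, 1), the targets arrive as (30,).
output = torch.zeros(30, 1)
y = torch.arange(30, dtype=torch.float32)

# Elementwise squared error with mismatched shapes: broadcasting pairs every
# prediction with every target, giving a (30, 30) matrix.
mismatched = (output - y) ** 2
mismatched_shape = tuple(mismatched.shape)

# Reshaping the target to (30, 1) pairs each prediction with its own target.
aligned = (output - y.view(-1, 1)) ** 2
aligned_shape = tuple(aligned.shape)

print(mismatched_shape, aligned_shape)
```

Whether this matters for the loss curve above is an assumption; printing `output.shape` and `y.shape` inside the training loop would confirm or rule it out.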

Edit:

Changing the learning rate and normalizing the data didn't work for me. I added the line `self.__xs = (self.__xs - self.__xs.mean()) / self.__xs.std()` and changed the learning rate to `lr = 0.01`. The loss plot is very similar to the first one.
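For reference, the normalization line quoted above uses a single mean and standard deviation computed over the whole array, which is not the same as standardizing each feature column separately. The following is a minimal sketch of the difference, using a small made-up array `xs` as a stand-in for `df["data"]`; the values are illustrative only.

```python
import numpy as np

# Hypothetical stand-in for df["data"]: 5 samples, 2 features on different scales.
xs = np.array([[1.0, 100.0],
               [2.0, 200.0],
               [3.0, 300.0],
               [4.0, 400.0],
               [5.0, 500.0]])

# Global statistics, as in the quoted line: one mean and one std for the whole array.
global_norm = (xs - xs.mean()) / xs.std()

# Per-feature statistics: one mean and one std per column (axis=0).
feature_norm = (xs - xs.mean(axis=0)) / xs.std(axis=0)

# Column means after each approach; only the per-feature version centers every column.
col_means_global = global_norm.mean(axis=0)
col_means_feature = feature_norm.mean(axis=0)
print(col_means_global, col_means_feature)
```

With global statistics the columns keep their relative scales, so features with large raw values still dominate the input.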

Same plot for lr = 0.01 and normalizing after 1000 epochs: