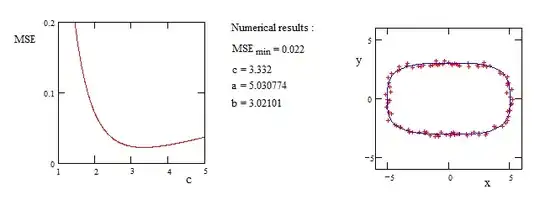

I'm trying to find the best-fit parameters (a, b, and c) of the following equation (a general formula that covers a circle, an ellipse, or a rhombus):

(|x|/a)^c + (|y|/b)^c = 1

for two arrays of data (x and y) in Python. My main objective is to estimate the best values of a, b, and c from my x and y variables. I am using the `curve_fit` function from SciPy; here is my code with demo x and y data.
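For reference, here is a minimal sketch of how the equation above behaves for different exponents. The helper names `superellipse_lhs` and `superellipse_points` are made up for illustration and are not part of my fitting code; the parametrization is the usual one for this family of curves. It generates noise-free points and checks that the left-hand side equals 1, with c = 1 giving a rhombus and c = 2 an axis-aligned ellipse.

```python
import numpy as np


def superellipse_lhs(x, y, a, b, c):
    """Left-hand side of (|x|/a)^c + (|y|/b)^c; equals 1 on the curve."""
    return (np.abs(x) / a) ** c + (np.abs(y) / b) ** c


def superellipse_points(a, b, c, n=200):
    """Noise-free points on the curve (hypothetical helper, axis-aligned only)."""
    t = np.linspace(0.0, 2.0 * np.pi, n)
    # |x|/a = |cos t|^(2/c), so (|x|/a)^c = cos^2 t, and likewise for y.
    x = a * np.sign(np.cos(t)) * np.abs(np.cos(t)) ** (2.0 / c)
    y = b * np.sign(np.sin(t)) * np.abs(np.sin(t)) ** (2.0 / c)
    return x, y


for c in (1.0, 2.0):  # rhombus, then ellipse
    x, y = superellipse_points(a=5.0, b=3.0, c=c)
    on_curve = np.allclose(superellipse_lhs(x, y, 5.0, 3.0, c), 1.0)
    print(f"c={c}: all points satisfy the equation -> {on_curve}")
```

Note that this form only describes shapes whose axes line up with the x and y axes.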

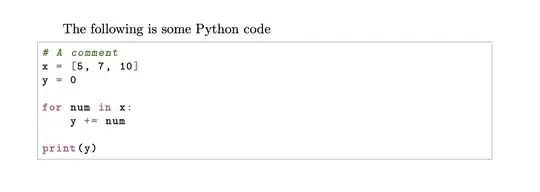

    import numpy as np
    import matplotlib.pyplot as plt
    from scipy.optimize import curve_fit

    alpha = 5
    beta = 3
    N = 500
    DIM = 2

    # Demo data: a noisy unit circle mapped through a random integer matrix B
    np.random.seed(2)
    theta = np.random.uniform(0, 2*np.pi, (N, 1))
    eps_noise = 0.2 * np.random.normal(size=[N, 1])
    circle = np.hstack([np.cos(theta), np.sin(theta)])
    B = np.random.randint(-3, 3, (DIM, DIM))
    noisy_ellipse = circle.dot(B) + eps_noise

    X = noisy_ellipse[:, 0:1]
    Y = noisy_ellipse[:, 1:]

    def func(xdata, a, b, c):
        # Left-hand side of (|x|/a)^c + (|y|/b)^c; the fit target is 1
        x, y = xdata
        return (np.abs(x)/a)**c + (np.abs(y)/b)**c

    # curve_fit expects the independent data as one array of shape (2, N)
    xdata = np.transpose(np.hstack((X, Y)))
    ydata = np.ones((xdata.shape[1],))

    # Bounds: 0 <= a, b <= 50 and 1 <= c <= 2
    pp, pcov = curve_fit(func, xdata, ydata, maxfev=1000000, bounds=((0, 0, 1), (50, 50, 2)))

    # Plot the data and the fitted curve as the level-1 contour of func
    plt.scatter(X, Y, label='Data Points')
    x_coord = np.linspace(-5, 5, 300)
    y_coord = np.linspace(-5, 5, 300)
    X_coord, Y_coord = np.meshgrid(x_coord, y_coord)
    Z_coord = func((X_coord, Y_coord), pp[0], pp[1], pp[2])
    plt.contour(X_coord, Y_coord, Z_coord, levels=[1], colors='g', linewidths=2)
    plt.legend()
    plt.xlabel('X')
    plt.ylabel('Y')
    plt.show()

With this code, the fitted parameters are [4.69949891, 3.65493859, 1.0] for a, b, and c, respectively.

The problem is that the fitted value of c usually ends up at the lower edge of its bounds, whereas for this demo data c should be very close to 2, since the data represent an ellipse.
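To make "at the edge of its bounds" concrete, here is a small sketch that flags which fitted parameters sit on a bound. The helper `params_at_bounds` and its tolerance are hypothetical, introduced only for this check; the parameter values and bounds are the ones quoted above.

```python
import numpy as np


def params_at_bounds(params, lower, upper, atol=1e-8):
    """Return boolean masks marking parameters that sit on a bound (hypothetical helper)."""
    params = np.asarray(params, dtype=float)
    at_lower = np.isclose(params, lower, rtol=0.0, atol=atol)
    at_upper = np.isclose(params, upper, rtol=0.0, atol=atol)
    return at_lower, at_upper


# Fitted values reported above, with the same bounds passed to curve_fit
pp = [4.69949891, 3.65493859, 1.0]
lower, upper = (0, 0, 1), (50, 50, 2)

at_lower, at_upper = params_at_bounds(pp, lower, upper)
for name, lo_hit, hi_hit in zip("abc", at_lower, at_upper):
    print(f"{name}: at lower bound={lo_hit}, at upper bound={hi_hit}")
```

For the values above, only c is flagged, and it is flagged at its lower bound.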

Any help and suggestions for solving this issue are appreciated.