I'm trying to read an XML file in Azure Databricks using the code below:

df = spark.read.format('com.databricks.spark.xml').options(rowTag='book').load('/FileStore/tables/sample.xml')
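To make clear what I expect from `rowTag='book'`: as I understand it, each `<book>` element should become one row of the DataFrame. Below is a minimal plain-Python sketch of that expectation, with no Spark involved. The XML content and the helper `rows_for_tag` are hypothetical stand-ins, since the real contents of `sample.xml` aren't shown here, and this is not how spark-xml itself is implemented.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample content; the actual sample.xml is not shown in this question.
SAMPLE_XML = """<catalog>
  <book id="bk101"><author>Author A</author><title>Title A</title></book>
  <book id="bk102"><author>Author B</author><title>Title B</title></book>
</catalog>"""


def rows_for_tag(xml_text, row_tag):
    """Return one dict per <row_tag> element: its attributes plus child element text."""
    root = ET.fromstring(xml_text)
    rows = []
    for elem in root.iter(row_tag):
        row = dict(elem.attrib)        # start with the element's attributes
        for child in elem:             # add each direct child as a column
            row[child.tag] = child.text
        rows.append(row)
    return rows


rows = rows_for_tag(SAMPLE_XML, "book")
for r in rows:
    print(r)
```

With this stand-in input, I would expect two rows, one per `<book>` element.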

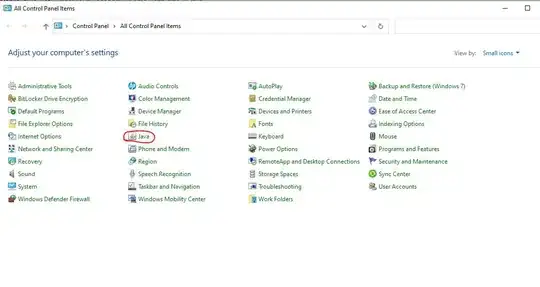

I installed the spark-xml:0.1.1-s_2.10 package.

But I'm getting the error below:

Py4JJavaError: An error occurred while calling o285.load.

: java.lang.ClassNotFoundException: Failed to find data source: com.databricks.spark.xml. Please find packages at http://spark.apache.org/third-party-projects.html

at org.apache.spark.sql.execution.datasources.DataSource$.lookupDataSource(DataSource.scala:733)

at org.apache.spark.sql.DataFrameReader.load(DataFrameReader.scala:276)

at org.apache.spark.sql.DataFrameReader.load(DataFrameReader.scala:214)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at py4j.reflection.MethodInvoker.invoke(MethodInvoker.java:244)

at py4j.reflection.ReflectionEngine.invoke(ReflectionEngine.java:380)

at py4j.Gateway.invoke(Gateway.java:295)

at py4j.commands.AbstractCommand.invokeMethod(AbstractCommand.java:132)

at py4j.commands.CallCommand.execute(CallCommand.java:79)

at py4j.GatewayConnection.run(GatewayConnection.java:251)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.lang.ClassNotFoundException: com.databricks.spark.xml.DefaultSource

at java.net.URLClassLoader.findClass(URLClassLoader.java:382)

at java.lang.ClassLoader.loadClass(ClassLoader.java:418)

at java.lang.ClassLoader.loadClass(ClassLoader.java:351)

at org.apache.spark.sql.execution.datasources.DataSource$$anonfun$23$$anonfun$apply$14.apply(DataSource.scala:710)

at org.apache.spark.sql.execution.datasources.DataSource$$anonfun$23$$anonfun$apply$14.apply(DataSource.scala:710)

at scala.util.Try$.apply(Try.scala:192)

at org.apache.spark.sql.execution.datasources.DataSource$$anonfun$23.apply(DataSource.scala:710)

at org.apache.spark.sql.execution.datasources.DataSource$$anonfun$23.apply(DataSource.scala:710)

at scala.util.Try.orElse(Try.scala:84)

at org.apache.spark.sql.execution.datasources.DataSource$.lookupDataSource(DataSource.scala:710)

... 13 more