I have a few questions about interpreting the performance of certain optimizers on MNIST using a Lenet5 network and what does the validation loss/accuracy vs training loss/accuracy graphs tell us exactly. So everything is done in Keras using a standard LeNet5 network and it is ran for 15 epochs with a batch size of 128.

There are two graphs, train acc vs val acc and train loss vs val loss. I made 4 graphs because I ran it twice, once with validation_split = 0.1 and once with validation_data = (x_test, y_test) in model.fit parameters. Specifically the difference is shown here:

train = model.fit(x_train, y_train, epochs=15, batch_size=128, validation_data=(x_test,y_test), verbose=1)

train = model.fit(x_train, y_train, epochs=15, batch_size=128, validation_split=0.1, verbose=1)

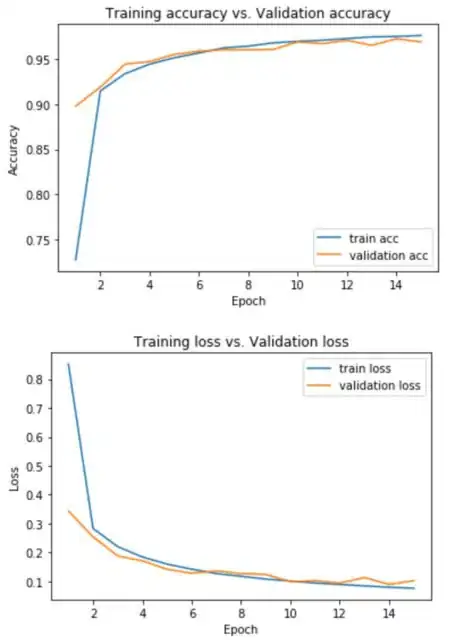

These are the graphs I produced:

using validation_data=(x_test, y_test):

using validation_split=0.1:

So my two questions are:

1.) How do I interpret both the train acc vs val acc and train loss vs val acc graphs? Like what does it tell me exactly and why do different optimizers have different performances (i.e the graphs are different as well).

2.) Why do the graphs change when I use validation_split instead? Which one would be a better choice to use?