I am trying to read a subset of a dataset using a pushdown predicate. My input dataset consists of 1.2 TB in 43,436 Parquet files stored on S3. With the pushdown predicate, I expect to read only 1/4 of the data.

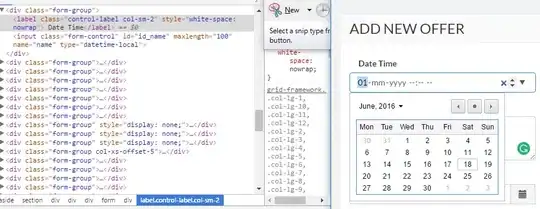

Looking at the Spark UI, I can see that the job does read only 1/4 of the data (300 GB). However, the first stage still has 43,436 partitions, and only 1/4 of them contain data; the other 3/4 are empty (see the median input size in the attached screenshots).
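One plausible way to picture why so many tasks are empty, assuming the predicate is on an ordinary (non-partition) column: Parquet pushdown filters are applied inside each read task against row-group min/max statistics, so a task is still scheduled for every file, but it skips row groups whose statistics rule out a match. The following is a simplified pure-Python model, not Spark code; `RowGroup`, `may_match_equals` and `bytes_read_per_task` are hypothetical names, and it assumes one task per file and an equality predicate.

```python
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class RowGroup:
    # Hypothetical stand-in for a Parquet row group's column statistics.
    min_value: int
    max_value: int
    size_bytes: int


def may_match_equals(rg: RowGroup, value: int) -> bool:
    # Statistics can only prove absence: if value lies outside [min, max],
    # the row group cannot contain it and can be skipped.
    return rg.min_value <= value <= rg.max_value


def bytes_read_per_task(files: list[list[RowGroup]], value: int) -> list[int]:
    # Simplification: one task per file. Each task reads only the row groups
    # whose statistics do not rule out the predicate `col == value`.
    return [
        sum(rg.size_bytes for rg in row_groups if may_match_equals(rg, value))
        for row_groups in files
    ]


if __name__ == "__main__":
    # Four files, each covering a disjoint value range (assumed data layout).
    files = [
        [RowGroup(0, 9, 100)],
        [RowGroup(10, 19, 100)],
        [RowGroup(20, 29, 100)],
        [RowGroup(30, 39, 100)],
    ]
    per_task = bytes_read_per_task(files, value=12)
    print(per_task, statistics.median(per_task))  # prints [0, 100, 0, 0] 0.0
```

In this toy layout only one task does real work, so the median input per task is 0, which is the same pattern as in the screenshot. Whether the real data is clustered like this is an assumption.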

I was expecting Spark to create partitions only for the non-empty ones. Reading the whole dataset with the pushdown predicate is about 20% slower than directly reading the prefiltered dataset (the same 1/4 of the data, written out by another job). I suspect this overhead is caused by the huge number of empty partitions/tasks in my first stage, so I have two questions:
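A rough model of why the partition count does not shrink: as far as I understand Spark 3.x file-source planning (`FilePartition.maxSplitBytes` and `FilePartition.getFilePartitions`), splits are computed on the driver from the listed file sizes only, using `spark.sql.files.maxPartitionBytes` (default 128 MB) and `spark.sql.files.openCostInBytes` (default 4 MB). Filters on partition columns remove files before this step; pushed data filters do not. The Python below is a simplified sketch of that packing written from memory, not Spark's actual code; the function names are hypothetical and exact behavior varies across versions and readers.

```python
MB = 1024 * 1024


def max_split_bytes(file_sizes, max_partition_bytes=128 * MB,
                    open_cost_in_bytes=4 * MB, min_partition_num=8):
    # Every file counts its size plus a fixed "open cost".
    # min_partition_num stands in for spark.sql.files.minPartitionNum or the
    # default parallelism (assumption: value chosen for illustration).
    total_bytes = sum(size + open_cost_in_bytes for size in file_sizes)
    bytes_per_core = total_bytes // min_partition_num
    return min(max_partition_bytes, max(open_cost_in_bytes, bytes_per_core))


def plan_file_partitions(file_sizes, max_split, open_cost_in_bytes=4 * MB):
    # Split each (splittable) file into chunks of at most max_split bytes.
    chunks = []
    for size in file_sizes:
        offset = 0
        while offset < size:
            chunks.append(min(max_split, size - offset))
            offset += max_split
    # Largest chunks first, then greedily pack them into partitions.
    # Note: no predicate appears anywhere in this planning step.
    chunks.sort(reverse=True)
    partitions, current, current_size = [], [], 0
    for chunk in chunks:
        if current and current_size + chunk > max_split:
            partitions.append(current)
            current, current_size = [], 0
        current.append(chunk)
        current_size += chunk + open_cost_in_bytes
    if current:
        partitions.append(current)
    return partitions


if __name__ == "__main__":
    sizes = [30 * MB] * 8
    for n in (8, 2):
        split = max_split_bytes(sizes, min_partition_num=n)
        parts = plan_file_partitions(sizes, split)
        print(n, split // MB, [len(p) for p in parts])
```

With eight 30 MB files, a minimum partition count of 8 gives one file per partition, while 2 packs them 3/3/2. In both cases the predicate plays no part, so files whose row groups are all skipped at read time still get their own, possibly empty, tasks.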

- Is there any workaround to avoid these empty partitions?

- Could anything else be responsible for the overhead? For example, is evaluating the pushdown filter inherently somewhat slow?

Thank you in advance