Note:

If you end up here, you might want to take a look at shaka-player and the accompanying shaka-streamer. Use it. Don't implement this yourself unless you really have to.

I have been trying for quite some time now to play an audio track in Chrome, Firefox, Safari, etc., but I keep hitting brick walls. My current problem is that I cannot seek within a fragmented MP4 (or MP3).

At the moment I convert audio files such as MP3 to fragmented MP4 (fMP4) and send them to the client chunk by chunk. I define a CHUNK_DURATION_SEC (chunk duration in seconds) and compute a chunk size like this:

chunksTotal = Math.ceil(this.track.duration / CHUNK_DURATION_SEC);

chunkSize = Math.ceil(this.track.fileSize / this.chunksTotal);

With this I partition the audio file and can fetch it in its entirety by advancing chunkSize bytes for each chunk:

-----------------------------------------

| chunk 1 | chunk 2 | ... | chunk n |

-----------------------------------------
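To make the partitioning concrete, here is a minimal sketch of how the inclusive byte range for each chunk could be derived from chunkSize. The helper name chunkByteRange and the example numbers are hypothetical and not part of my actual code; unlike the code further down, this sketch also clamps the last range to the file size, which is an assumption about what the server expects.

```typescript
// Hypothetical helper (not in the original code): returns the inclusive
// byte range for a zero-based chunk index.
function chunkByteRange(
  chunkIndex: number,
  chunkSize: number,
  fileSize: number,
): { start: number; end: number } {
  const start = chunkIndex * chunkSize;
  // Clamp so the last chunk does not run past the end of the file.
  const end = Math.min(start + chunkSize, fileSize) - 1;
  return { start, end };
}

// Made-up example values: a 65 s track of 1001 bytes, 20 s chunks.
const exampleChunkDurationSec = 20;
const exampleChunksTotal = Math.ceil(65 / exampleChunkDurationSec); // 4
const exampleChunkSize = Math.ceil(1001 / exampleChunksTotal); // 251

const exampleFirst = chunkByteRange(0, exampleChunkSize, 1001);
// exampleFirst is { start: 0, end: 250 }
const exampleLast = chunkByteRange(exampleChunksTotal - 1, exampleChunkSize, 1001);
// exampleLast is { start: 753, end: 1000 }
```

Note that these ranges are purely byte-based, so a chunk boundary will generally not coincide with an fMP4 fragment boundary.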

How audio files are converted to fMP4

ffmpeg -i input.mp3 -acodec aac -b:a 256k -f mp4 \

-movflags faststart+frag_every_frame+empty_moov+default_base_moof \

output.mp4

This seems to work with Chrome and Firefox (so far).

How chunks are appended

After following this example and realizing that it simply does not work as explained there, I threw it away and started over from scratch. Unfortunately, without success: it's still not working.

The following code is supposed to play a track from the very beginning to the very end. However, I also need to be able to seek, and so far this is simply not working: seeking just stops the audio once the seeking event has been triggered.
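For reference, this is roughly how a playback position maps onto a chunk under the scheme above. It is only a sketch: the function name chunkIndexForTime is hypothetical, and it assumes that byte offsets grow linearly with playback time (a roughly constant bitrate), which is the same assumption the byte-based chunking already makes.

```typescript
// Hypothetical helper (not in the original code): maps a playback position
// in seconds to the zero-based index of the chunk assumed to contain it.
function chunkIndexForTime(
  seconds: number,
  chunkDurationSec: number,
  chunksTotal: number,
): number {
  const index = Math.floor(seconds / chunkDurationSec);
  // Clamp into the valid range [0, chunksTotal - 1].
  return Math.min(Math.max(index, 0), chunksTotal - 1);
}

// With 20 s chunks and 4 chunks in total, a seek to 45 s falls into the
// third chunk (index 2).
const exampleSeekChunk = chunkIndexForTime(45, 20, 4);
```

After a seek, this would be the chunk containing the target position, whereas handleOnTimeUpdate below always looks at the chunk after the current one.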

The code

/* Desired chunk duration in seconds. */

const CHUNK_DURATION_SEC = 20;

const AUDIO_EVENTS = [

'ended',

'error',

'play',

'playing',

'seeking',

'seeked',

'pause',

'timeupdate',

'canplay',

'loadedmetadata',

'loadstart',

'updateend',

];

class ChunksLoader {

/** The total number of chunks for the track. */

public readonly chunksTotal: number;

/** The length of one chunk in bytes */

public readonly chunkSize: number;

/** Keeps track of requested chunks. */

private readonly requested: boolean[];

/** URL of endpoint for fetching audio chunks. */

private readonly url: string;

constructor(

private track: Track,

private sourceBuffer: SourceBuffer,

private logger: NGXLogger,

) {

this.chunksTotal = Math.ceil(this.track.duration / CHUNK_DURATION_SEC);

this.chunkSize = Math.ceil(this.track.fileSize / this.chunksTotal);

this.requested = [];

for (let i = 0; i < this.chunksTotal; i++) {

this.requested[i] = false;

}

this.url = `${environment.apiBaseUrl}/api/tracks/${this.track.id}/play`;

}

/**

* Fetch the first chunk.

*/

public begin() {

this.maybeFetchChunk(0);

}

/**

* Handler for the "timeupdate" event. Checks if the next chunk should be fetched.

*

* @param currentTime

* The current time of the track which is currently played.

*/

public handleOnTimeUpdate(currentTime: number) {

const nextChunkIndex = Math.floor(currentTime / CHUNK_DURATION_SEC) + 1;

const hasAllChunks = this.requested.every(val => !!val);

if (nextChunkIndex === (this.chunksTotal - 1) && hasAllChunks) {

this.logger.debug('Last chunk. Calling mediaSource.endOfStream();');

return;

}

if (this.requested[nextChunkIndex] === true) {

return;

}

if (currentTime < CHUNK_DURATION_SEC * (nextChunkIndex - 1 + 0.25)) {

return;

}

this.maybeFetchChunk(nextChunkIndex);

}

/**

* Fetches the chunk if it hasn't been requested yet. After the request finished, the returned

* chunk gets appended to the SourceBuffer-instance.

*

* @param chunkIndex

* The chunk to fetch.

*/

private maybeFetchChunk(chunkIndex: number) {

const start = chunkIndex * this.chunkSize;

const end = start + this.chunkSize - 1;

if (this.requested[chunkIndex] == true) {

return;

}

this.requested[chunkIndex] = true;

if ((end - start) == 0) {

this.logger.warn('Nothing to fetch.');

return;

}

const totalKb = ((end - start) / 1000).toFixed(2);

this.logger.debug(`Starting to fetch bytes ${start} to ${end} (total ${totalKb} kB). Chunk ${chunkIndex + 1} of ${this.chunksTotal}`);

const xhr = new XMLHttpRequest();

xhr.open('get', this.url);

xhr.setRequestHeader('Authorization', `Bearer ${AuthenticationService.getJwtToken()}`);

xhr.setRequestHeader('Range', 'bytes=' + start + '-' + end);

xhr.responseType = 'arraybuffer';

xhr.onload = () => {

this.logger.debug(`Range ${start} to ${end} fetched`);

this.logger.debug(`Requested size: ${end - start + 1}`);

this.logger.debug(`Fetched size: ${xhr.response.byteLength}`);

this.logger.debug('Appending chunk to SourceBuffer.');

this.sourceBuffer.appendBuffer(xhr.response);

};

xhr.send();

};

}

export enum StreamStatus {

NOT_INITIALIZED,

INITIALIZING,

PLAYING,

SEEKING,

PAUSED,

STOPPED,

ERROR

}

export class PlayerState {

status: StreamStatus = StreamStatus.NOT_INITIALIZED;

}

/**

*

*/

@Injectable({

providedIn: 'root'

})

export class MediaSourcePlayerService {

public track: Track;

private mediaSource: MediaSource;

private sourceBuffer: SourceBuffer;

private audioObj: HTMLAudioElement;

private chunksLoader: ChunksLoader;

private state: PlayerState = new PlayerState();

private state$ = new BehaviorSubject<PlayerState>(this.state);

public stateChange = this.state$.asObservable();

private currentTime$ = new BehaviorSubject<number>(null);

public currentTimeChange = this.currentTime$.asObservable();

constructor(

private httpClient: HttpClient,

private logger: NGXLogger

) {

}

get canPlay() {

const state = this.state$.getValue();

const status = state.status;

return status == StreamStatus.PAUSED;

}

get canPause() {

const state = this.state$.getValue();

const status = state.status;

return status == StreamStatus.PLAYING || status == StreamStatus.SEEKING;

}

public playTrack(track: Track) {

this.logger.debug('playTrack');

this.track = track;

this.startPlayingFrom(0);

}

public play() {

this.logger.debug('play()');

this.audioObj.play().then();

}

public pause() {

this.logger.debug('pause()');

this.audioObj.pause();

}

public stop() {

this.logger.debug('stop()');

this.audioObj.pause();

}

public seek(seconds: number) {

this.logger.debug('seek()');

this.audioObj.currentTime = seconds;

}

private startPlayingFrom(seconds: number) {

this.logger.info(`Start playing from ${seconds.toFixed(2)} seconds`);

this.mediaSource = new MediaSource();

this.mediaSource.addEventListener('sourceopen', this.onSourceOpen);

this.audioObj = document.createElement('audio');

this.addEvents(this.audioObj, AUDIO_EVENTS, this.handleEvent);

this.audioObj.src = URL.createObjectURL(this.mediaSource);

this.audioObj.play().then();

}

private onSourceOpen = () => {

this.logger.debug('onSourceOpen');

this.mediaSource.removeEventListener('sourceopen', this.onSourceOpen);

this.mediaSource.duration = this.track.duration;

this.sourceBuffer = this.mediaSource.addSourceBuffer('audio/mp4; codecs="mp4a.40.2"');

// this.sourceBuffer = this.mediaSource.addSourceBuffer('audio/mpeg');

this.chunksLoader = new ChunksLoader(

this.track,

this.sourceBuffer,

this.logger

);

this.chunksLoader.begin();

};

private handleEvent = (e) => {

const currentTime = this.audioObj.currentTime.toFixed(2);

const totalDuration = this.track.duration.toFixed(2);

this.logger.warn(`MediaSource event: ${e.type} (${currentTime} of ${totalDuration} sec)`);

this.currentTime$.next(this.audioObj.currentTime);

const currentStatus = this.state$.getValue();

switch (e.type) {

case 'playing':

currentStatus.status = StreamStatus.PLAYING;

this.state$.next(currentStatus);

break;

case 'pause':

currentStatus.status = StreamStatus.PAUSED;

this.state$.next(currentStatus);

break;

case 'timeupdate':

this.chunksLoader.handleOnTimeUpdate(this.audioObj.currentTime);

break;

case 'seeking':

currentStatus.status = StreamStatus.SEEKING;

this.state$.next(currentStatus);

if (this.mediaSource.readyState == 'open') {

this.sourceBuffer.abort();

}

this.chunksLoader.handleOnTimeUpdate(this.audioObj.currentTime);

break;

}

};

private addEvents(obj, events, handler) {

events.forEach(event => obj.addEventListener(event, handler));

}

}

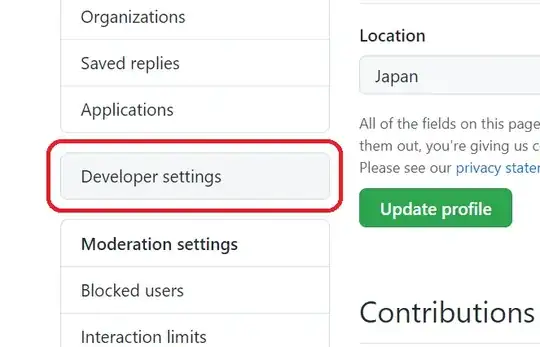

Running it will give me the following output:

Apologies for the screenshot but it's not possible to just copy the output without all the stack traces in Chrome.

I also tried following this example and calling sourceBuffer.abort(), but that didn't work. It looks more like a hack that used to work years ago, but it's still referenced in the docs (see "Example" -> "You can see something similar in action in Nick Desaulnier's bufferWhenNeeded demo ..").

case 'seeking':

currentStatus.status = StreamStatus.SEEKING;

this.state$.next(currentStatus);

if (this.mediaSource.readyState === 'open') {

this.sourceBuffer.abort();

}

break;

Trying with MP3

I have tested the above code under Chrome by converting tracks to MP3:

ffmpeg -i input.mp3 -acodec aac -b:a 256k -f mp3 output.mp3

and creating a SourceBuffer using audio/mpeg as type:

this.mediaSource.addSourceBuffer('audio/mpeg')

I have the same problem when seeking.

The issue without seeking

The above code has another issue:

After two minutes of playing, the audio playback starts to stutter and comes to a halt prematurely. So, the audio plays up to a point and then it stops without any obvious reason.

For whatever reason, another canplay and another playing event are fired. A few seconds later, the audio simply stops.
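One way to narrow this down might be to log which time ranges are actually buffered when playback stalls. The following is a minimal diagnostic sketch, not a fix. The names TimeRangesLike, describeBuffered and isBuffered are hypothetical; the interface only mirrors the length, start() and end() members of the browser's TimeRanges object, so that sourceBuffer.buffered or audioObj.buffered could be passed in directly.

```typescript
// Structural stand-in for the browser's TimeRanges (hypothetical name).
interface TimeRangesLike {
  readonly length: number;
  start(index: number): number;
  end(index: number): number;
}

// Formats all buffered ranges as "[start, end]" pairs for logging.
function describeBuffered(ranges: TimeRangesLike): string {
  const parts: string[] = [];
  for (let i = 0; i < ranges.length; i++) {
    parts.push(`[${ranges.start(i).toFixed(2)}, ${ranges.end(i).toFixed(2)}]`);
  }
  return parts.join(' ');
}

// Returns true if the given position lies inside one of the buffered ranges
// (start inclusive, end exclusive).
function isBuffered(ranges: TimeRangesLike, seconds: number): boolean {
  for (let i = 0; i < ranges.length; i++) {
    if (seconds >= ranges.start(i) && seconds < ranges.end(i)) {
      return true;
    }
  }
  return false;
}
```

Calling something like describeBuffered(this.sourceBuffer.buffered) from the timeupdate and seeking handlers would show whether currentTime has run into a gap between buffered ranges.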