TL;DR: your X_train can be viewed as (batch, spatial dims..., channels). A kernel slides over the spatial dimensions and is applied across all channels in parallel. So a 2D CNN requires two spatial dimensions: (batch, dim 1, dim 2, channels).

So for images of shape (100,100,3), you need a 2D CNN that convolves over the 100-pixel height and the 100-pixel width, across all 3 channels.

Let's unpack the above statement.

First, you need to understand what a CNN (in general) is doing.

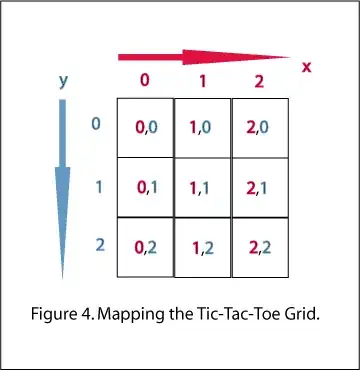

A kernel convolves over the spatial dimensions of a tensor, across its feature maps/channels, performing a simple matrix operation (like a dot product) on the corresponding values.

Kernel moves over the spatial dimensions

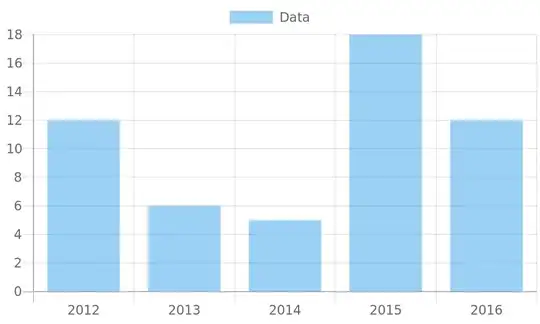

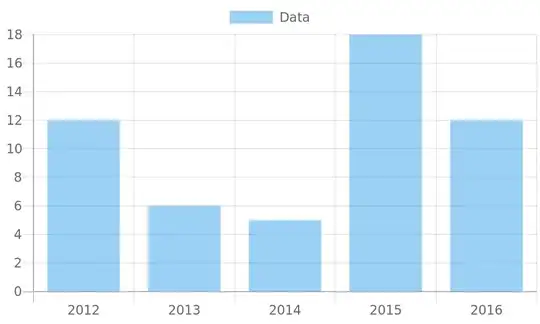

Now, let's say you have 100 images (together called a batch). Each image is 28 by 28 pixels and has 3 channels R, G, B (which are also called feature maps in the context of CNNs). If I were to store this data as a tensor, the shape would be (100,28,28,3).

However, I could just as well have data with no height dimension (like a signal), or data that has an extra spatial dimension, such as a video (height, width, and time).

In general, here is what the input to a CNN-based neural network looks like.
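As a rough sketch of that general layout, here are the three cases as NumPy arrays. The sizes (28 pixels, 16 frames) are made up purely for illustration, and the channels-last ordering is an assumption that matches the Keras default.

```python
import numpy as np

# Hypothetical sizes, chosen only for illustration.
batch, channels = 100, 3

signal = np.zeros((batch, 28, channels))         # 1 spatial dim:  (batch, width/time, channels)
images = np.zeros((batch, 28, 28, channels))     # 2 spatial dims: (batch, height, width, channels)
video = np.zeros((batch, 28, 28, 16, channels))  # 3 spatial dims: (batch, height, width, time, channels)

for name, x in [("signal", signal), ("images", images), ("video", video)]:
    # Everything between the batch axis and the channel axis is spatial.
    print(name, x.shape, "spatial dims:", x.ndim - 2)
```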

Same kernel, all channels

The second key point you need to know is that a 2D kernel convolves over 2 spatial dimensions, but it covers all the feature maps/channels at every position. So, if I have a (3,3) kernel, it gets applied over the R, G, B channels (in parallel) as it moves over the height and width of the image. In practice the kernel holds a separate (3,3) slice of weights for each input channel, and the per-channel results are summed into one output value.

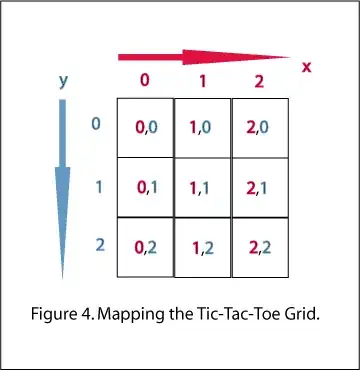
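To make the "all channels at once" point concrete, here is a small NumPy sketch for a single window position. The random values and the names `window` and `kernel` are illustrative only. It assumes one filter shaped (kernel height, kernel width, input channels), which is how Keras lays out each filter of a Conv2D layer.

```python
import numpy as np

rng = np.random.default_rng(0)
window = rng.normal(size=(3, 3, 3))  # one 3x3 patch of an RGB image: (height, width, channels)
kernel = rng.normal(size=(3, 3, 3))  # one filter: a 3x3 slice of weights per input channel

# Dot product per channel, then a sum across the channels ...
per_channel = [np.sum(window[:, :, c] * kernel[:, :, c]) for c in range(3)]
out_from_channels = sum(per_channel)

# ... gives the same number as one dot product over the whole (3, 3, 3) block.
out_direct = np.sum(window * kernel)

print(np.isclose(out_from_channels, out_direct))
```

So although the kernel only moves along height and width, every output value already mixes information from all input channels.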

Operation is a dot product

Finally, the operation (for a single feature map/channel and single convolution window) can be visualized like below.

Therefore, in short -

- A kernel gets applied to the spatial dimensions of the data

- A kernel has as many dimensions as the data has spatial dimensions

- A kernel applies over all the feature maps/channels at once

- The operation is a simple dot product between the kernel and window
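The points above can be sketched for a single channel in plain NumPy. This is a minimal illustration, not how any framework implements it: it assumes no padding and a stride of 1, and the helper name `conv2d_single_channel` is made up for this example. Like deep learning libraries, it does not flip the kernel.

```python
import numpy as np

def conv2d_single_channel(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1), one dot product per window."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            window = image[i:i + kh, j:j + kw]   # current convolution window
            out[i, j] = np.sum(window * kernel)  # dot product of window and kernel
    return out

image = np.arange(25, dtype=float).reshape(5, 5)  # toy 5x5 greyscale image
kernel = np.ones((3, 3))                          # toy kernel that sums each window
result = conv2d_single_channel(image, kernel)
print(result.shape)  # (3, 3)
print(result[0, 0])  # 54.0
```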

Let's take the example of tensors with a single feature map/channel (so, for an image, it would be greyscale) -

So, with that intuition, we see that to use a 1D CNN, your data must have 1 spatial dimension, which means each sample needs to be 2D (spatial dimension and channels), which means X_train must be a 3D tensor (batch, spatial dimension, channels).

Similarly, for a 2D CNN, you would have 2 spatial dimensions (H, W for example), so each sample would be 3D (H, W, Channels) and X_train would be (Samples, H, W, Channels).
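That rule of thumb can be written as a tiny helper. The function name `conv_rank` is hypothetical, and it assumes a channels-last layout (batch first, channels last); the 500 in the second shape is an arbitrary sample count.

```python
def conv_rank(x_train_shape):
    """Number of spatial dims for a channels-last shape (batch, *spatial, channels)."""
    rank = len(x_train_shape) - 2  # drop the batch and channel axes
    if rank not in (1, 2, 3):
        raise ValueError(f"expected 3D, 4D or 5D X_train, got shape {x_train_shape}")
    return rank

for shape in [(100, 7, 3), (500, 100, 100, 3), (100, 6, 6, 2, 3)]:
    print(shape, "-> Conv%dD" % conv_rank(shape))
```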

Let's try this with code -

```python
import tensorflow as tf
from tensorflow.keras import layers

X_2D = tf.random.normal((100,7,3))      # Samples, width/time, channels (feature maps)
X_3D = tf.random.normal((100,5,7,3))    # Samples, height, width, channels (feature maps)
X_4D = tf.random.normal((100,6,6,2,3))  # Samples, height, width, time, channels (feature maps)
```

For applying 1D CNN -

```python
# With padding='same', the input is padded at the edges so the output
# keeps the same spatial size as the input
# Out feature maps = 10, kernel (3,)
cnn1d = layers.Conv1D(10, 3, padding='same')(X_2D)
print(X_2D.shape,'->',cnn1d.shape)

# (100, 7, 3) -> (100, 7, 10)
```

For applying 2D CNN -

```python
# Out feature maps = 10, kernel (3,3)
cnn2d = layers.Conv2D(10, (3,3), padding='same')(X_3D)
print(X_3D.shape,'->',cnn2d.shape)

# (100, 5, 7, 3) -> (100, 5, 7, 10)
```

For 3D CNN -

```python
# Out feature maps = 10, kernel (3,3,2)
cnn3d = layers.Conv3D(10, (3,3,2), padding='same')(X_4D)
print(X_4D.shape,'->',cnn3d.shape)

# (100, 6, 6, 2, 3) -> (100, 6, 6, 2, 10)
```
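If you want to predict these shapes without running TensorFlow, the usual shape arithmetic can be sketched in pure Python. The helper `conv_output_shape` is a made-up name, and it assumes channels-last data, the same stride on every axis, and no dilation.

```python
import math

def conv_output_shape(input_shape, filters, kernel_size, padding="same", strides=1):
    """Output shape of a convolution on channels-last input (batch, *spatial, channels)."""
    batch, *spatial, _channels = input_shape
    if padding == "same":
        # Input is padded so only the stride shrinks the spatial size.
        out_spatial = [math.ceil(d / strides) for d in spatial]
    elif padding == "valid":
        # No padding: the kernel must fit entirely inside the input.
        out_spatial = [math.ceil((d - k + 1) / strides) for d, k in zip(spatial, kernel_size)]
    else:
        raise ValueError("padding must be 'same' or 'valid'")
    return (batch, *out_spatial, filters)

print(conv_output_shape((100, 7, 3), 10, (3,)))                        # (100, 7, 10)
print(conv_output_shape((100, 5, 7, 3), 10, (3, 3)))                   # (100, 5, 7, 10)
print(conv_output_shape((100, 6, 6, 2, 3), 10, (3, 3, 2)))             # (100, 6, 6, 2, 10)
print(conv_output_shape((100, 5, 7, 3), 10, (3, 3), padding="valid"))  # (100, 3, 5, 10)
```

Note that the channel axis of the output always equals the number of filters, regardless of how many channels went in.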