Does SQLite look like a smart choice over CSV in this case ?

I'd suggest yes, mainly because you would probably want to do something with the data other than spend the rest of your life looking through it.

Perhaps you want some sort of aggregated stats (a summary: averages, maximum values, minimum values, perhaps to compare periods). SQLite can make that pretty easy and pretty efficient.
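As a rough illustration of that kind of summary, here is a minimal sketch using Python's built-in sqlite3 module (Python is my assumption, any SQLite client would do). The table is a cut-down version of the physical table with a single reading column, and the sample values are made up.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Cut-down, hypothetical version of the physical table: one reading column only.
conn.execute("CREATE TABLE physical(timestamp INTEGER PRIMARY KEY, fld1 REAL)")

# Made-up sample readings: (unix timestamp, value), covering two days.
rows = [
    (0, 1.0), (300, 3.0),          # 1970-01-01
    (86400, 10.0), (86700, 20.0),  # 1970-01-02
]
conn.executemany("INSERT INTO physical VALUES (?, ?)", rows)

# One row per day with the minimum, maximum, average and number of readings.
summary = conn.execute(
    """
    SELECT date(timestamp, 'unixepoch') AS day,
           min(fld1), max(fld1), avg(fld1), count(*)
    FROM physical
    GROUP BY day
    ORDER BY day
    """
).fetchall()

for line in summary:
    print(line)
```

Grouping by month or year instead would only need a different strftime format in place of date().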

The instrument will be running 24/7 for years, so I guess I'll have to split the database into chunks (e.g. monthly) to keep the file reasonably small and for archiving. I wonder how easy that would be. Can it be automated with a cron job ?

No need for cron; utilise the power of SQLite, a TRIGGER could be handy.

Here's an example that shows a little of what you could do.

As you have 2 distinct sets of readings, physical and housekeeping, the example has a table for each.

- the physical table has 1 column for the timestamp of the reading and 4 columns for the readings.

- the housekeeping table has 1 column for the timestamp and 10 reading columns.

The example automatically generates some data so that there are results to show. A table, just_for_load, controls how much data is inserted: it has 1 row with 1 value (although it could have more rows, in which case the values are summed), and this value determines how much data is added.

- with the value as 1000, 1000 physical readings will be added, one for every 5 minutes (about 3.5 days' worth of data).

- with the value as 1000, 300,000 rows will be added to the housekeeping table, one per second, i.e. 300 rows for every 5 minutes.
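To make the arithmetic above concrete, here is a small sketch of the same recursive-counter loading technique, again driven from Python's sqlite3 module (my assumption). It uses a fixed, arbitrary base time instead of 'now' and a cut-down table so that the result is repeatable.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Cut-down, hypothetical version of the physical table.
conn.execute("CREATE TABLE physical(timestamp INTEGER PRIMARY KEY, fld1 REAL)")

base = 1_700_000_000  # fixed, arbitrary start time (assumption) instead of 'now'
n_rows = 1000         # plays the role of base_count in just_for_load

# The recursive CTE counts 1..n_rows; each count becomes one row, 300 s apart.
conn.execute(
    """
    WITH RECURSIVE counter(i) AS
        (SELECT 1 UNION ALL SELECT i + 1 FROM counter WHERE i < :n)
    INSERT INTO physical
    SELECT :base + i * 300, random() FROM counter
    """,
    {"n": n_rows, "base": base},
)
conn.commit()

count, first, last = conn.execute(
    "SELECT count(*), min(timestamp), max(timestamp) FROM physical"
).fetchone()
span_days = (last - first) / 86400  # seconds between first and last row, in days
print(count, round(span_days, 2))
```

The span between the first and last of 1000 five-minute readings works out at a little under 3.5 days, which is where the "about 3.5 days" figure comes from.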

The example demonstrates automated, TRIGGER-based tidying up. It doesn't back up the data, but it does clear old data from both tables; it is just an example showing that you can do things automatically. The TRIGGER is named auto_tidyup.
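If you do want the old rows kept rather than just deleted, one possible approach is to copy them into a separate archive file before deleting. A trigger body is limited to INSERT, UPDATE, DELETE and SELECT statements, so the ATTACH has to be driven by whatever program writes the readings. The sketch below assumes that program is Python; the function name archive_older_than, the file names and the cut-down table are all hypothetical.

```python
import os
import sqlite3
import tempfile


def archive_older_than(conn, archive_path, cutoff):
    """Copy physical rows older than cutoff into archive_path, then delete them."""
    conn.execute("ATTACH DATABASE ? AS archive", (archive_path,))
    try:
        conn.execute(
            "CREATE TABLE IF NOT EXISTS archive.physical"
            "(timestamp INTEGER PRIMARY KEY, fld1 REAL)"
        )
        with conn:  # copy and delete are committed (or rolled back) together
            conn.execute(
                "INSERT OR IGNORE INTO archive.physical "
                "SELECT * FROM main.physical WHERE timestamp < ?",
                (cutoff,),
            )
            conn.execute("DELETE FROM main.physical WHERE timestamp < ?", (cutoff,))
    finally:
        conn.execute("DETACH DATABASE archive")


# Demonstration with throwaway files and made-up rows (timestamps 0..9).
workdir = tempfile.mkdtemp()
live = sqlite3.connect(os.path.join(workdir, "live.db"))
live.execute("CREATE TABLE physical(timestamp INTEGER PRIMARY KEY, fld1 REAL)")
live.executemany(
    "INSERT INTO physical VALUES (?, ?)", [(t, float(t)) for t in range(10)]
)
live.commit()

archive_file = os.path.join(workdir, "archive.db")
archive_older_than(live, archive_file, cutoff=6)

remaining = live.execute("SELECT count(*) FROM physical").fetchone()[0]
arch = sqlite3.connect(archive_file)
archived = arch.execute("SELECT count(*) FROM physical").fetchone()[0]
arch.close()
print(remaining, archived)
```

Calling something like this once a month with a fresh archive file name would give the monthly chunks asked about, while the live database stays small.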

To confirm that the TRIGGER is being activated, it also records the start and end of its processing in another table, tidyup_log. The processing only runs when the TRIGGER is activated and its WHEN clause condition is met, which reduces how often it tries to do anything.

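- For testing, the TRIGGER's WHEN clause compares against the current day of the month (23 when this was written) so that it fires on every insert; once tested it would be changed to a suitable schedule, such as the first day of the month.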
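To see the WHEN clause gating in isolation, here is a small sketch run through Python's sqlite3 module (my assumption). It is a variation on the example's trigger, not the same thing: the condition is keyed on the inserted row's own timestamp rather than 'now' so that the outcome is repeatable, and the tables are cut down.

```python
import sqlite3
from datetime import datetime, timezone


def ts(year, month, day):
    """Unix timestamp for midnight UTC on the given date."""
    return int(datetime(year, month, day, tzinfo=timezone.utc).timestamp())


conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE physical(timestamp INTEGER PRIMARY KEY, fld1 REAL);
    CREATE TABLE tidyup_log(timestamp INTEGER, action_performed TEXT);

    /* Variation for illustration: fires only when the NEW row is dated the 1st */
    CREATE TRIGGER auto_tidyup AFTER INSERT ON physical
    WHEN CAST(strftime('%d', new.timestamp, 'unixepoch') AS INTEGER) = 1
    BEGIN
        INSERT INTO tidyup_log VALUES (new.timestamp, 'TIDY Started');
        DELETE FROM physical WHERE timestamp < new.timestamp - (60 * 60 * 24 * 365);
        INSERT INTO tidyup_log VALUES (new.timestamp, 'TIDY ENDED');
    END;
    """
)

# Two rows dated the 15th: the WHEN condition is false, so nothing is logged.
conn.execute("INSERT INTO physical VALUES (?, 1.0)", (ts(2020, 6, 15),))
conn.execute("INSERT INTO physical VALUES (?, 2.0)", (ts(2022, 1, 15),))
log_before = conn.execute("SELECT count(*) FROM tidyup_log").fetchone()[0]

# A row dated the 1st: the trigger runs and removes rows more than 365 days older.
conn.execute("INSERT INTO physical VALUES (?, 3.0)", (ts(2022, 2, 1),))
log_after = conn.execute("SELECT count(*) FROM tidyup_log").fetchone()[0]
rows_left = conn.execute("SELECT count(*) FROM physical").fetchone()[0]
print(log_before, log_after, rows_left)
```

Here the 2020 row is removed by the third insert, and the log gains its start and end entries only at that point.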

So in summary 4 tables (1 for testing purposes only) and 1 trigger.

Once the data is loaded, 3 queries are used to extract useful data from it (well, sort of).

The Example SQL (note that perhaps the most complicated SQL is for loading the testing data) :-

DROP TABLE IF EXISTS physical;

DROP TABLE IF EXISTS housekeeping;

DROP TRIGGER IF EXISTS auto_tidyup;

DROP TABLE IF EXISTS tidyup_log;

DROP TABLE IF EXISTS just_for_load;

CREATE TABLE IF NOT EXISTS physical(timestamp INTEGER PRIMARY KEY, fld1 REAL, fld2 REAL, fld3 REAL, fld4 REAL);

CREATE TABLE IF NOT EXISTS housekeeping(timestamp INTEGER PRIMARY KEY, prm1 REAL, prm2 REAL, prm3 REAL, prm4 REAL, prm5 REAL, prm6 REAL, prm7 REAL, prm8 REAL, prm9 REAL, prm10 REAL);

CREATE TABLE IF NOT EXISTS tidyup_log (timestamp INTEGER, action_performed TEXT);

CREATE TRIGGER IF NOT EXISTS auto_tidyup AFTER INSERT ON physical

WHEN CAST(strftime('%d','now') AS INTEGER) = 23 /* <<<<<<<<<< TESTING SO GET HITS >>>>>>>>>>*/

/*WHEN CAST(strftime('%d','now') AS INTEGER) = 1 */ /* IF TODAY FIRST DAY OF MONTH */

BEGIN

INSERT INTO tidyup_log VALUES (strftime('%s','now'),'TIDY Started');

DELETE FROM physical WHERE timestamp < new.timestamp - (60 * 60 * 24 * 365 /*approx a year */);

DELETE FROM housekeeping WHERE timestamp < new.timestamp - (60 * 60 * 24 * 365);

INSERT INTO tidyup_log VALUES (strftime('%s','now'),'TIDY ENDED');

END

;

/* ONLY FOR LOADING Test Data controls number of rows added */

CREATE TABLE IF NOT EXISTS just_for_load (base_count INTEGER);

INSERT INTO just_for_load VALUES(1000); /* Number of physical rows to add, 1 per 5 minutes e.g. 1000 is close to 3.5 days */

WITH RECURSIVE counter(i) AS

(SELECT 1 UNION ALL SELECT i+1 FROM counter WHERE i < (SELECT sum(base_count) FROM just_for_load))

INSERT INTO physical SELECT strftime('%s','now','+'||(i * 5)||' minutes'), random(), random(), random(), random() FROM counter

;

WITH RECURSIVE counter(i) AS

(SELECT 1 UNION ALL SELECT i+1 FROM counter WHERE i < (SELECT (sum(base_count) * 300) FROM just_for_load))

INSERT INTO housekeeping SELECT strftime('%s','now','+'||(i)||' second'), random(), random(), random(), random(), random(), random(), random(), random(), random(), random() FROM counter

;

/* <<<<<<<<<< DATA LOADED SO EXTRACT IT >>>>>>>>> */

SELECT datetime(timestamp,'unixepoch'), fld1,fld2,fld3,fld4 FROM physical;

/* First query to basically show the 5 minute intervals (and lots of random values)*/

/* This query gets the sum and average of the 10 readings over a 5 minute window */

SELECT

'From '||datetime(min(timestamp),'unixepoch')||' To '||datetime(max(timestamp),'unixepoch') AS Range,

sum(prm1) AS sumP1, avg(prm1) AS avgP1,

sum(prm2) AS sumP2, avg(prm2) AS avgP2,

sum(prm3) AS sumP3, avg(prm3) AS avgP3,

sum(prm4) AS sumP4, avg(prm4) AS avgP4,

sum(prm5) AS sumP5, avg(prm5) AS avgP5,

sum(prm6) AS sumP6, avg(prm6) AS avgP6,

sum(prm7) AS sumP7, avg(prm7) AS avgP7,

sum(prm8) AS sumP8, avg(prm8) AS avgP8,

sum(prm9) AS sumP9, avg(prm9) AS avgP9,

sum(prm10) AS sumP10, avg(prm10) AS avgP10

FROM housekeeping GROUP BY timestamp / 300

;

/* This query shows that the TRIGGER is being activated (even though it does no deletions) */

SELECT * FROM tidyup_log;

/* Tidy up the Testing environment */

DROP TABLE IF EXISTS physical;

DROP TABLE IF EXISTS housekeeping;

DROP TRIGGER IF EXISTS auto_tidyup;

DROP TABLE IF EXISTS tidyup_log;

DROP TABLE IF EXISTS just_for_load;

- The comments should explain quite a bit.

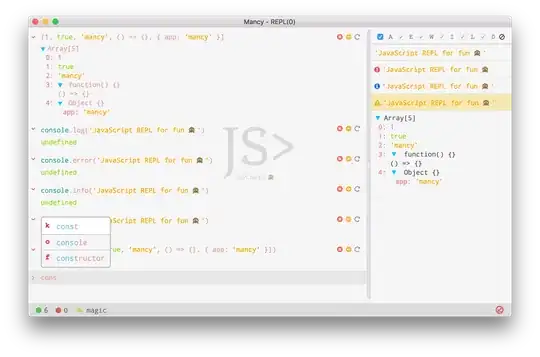

- you may wish to look at:

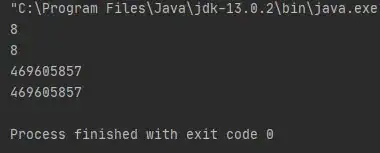

Results

- Extract from the physical table (showing 5 minute intervals of the data aka data you probably don't want to look at)

- Extract more useful data averages and sums of each of the 10 readings every 5 minutes

- 1001 rows because the data doesn't start and end on a 5 minute boundary

- The tidyup log (to show the TRIGGER is being activated)

- a start and an end row for each physical row inserted (the WHEN criteria has been set to trigger on every insert), hence 2000 rows
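The extra group in the 5 minute summary (1001 rather than 1000) comes from GROUP BY timestamp / 300 using integer division, so a run of seconds that starts part-way through a 300 second bucket spills into one more bucket. The sketch below shows this on a smaller scale (3000 seconds rather than 300,000), using Python's sqlite3 module and a cut-down table; the helper name bucket_count and the start times are my own.

```python
import sqlite3


def bucket_count(start, n_seconds=3000, width=300):
    """Insert one row per second from start, return the number of width-second groups."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE housekeeping(timestamp INTEGER PRIMARY KEY, prm1 REAL)"
    )
    conn.execute(
        """
        WITH RECURSIVE counter(i) AS
            (SELECT 0 UNION ALL SELECT i + 1 FROM counter WHERE i < :n - 1)
        INSERT INTO housekeeping SELECT :start + i, 1.0 FROM counter
        """,
        {"n": n_seconds, "start": start},
    )
    # Integer division puts every timestamp in the same 300 s window into one group.
    groups = conn.execute(
        "SELECT count(*) FROM housekeeping GROUP BY timestamp / :w", {"w": width}
    ).fetchall()
    conn.close()
    return len(groups)


aligned = bucket_count(start=300_000)    # starts exactly on a 300 s boundary
unaligned = bucket_count(start=300_007)  # starts 7 s after a boundary
print(aligned, unaligned)
```

With these inputs the aligned run falls into 10 groups and the offset run into 11, the same off-by-one as 1000 versus 1001 in the results.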

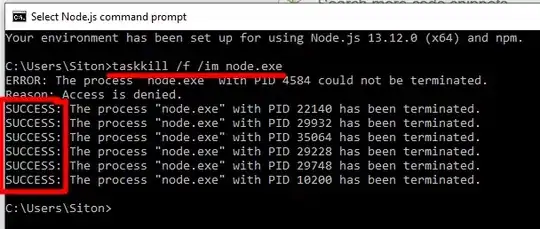

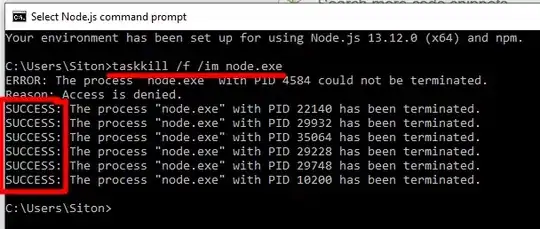

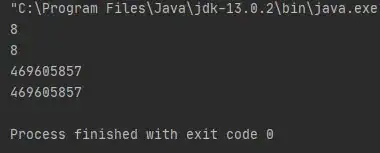

Lastly, just to show the 300,000 rows being inserted, part of the message log :-

WITH RECURSIVE counter(i) AS

(SELECT 1 UNION ALL SELECT i+1 FROM counter WHERE i < (SELECT (sum(base_count) * 300) FROM just_for_load))

INSERT INTO housekeeping SELECT strftime('%s','now','+'||(i)||' second'), random(),random(),random(),random(), random(),random(),random(),random(), random(),random()FROM counter

> Affected rows: 300000

> Time: 1.207s