I'm trying to build a chunked file uploading mechanism using modern Swift Concurrency.

There is a streamed file reader which I'm using to read files chunk by chunk, 1 MB at a time.

It has two closures: nextChunk: (DataChunk) -> Void and completion: () -> Void. The first one is called once for every chunk-sized block of data read from the InputStream.

To make this reader compatible with Swift Concurrency, I wrote an extension that wraps it in an AsyncStream, which seems to be the most suitable tool for such a case.

    public extension StreamedFileReader {
        func read() -> AsyncStream<DataChunk> {
            AsyncStream { continuation in
                self.read(nextChunk: { chunk in
                    continuation.yield(chunk)
                }, completion: {
                    continuation.finish()
                })
            }
        }
    }

Using this AsyncStream I read some file iteratively and make network calls like this:

    func process(_ url: URL) async {
        // ...
        do {
            for await chunk in reader.read() {
                let request = // ...
                _ = try await service.upload(data: chunk.data, request: request)
            }
        } catch let error {
            reader.cancelReading()
            print(error)
        }
    }

The issue is that I'm not aware of any mechanism that limits execution to no more than N network calls at a time. So when I try to upload a huge file (5 GB), memory consumption grows drastically. That defeats the purpose of streamed reading: it would be just as easy to read the entire file into memory (I'm joking, but that is how it looks).

In contrast, if I use good old GCD, everything works like a charm:

    func process(_ url: URL) {
        let semaphore = DispatchSemaphore(value: 5) // limit to no more than 5 requests at a given time
        let uploadGroup = DispatchGroup()
        let uploadQueue = DispatchQueue.global(qos: .userInitiated)
        uploadQueue.async(group: uploadGroup) {
            // ...
            reader.read(nextChunk: { chunk in
                let request = // ...
                uploadGroup.enter()
                semaphore.wait()
                service.upload(chunk: chunk, request: request) {
                    uploadGroup.leave()
                    semaphore.signal()
                }
            }, completion: { _ in
                print("read completed")
            })
        }
    }

Admittedly, this is not exactly the same behavior: it uses a concurrent DispatchQueue, whereas the AsyncStream loop runs sequentially.

So I did a little research and found out that TaskGroup is probably what I need in this case. It allows running async tasks in parallel.

I tried it this way:

    func process(_ url: URL) async {
        // ...
        do {
            let totalParts = try await withThrowingTaskGroup(of: Void.self) { [service] group -> Int in
                var counter = 1
                for await chunk in reader.read() {
                    let request = // ...
                    group.addTask {
                        _ = try await service.upload(data: chunk.data, request: request)
                    }
                    counter = chunk.index
                }
                return counter
            }
        } catch let error {
            reader.cancelReading()
            print(error)
        }
    }

In that case memory consumption is even higher than in the example that iterates over the AsyncStream!

I suspect there should be some condition under which I need to suspend the group or a task, so that group.addTask is called only when the tasks I'm about to add can actually be handled, but I have no idea how to do it.

I found this Q/A and tried calling try await group.next() after every fifth chunk, but it didn't help at all.
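For reference, a minimal sketch of the sliding-window form of that idea: once N child tasks are in flight, wait for one to finish before adding the next. The helper name `forEachLimited` and its parameters are hypothetical, not part of any library. Note that this only bounds the number of running tasks. It does not slow down a callback-based producer that feeds an AsyncStream, because AsyncStream buffers elements without limit by default.

```swift
// Hypothetical helper: runs `operation` for every element of `sequence`,
// keeping at most `maxConcurrent` child tasks in flight at any time.
// Returns the number of elements processed.
func forEachLimited<S: AsyncSequence>(
    _ sequence: S,
    maxConcurrent: Int,
    operation: @escaping @Sendable (S.Element) async throws -> Void
) async throws -> Int where S.Element: Sendable {
    precondition(maxConcurrent > 0, "maxConcurrent must be positive")
    return try await withThrowingTaskGroup(of: Void.self) { group in
        var inFlight = 0
        var processed = 0
        for try await element in sequence {
            // The window is full: suspend until one child task completes.
            if inFlight >= maxConcurrent {
                _ = try await group.next()
                inFlight -= 1
            }
            group.addTask { try await operation(element) }
            inFlight += 1
            processed += 1
        }
        // Drain the remaining tasks so that any error is rethrown.
        for try await _ in group {}
        return processed
    }
}
```

In terms of the code above, `sequence` would be `reader.read()` and `operation` would wrap the call to `service.upload`, with `maxConcurrent: 5` mirroring the semaphore value in the GCD version.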

Is there any mechanism similar to DispatchGroup + DispatchSemaphore but for modern concurrency?
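One possible contributor to the memory growth, independent of how many uploads run at once, is that an AsyncStream built from a continuation accepts every `yield` immediately and buffers without limit by default, so a reader that pushes chunks as fast as it can read them will keep filling the buffer. A pull-based alternative is sketched below using `AsyncStream(unfolding:)`, which produces the next element only when the consumer asks for it. This swaps the StreamedFileReader for a plain FileHandle purely for illustration; the function name `chunkStream` and its parameters are assumptions, not the original reader's API.

```swift
import Foundation

// Hypothetical pull-based replacement for the callback-based reader:
// each chunk is read from disk only when the consumer requests the next element.
func chunkStream(of url: URL, chunkSize: Int = 1_048_576) throws -> AsyncStream<Data> {
    let handle = try FileHandle(forReadingFrom: url)
    return AsyncStream(unfolding: {
        // A read error is treated the same as end of file in this sketch.
        guard let data = try? handle.read(upToCount: chunkSize), !data.isEmpty else {
            try? handle.close()
            return nil // ends the stream
        }
        return data
    })
}
```

With a stream like this, the sequential `for await` loop holds roughly one chunk at a time, and combining it with a bounded task group holds roughly N chunks.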

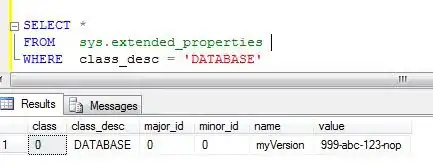

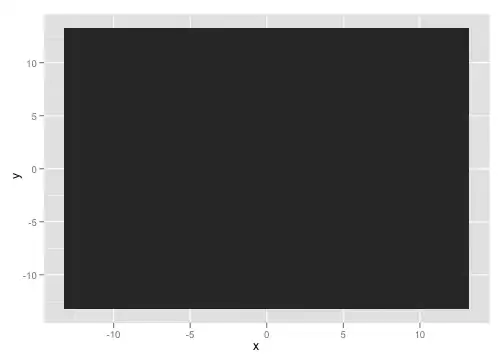

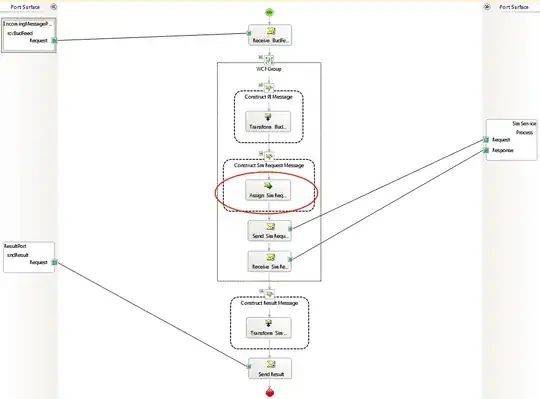

UPDATE: In order to better demonstrate the difference between all 3 ways here are screenshots of memory report