I forget offhand exactly how the data cache works on the cortex-m7/armv7-m. As I remember it, there is no MMU and cacheability is based on address. ARM and ST would be smart enough to provide both cached and non-cached access to sram from the processor core.

If you are wanting to send or receive data using DMA you do not go through the cache.

You linked an earlier question for which I had provided an answer.

Caches contain some amount of sram, as we tend to see a spec for this many KBytes or this many MBytes, whatever. But there are also tag rams and other infrastructure. How does the cache know if there is a hit or a miss? Not from the data, but from other bits of information taken from the address of the transaction. Some number of bits of that address are taken and compared against however many "ways" you have. There may be 8 ways, for example, so there are 8 small memories; think of them as arrays of structures in C. Each structure holds some information: is this cache line valid? If valid, what is the tag (the bits of address) that it is tied to? Is it clean or dirty?
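To make the "arrays of structures in C" picture concrete, here is a minimal sketch of a tag lookup. The geometry (32-byte lines, 64 sets, 4 ways) and every name in it (`tag_entry`, `tag_ram`, `cache_lookup`, `tag_fill`) are made up for illustration; this is not the cortex-m7's actual layout.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Illustrative geometry only, not the real part's cache layout. */
#define LINE_BYTES 32u   /* bytes per cache line */
#define NUM_SETS   64u   /* lines per way */
#define NUM_WAYS   4u

/* One tag ram entry: the bookkeeping kept beside each cache line. */
struct tag_entry {
    bool     valid;  /* does this line hold anything useful? */
    bool     dirty;  /* written by the core, not yet in system memory */
    uint32_t tag;    /* upper address bits this line is tied to */
};

static struct tag_entry tag_ram[NUM_WAYS][NUM_SETS];

/* Middle address bits select the set, upper bits form the tag. */
static uint32_t addr_set(uint32_t addr) { return (addr / LINE_BYTES) % NUM_SETS; }
static uint32_t addr_tag(uint32_t addr) { return addr / (LINE_BYTES * NUM_SETS); }

/* Record that `way` now holds the line containing `addr` (clean). */
static void tag_fill(unsigned way, uint32_t addr)
{
    assert(way < NUM_WAYS);
    struct tag_entry *e = &tag_ram[way][addr_set(addr)];
    e->valid = true;
    e->dirty = false;
    e->tag   = addr_tag(addr);
}

/* Returns the way that hits, or -1 on a miss. */
static int cache_lookup(uint32_t addr)
{
    uint32_t set = addr_set(addr);
    uint32_t tag = addr_tag(addr);
    for (unsigned way = 0; way < NUM_WAYS; way++) {
        const struct tag_entry *e = &tag_ram[way][set];
        if (e->valid && e->tag == tag)
            return (int)way;
    }
    return -1;
}
```

Note that the data itself never takes part in the hit/miss decision; only the valid bit and the tag do.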

Clean or dirty: the whole point of the caching infrastructure is to hold information in a faster sram (sram in mcus is very fast already, so why a cache in the first place?). That means write transactions, if they go through the cache (they should in some form), get written to the cache and then, based on design/policy, get written out to system memory, or at least to the memory side of the cache. While the cache holds information that has been written but is not yet in system memory, that line is dirty. When you clean the cache (ARM's term; flush is another), you go through all of the cache looking for lines that are valid and dirty, and you initiate writes to system memory to clean them. This is how you force things out of the cache into system memory for coherency reasons, if you have a need to do that.

Invalidating a cache simply means you go through the tag rams and change the valid bit to indicate invalid for each cache line. Basically that "loses" all information about that cache line; it is now available to use. It will not result in any hits and it will not cause a write to the system on a clean/flush. The actual cache line in the cache memory does not have to be zeroed or put in any other state. Technically only the valid/invalid bit or bits change.

How things generally get into a cache are certainly from reads. Depending on the design and settings if a read is cacheable then the cache will first look to see if it has a tag for that item and if it is valid, if so then it simply takes the information in the cache and returns it. If there is a miss, that data does not have a copy in the cache, then it initiates one or more cache line reads from the system side. So a single byte read can/will cause a larger, sometimes much larger, read to happen on the system side, the transaction is held until that (much larger) data (read) returns and then it is put in the cache and the item requested is returned to the processor.

Depending on the architecture and settings, writes may or may not create an entry in the cache. If a (cacheable) write happens and there are no hits in the cache, then it may just go straight to the system side as a write of that size and shape, as if the cache was not there. If there is a cache hit then it will go into the cache, and that cache line (or those lines) is marked as dirty. Then, depending on the design, it may also be written to system memory as a side effect of the write from the processor side: the processor is freed to continue execution, but the cache and other logic (write buffer) continue to process the transaction, moving the new data to the system side, essentially cleaning/flushing automatically. One normally does not expect this, as it takes away performance that the cache was there to provide in the first place.

In any case if it is determined that a transaction has a miss and it is to be cached, then based on that tag, the ways have already been examined to determine if there was a hit. One of the ways will be chosen to hold this new cache line. How that is determined is based on design and in some cases programmable settings. Hopefully if there are any that are invalid then it would go to one of those. But round robin, randomizer, oldest first, etc are solutions you may see. And if there is dirty data in that space then it has to get written out first, making room for the new information. So, absolutely a single byte or single word read (since they have the same performance in a system like this) can require a cache flush of a cache line, then a read from the system and then the result is returned, more clock cycles than if the cache was not there. Nature of the beast. Caches are not perfect, with the right information and experience you can easily write code that makes the cache degrade the performance of the application.
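As a rough sketch of that victim selection: prefer an invalid way, otherwise fall back to round robin, and report whether the evicted line is dirty and needs a write-back first. Real designs differ, and the names (`way_state`, `victim`, `pick_victim`) and the 4-way count are assumptions for illustration only.

```c
#include <assert.h>
#include <stdbool.h>

#define NUM_WAYS 4u   /* illustrative, not a real part's geometry */

/* Per-way state for the one set the missing address maps to. */
struct way_state {
    bool valid;
    bool dirty;
};

struct victim {
    unsigned way;             /* way that will hold the new line */
    bool     needs_writeback; /* old contents must go to memory first */
};

/* Pick a way for a line fill: an invalid way if any, else round robin.
 * rr_next is the round-robin pointer; it only advances when used. */
static struct victim pick_victim(const struct way_state ways[NUM_WAYS],
                                 unsigned *rr_next)
{
    struct victim v;
    assert(*rr_next < NUM_WAYS);

    for (unsigned w = 0; w < NUM_WAYS; w++) {
        if (!ways[w].valid) {
            v.way = w;
            v.needs_writeback = false;  /* nothing to lose */
            return v;
        }
    }

    v.way = *rr_next;
    v.needs_writeback = ways[v.way].dirty;  /* dirty data goes out first */
    *rr_next = (*rr_next + 1u) % NUM_WAYS;
    return v;
}
```

The `needs_writeback` case is the expensive one described above: a write out to system memory, then the line read, then the result returned to the processor.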

Clean means if a cache line is valid and dirty then write it out to system memory and mark it as clean.

Invalidate means if the cache line is valid then mark it as invalid. If it was valid and dirty that information is lost.
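The difference between the two operations can be shown on a toy model. This is only a sketch: the `cache_line` structure, the tiny `system_memory` array and the function names are made up, and `base` stands in for the tag by storing the line's system address directly.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>
#include <string.h>

#define LINE_BYTES 32u
#define NUM_LINES  4u

struct cache_line {
    bool     valid;
    bool     dirty;
    uint32_t base;              /* offset of the line in system_memory */
    uint8_t  data[LINE_BYTES];
};

static struct cache_line cache[NUM_LINES];
static uint8_t system_memory[256];  /* stand-in for memory behind the cache */

/* Clean: write out every valid and dirty line, then mark it clean. */
static void cache_clean(void)
{
    for (unsigned i = 0; i < NUM_LINES; i++) {
        struct cache_line *l = &cache[i];
        if (l->valid && l->dirty) {
            assert(l->base + LINE_BYTES <= sizeof system_memory);
            memcpy(&system_memory[l->base], l->data, LINE_BYTES);
            l->dirty = false;
        }
    }
}

/* Invalidate: only the valid bit changes. The data is left as-is, it
 * just can no longer hit, and dirty contents are never written out. */
static void cache_invalidate(void)
{
    for (unsigned i = 0; i < NUM_LINES; i++)
        cache[i].valid = false;
}
```

Invalidating a dirty line without cleaning it first is how written data gets silently thrown away.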

In your case you do not want to deal with cache at all for these transactions, the cache in question is in the arm core so nobody but the arm core has access to that cache, nobody else is behind the cache, they are all on the system end.

Taking a quick look at the ARM ARM for armv7-m they do use address space to determine write through and cached or not. One then needs to look at the cortex-m7 TRM for further information and then, particularly in this case, since it is a chip thing not an arm thing anyway, the whole system. The arm processor is just some bit of IP that st bought to glue into a chip with a bunch of other IP and IP of their own that is glued together. Like the engine in the car, the engine manufacturer can't answer questions about the rear differential nor the transmission, that is the car company not the engine company.

arm knows what they are doing

st knows what they are doing

if a chip company makes a chip with dma but the only path between the processor and the memory shared with the dma engine is through the processor's cache when the cache is enabled, and clean/flush and invalidate of address ranges are constantly required to use that dma engine... then you need to immediately discard that chip, blacklist that company's products (if this product is that poorly designed then assume all of their products are), and find a better company to buy products from.

I can't imagine that is the case here, so

1. Initialize the peripheral, choosing to use DMA, and configure the peripheral or dma engine or both (for each direction).
2. Start the peripheral (this might be part of 4).
3. Write the tx data to the configured address space for dma.
4. Tell the peripheral to start the transfer.
5. Monitor for completion of the transfer.
6. Read the received data from the configured address space for dma.
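The steps above can be sketched in C against a software stand-in for the hardware. Everything here is hypothetical: `fake_periph` is a loopback simulated with `memcpy`, not an ST peripheral or any vendor library API, and the two buffers stand in for memory that the dma engine can reach and that the core accesses non-cached. On a real part the `periph_*` calls would be register writes and the done flag would be a volatile status bit or an interrupt.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>
#include <string.h>

#define BUF_LEN 8u

/* Stand-ins for buffers in the address space configured for dma. */
static uint8_t dma_tx_buf[BUF_LEN];
static uint8_t dma_rx_buf[BUF_LEN];

/* Hypothetical loopback peripheral, simulated in software. */
struct fake_periph {
    const uint8_t *tx;
    uint8_t       *rx;
    uint32_t       len;
    bool           enabled;
    bool           done;
};

static void periph_init_dma(struct fake_periph *p, const uint8_t *tx,
                            uint8_t *rx, uint32_t len)
{
    p->tx = tx; p->rx = rx; p->len = len;
    p->enabled = false; p->done = false;
}

static void periph_enable(struct fake_periph *p) { p->enabled = true; }

/* The "dma" here is a memcpy from the tx buffer to the rx buffer. */
static void periph_start_transfer(struct fake_periph *p)
{
    assert(p->enabled);
    memcpy(p->rx, p->tx, p->len);
    p->done = true;
}

static bool periph_transfer_done(const struct fake_periph *p) { return p->done; }

/* Steps 1 to 6 in order; returns false if len does not fit the buffers. */
static bool transfer(const uint8_t *out, uint8_t *in, uint32_t len)
{
    struct fake_periph p;
    if (len > BUF_LEN)
        return false;
    periph_init_dma(&p, dma_tx_buf, dma_rx_buf, len); /* 1: init + configure dma */
    periph_enable(&p);                                /* 2: start the peripheral */
    memcpy(dma_tx_buf, out, len);                     /* 3: write the tx data */
    periph_start_transfer(&p);                        /* 4: start the transfer */
    while (!periph_transfer_done(&p)) { }             /* 5: monitor for completion */
    memcpy(in, dma_rx_buf, len);                      /* 6: read the received data */
    return true;
}
```

There are no cache operations anywhere in that flow, which is the point.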

That is generic, but that is what you are looking for; caches are not involved. For a part/family like this there should be countless examples, including the (choose your name for the quality) one or more library solutions and examples that come from the chip vendor. Look at how others are using the part, compare that to the documentation, determine your risk level for their solution, and use it or modify it or learn from it if nothing else.

I know that st products do not have an instruction cache they do their own thing, or at least that is what I remember (some trademarked name for a flash cache, on most of them you cannot turn it off). Does that mean they have not implemented a data cache on the products either? Possible. Just because the architecture for an ip product has a feature (fpu, caches, ...) does not automatically mean that the chip vendor has enabled/implemented those.

Depending on the IP there are various ways to do that as some IP does not have a compile time option for the chip vendor to not compile in a feature. If nothing else the chip vendor could simply stub out the cache memory interfaces and write a few lines of text in the docs that there is no cache, and you can write control registers and see things appear to enable that feature but it simply does not work. One expects that arm provides compile time features, that are not in the public documentation we can see, but are available to the chip vendor in some form.

Sometimes when you buy the IP you are given a menu if you will like ordering a custom burger at a fancy burger shop, a list of checkboxes, mayo, mustard, pickle. ... fpu, cache, 16 bit fetch, 32 bit fetch, one cycle multiply, x cycle multiply, divide, etc. And the chip vendor then produces your custom burger. Or some vendors you get the whole burger then you have to pick off the pickles and onions yourself.

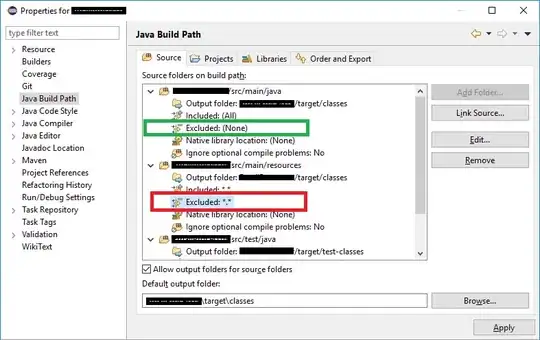

Firstly, find out if this part even has a dcache. Look between the arm arm, the arm trm and the documentation for the chip address spaces (as well as the countless examples) and determine what address space or what settings, etc are needed to access portions of sram in a non-cached way, if it has a data cache feature at all.

As you can see, DMA1 can access sram1, 2 and 3. I'm using sram2.
