Suppose I have an image that I want to warp as part of a forward model of a system. In the reverse model, I need to be able to undo the warp. Consider the following:

import numpy as np

from scipy.ndimage import map_coordinates

from matplotlib import pyplot as plt

def make_rotation_matrix(abg, radians=False):

ABG = np.zeros(3)

ABG[:len(abg)] = abg

abg = ABG

if not radians:

abg = np.radians(abg)

alpha, beta, gamma = abg

cos1 = np.cos(alpha)

cos2 = np.cos(beta)

cos3 = np.cos(gamma)

sin1 = np.sin(alpha)

sin2 = np.sin(beta)

sin3 = np.sin(gamma)

Rx = np.asarray([

[1, 0, 0 ], # NOQA

[0, cos1, -sin1],

[0, sin1, cos1]

])

Ry = np.asarray([

[cos2, 0, sin2],

[ 0, 1, 0], # NOQA

[-sin2, 0, cos2],

])

Rz = np.asarray([

[cos3, -sin3, 0],

[sin3, cos3, 0],

[0, 0, 1],

])

m = Rz@Ry@Rx

return m

# draw a square in an image

sfe = np.zeros((128,128), dtype=float)

c=64

w=32

sfe[c-w:c+w,c-w:c+w] = 1

# compute the coordinates, translate to the origin, rotate, translate back

xin = np.arange(128)

yin = np.arange(128)

xin, yin = np.meshgrid(xin,yin)

rot = make_rotation_matrix((0,45,0))

ox, oy = 127/2, 127/2

tin = np.eye(4)

tin[0,-1] = -ox

tin[1,-1] = -oy

tout = np.eye(4)

tout[0,-1] = ox

tout[1,-1] = oy

rot2 = np.zeros((4,4), dtype=float)

rot2[:3,:3] = rot

rot2[-1,-1] = 1

M = tout@(rot2@tin)

Mi = np.linalg.inv(M)

points = np.zeros((xin.size, 4), dtype=float)

points[:,0] = xin.ravel()

points[:,1] = yin.ravel()

points[:,2] = 0 # z=0

points[:,3] = 1 # lambda coordinate for homography

out = np.dot(Mi, points.T)

xout = out[0].reshape(xin.shape)

yout = out[1].reshape(yin.shape)

zout = out[2].reshape(xin.shape)

hout = out[3].reshape(xin.shape)

# do I need to do something differently here?

points2 = points.copy()

out2 = np.dot(M, points2.T)

xout2 = out2[0].reshape(xin.shape)

yout2 = out2[1].reshape(yin.shape)

zout2 = out2[2].reshape(xin.shape)

hout2 = out2[3].reshape(xin.shape)

mapped = map_coordinates(sfe, (yout,xout))

unmapped = map_coordinates(mapped, (yout2,xout2))

neighbors = np.hstack((sfe, mapped, unmapped))

plt.imshow(neighbors)

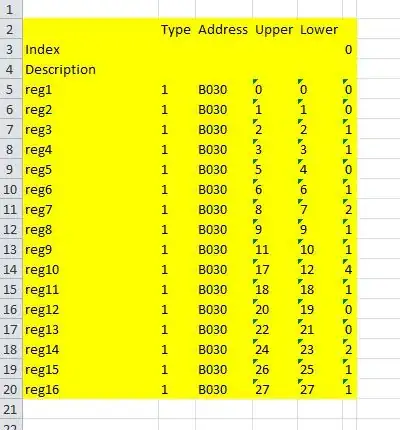

If I perform a clocking rotation instead of an out of plane rotation, I get the behavior I expect:

I understand that by construction I am assuming the image is a birds-eye view or a planar homography, which is OK. What am I missing? Some google related to image warping finds cryptic matlab answers, but I do not understand what the "spatial referencing" is doing.

Edit: An example homography whose inverse does not actually undo the transformation with map_coordinates:

H = np.array([[ 0.063, -0.011, 0.761],

[ 0.011, 0.063, -0.639],

[-0. , -0. , 0.063]])

Simply plotting a square with plot.scatter, it does exactly invert.