How to drop rows of Pandas DataFrame whose value in a certain column is NaN

This is an old question which has been beaten to death but I do believe there is some more useful information to be surfaced on this thread. Read on if you're looking for the answer to any of the following questions:

- Can I drop rows if any of its values have NaNs? What about if all of them are NaN?

- Can I only look at NaNs in specific columns when dropping rows?

- Can I drop rows with a specific count of NaN values?

- How do I drop columns instead of rows?

- I tried all of the options above but my DataFrame just won't update!

It's already been said that df.dropna is the canonical method to drop NaNs from DataFrames, but there's nothing like a few visual cues to help along the way.

# Setup

df = pd.DataFrame({

'A': [np.nan, 2, 3, 4],

'B': [np.nan, np.nan, 2, 3],

'C': [np.nan]*3 + [3]})

df

A B C

0 NaN NaN NaN

1 2.0 NaN NaN

2 3.0 2.0 NaN

3 4.0 3.0 3.0

Below is a detail of the most important arguments and how they work, arranged in an FAQ format.

Can I drop rows if any of its values have NaNs? What about if all of them are NaN?

This is where the how=... argument comes in handy. It can be one of

'any' (default) - drops rows if at least one column has NaN'all' - drops rows only if all of its columns have NaNs

<!_ ->

# Removes all but the last row since there are no NaNs

df.dropna()

A B C

3 4.0 3.0 3.0

# Removes the first row only

df.dropna(how='all')

A B C

1 2.0 NaN NaN

2 3.0 2.0 NaN

3 4.0 3.0 3.0

Note

If you just want to see which rows are null (IOW, if you want a

boolean mask of rows), use

isna:

df.isna()

A B C

0 True True True

1 False True True

2 False False True

3 False False False

df.isna().any(axis=1)

0 True

1 True

2 True

3 False

dtype: bool

To get the inversion of this result, use

notna

instead.

Can I only look at NaNs in specific columns when dropping rows?

This is a use case for the subset=[...] argument.

Specify a list of columns (or indexes with axis=1) to tells pandas you only want to look at these columns (or rows with axis=1) when dropping rows (or columns with axis=1.

# Drop all rows with NaNs in A

df.dropna(subset=['A'])

A B C

1 2.0 NaN NaN

2 3.0 2.0 NaN

3 4.0 3.0 3.0

# Drop all rows with NaNs in A OR B

df.dropna(subset=['A', 'B'])

A B C

2 3.0 2.0 NaN

3 4.0 3.0 3.0

Can I drop rows with a specific count of NaN values?

This is a use case for the thresh=... argument. Specify the minimum number of NON-NULL values as an integer.

df.dropna(thresh=1)

A B C

1 2.0 NaN NaN

2 3.0 2.0 NaN

3 4.0 3.0 3.0

df.dropna(thresh=2)

A B C

2 3.0 2.0 NaN

3 4.0 3.0 3.0

df.dropna(thresh=3)

A B C

3 4.0 3.0 3.0

The thing to note here is you need to specify how many NON-NULL values you want to keep, rather than how many NULL values you want to drop. This is a pain point for new users.

Luckily the fix is easy: if you have a count of NULL values, simply subtract it from the column size to get the correct thresh argument for the function.

required_min_null_values_to_drop = 2 # drop rows with at least 2 NaN

df.dropna(thresh=df.shape[1] - required_min_null_values_to_drop + 1)

A B C

2 3.0 2.0 NaN

3 4.0 3.0 3.0

How do I drop columns instead of rows?

Use the axis=... argument, it can be axis=0 or axis=1.

Tells the function whether you want to drop rows (axis=0) or drop columns (axis=1).

df.dropna()

A B C

3 4.0 3.0 3.0

# All columns have rows, so the result is empty.

df.dropna(axis=1)

Empty DataFrame

Columns: []

Index: [0, 1, 2, 3]

# Here's a different example requiring the column to have all NaN rows

# to be dropped. In this case no columns satisfy the condition.

df.dropna(axis=1, how='all')

A B C

0 NaN NaN NaN

1 2.0 NaN NaN

2 3.0 2.0 NaN

3 4.0 3.0 3.0

# Here's a different example requiring a column to have at least 2 NON-NULL

# values. Column C has less than 2 NON-NULL values, so it should be dropped.

df.dropna(axis=1, thresh=2)

A B

0 NaN NaN

1 2.0 NaN

2 3.0 2.0

3 4.0 3.0

I tried all of the options above but my DataFrame just won't update!

dropna, like most other functions in the pandas API returns a new DataFrame (a copy of the original with changes) as the result, so you should assign it back if you want to see changes.

df.dropna(...) # wrong

df.dropna(..., inplace=True) # right, but not recommended

df = df.dropna(...) # right

Reference

https://pandas.pydata.org/pandas-docs/stable/reference/api/pandas.DataFrame.dropna.html

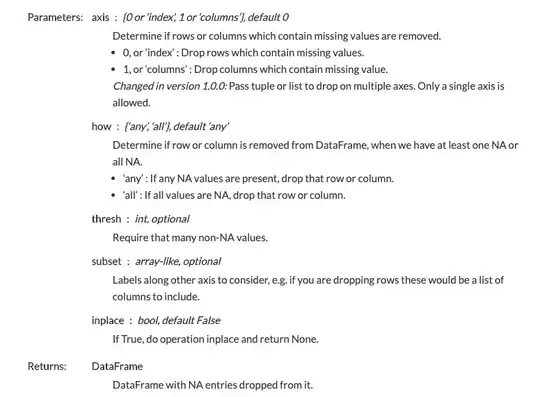

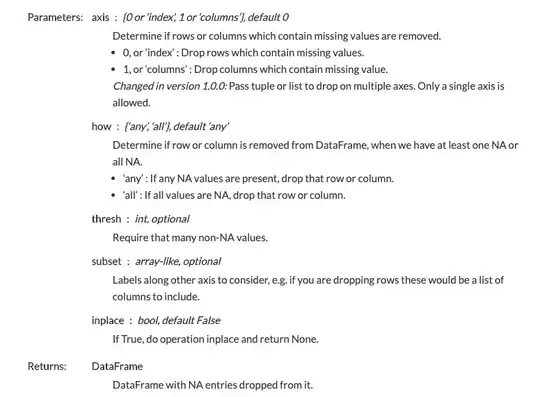

DataFrame.dropna(

self, axis=0, how='any', thresh=None, subset=None, inplace=False)