How to iterate over rows in a DataFrame in Pandas

Answer: DON'T*!

Iteration in Pandas is an anti-pattern and is something you should only do when you have exhausted every other option. You should not use any function with "iter" in its name for more than a few thousand rows or you will have to get used to a lot of waiting.

Do you want to print a DataFrame? Use DataFrame.to_string().
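As a quick illustration (the column names and sizes are arbitrary), to_string() returns every row as one string. By contrast, print(df) truncates large frames according to the display.max_rows option, which defaults to 60:

```python
import pandas as pd

# A frame larger than the default display.max_rows (60), so print(df) would truncate it
df = pd.DataFrame({'A': range(100), 'B': range(100)})

# to_string() returns the full table as a single str: one header line plus one line per row
text = df.to_string()
print(text)
```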

Do you want to compute something? In that case, search for methods in this order (list modified from here):

1. Vectorization
2. Cython routines
3. List comprehensions (vanilla for loop)
4. DataFrame.apply(): i) reductions that can be performed in Cython, ii) iteration in Python space
5. items() and iteritems() (iteritems() was deprecated in pandas 1.5 and removed in 2.0)
6. DataFrame.itertuples()
7. DataFrame.iterrows()

iterrows and itertuples (both receiving many votes in answers to this question) should be used only in very rare circumstances, such as generating row objects/namedtuples for sequential processing, which is really the only thing these functions are useful for.
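Here is a small sketch of how the two differ (the frame is made up for illustration). itertuples() yields one namedtuple per row with attribute access. iterrows() yields (index, Series) pairs, and because each row becomes a Series with a single dtype, mixed numeric columns get upcast:

```python
import pandas as pd

df = pd.DataFrame({'A': [1, 2], 'B': [0.5, 1.5]})

# itertuples(): one namedtuple per row; index=False drops the Index field
for row in df.itertuples(index=False):
    print(row.A, row.B)

# iterrows(): one (index, Series) pair per row; the int column A is upcast
# to float64 because each row Series must hold a single dtype
for idx, row in df.iterrows():
    print(idx, row['A'], row.dtype)

first_tuple = next(df.itertuples(index=False))
first_series = next(df.iterrows())[1]
```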

Appeal to Authority

The documentation page on iteration has a huge red warning box that says:

Iterating through pandas objects is generally slow. In many cases, iterating manually over the rows is not needed [...].

* It's actually a little more complicated than "don't". df.iterrows() is the correct answer to this question, but "vectorize your ops" is the better one. I will concede that there are circumstances where iteration cannot be avoided (for example, some operations where the result depends on the value computed for the previous row). However, it takes some familiarity with the library to know when. If you're not sure whether you need an iterative solution, you probably don't. PS: To know more about my rationale for writing this answer, skip to the very bottom.
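As an example of the kind of row-to-row dependency meant here, consider a running balance that is never allowed to go below zero. This rule is my own illustration, not from the original answer. Each output depends on the previous computed output, so a plain cumsum() gives the wrong answer and a simple loop is a reasonable choice:

```python
import pandas as pd

def clipped_running_total(deltas):
    """Running total that is reset to 0 whenever it would go negative."""
    total = 0.0
    out = []
    for d in deltas:
        # the new value depends on the previous *computed* value, not just the inputs
        total = max(0.0, total + d)
        out.append(total)
    return out

df = pd.DataFrame({'delta': [5, -3, -4, 2]})
df['balance'] = clipped_running_total(df['delta'])
df['naive_cumsum'] = df['delta'].cumsum()  # does not apply the floor at zero
print(df)
```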

A good number of basic operations and computations are "vectorized" by pandas (either through NumPy, or through Cythonized functions). This includes arithmetic, comparisons, (most) reductions, reshaping (such as pivoting), joins, and groupby operations. Look through the documentation on Essential Basic Functionality to find a suitable vectorized method for your problem.
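A minimal sketch, using a made-up frame, of several of those categories (arithmetic, comparison, reduction, groupby) done without any explicit loop:

```python
import pandas as pd

df = pd.DataFrame({'A': [1, 2, 3, 4],
                   'B': [10, 20, 30, 40],
                   'key': ['x', 'y', 'x', 'y']})

df['C'] = df['A'] + df['B']              # arithmetic on whole columns
df['big'] = df['C'] > 25                 # comparison -> boolean column
total = df['C'].sum()                    # reduction
per_key = df.groupby('key')['C'].sum()   # groupby aggregation
```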

If none exists, feel free to write your own using custom Cython extensions.

List comprehensions should be your next port of call if 1) there is no vectorized solution available, 2) performance is important, but not important enough to go through the hassle of cythonizing your code, and 3) you're trying to perform an elementwise transformation on your data. There is a good amount of evidence to suggest that list comprehensions are sufficiently fast (and sometimes even faster) for many common Pandas tasks.*

The formula is simple:

```python
# `df` is your DataFrame and `f` is some function that processes your data

# Iterating over one column
result = [f(x) for x in df['col']]

# Iterating over two columns, use `zip`
result = [f(x, y) for x, y in zip(df['col1'], df['col2'])]

# Iterating over multiple columns - same data type
cols = ['col1', 'col2', 'col3']  # any number of column names
result = [f(*row) for row in df[cols].to_numpy()]

# Iterating over multiple columns - differing data types
result = [f(*row) for row in zip(*(df[c] for c in cols))]
```

If you can encapsulate your business logic into a function, you can use a list comprehension that calls it. You can make arbitrarily complex things work through the simplicity and speed of raw Python code.
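As a sketch of that pattern, here is a small business rule wrapped in a function and called from a list comprehension. The shipping_cost function and its pricing rule are hypothetical, made up for illustration:

```python
import pandas as pd

def shipping_cost(weight_kg, region):
    """Hypothetical business rule: flat base fee plus a per-kg surcharge above 1 kg."""
    base = 5.0 if region == 'domestic' else 15.0
    return base + 0.5 * max(0.0, weight_kg - 1.0)

df = pd.DataFrame({'weight_kg': [0.5, 3.0, 2.0],
                   'region': ['domestic', 'international', 'domestic']})

# zip keeps each column's own type (float weight, str region)
df['cost'] = [shipping_cost(w, r) for w, r in zip(df['weight_kg'], df['region'])]
```

The branching on region is what makes this awkward to vectorize directly, while the list comprehension stays readable.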

Caveats

List comprehensions assume that your data is easy to work with: your data types are consistent and you don't have NaNs. This cannot always be guaranteed.

- NaNs are the more obvious problem: when dealing with them, prefer built-in pandas methods if they exist (because they have much better corner-case handling logic), or ensure your business logic includes appropriate NaN handling.

- When dealing with mixed data types, iterate over zip(df['A'], df['B'], ...) instead of df[['A', 'B']].to_numpy(), as the latter implicitly upcasts the data to a common type. For example, if A is an integer column and B is a float column, to_numpy() returns a float64 array and every value in A becomes a float; if B is a string column, the result is an object array instead. Zipping your columns together is the most straightforward way around this.
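A small sketch of both caveats on made-up data. A naive list comprehension fails on a NaN, while the built-in string method (or explicit handling) copes. Separately, to_numpy() upcasts mixed numeric columns, and zip does not:

```python
import numpy as np
import pandas as pd

# NaN caveat: a plain list comprehension trips over missing values
s = pd.Series(['apple', np.nan, 'kiwi'])
try:
    [len(x) for x in s]
    naive_failed = False
except TypeError:
    naive_failed = True  # len() is not defined for NaN, which is a float

# a built-in string method handles NaN for you
lengths = s.str.len()

# or make NaN handling part of your own logic
safe_lengths = [len(x) if isinstance(x, str) else np.nan for x in s]

# mixed-dtype caveat: to_numpy() finds a common dtype for all selected columns
df = pd.DataFrame({'A': [1, 2], 'B': [0.5, 1.5]})
arr = df[['A', 'B']].to_numpy()       # float64: A is upcast from int
pairs = list(zip(df['A'], df['B']))   # A keeps its integer values
```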

*Your mileage may vary for the reasons outlined in the Caveats section above.

An Obvious Example

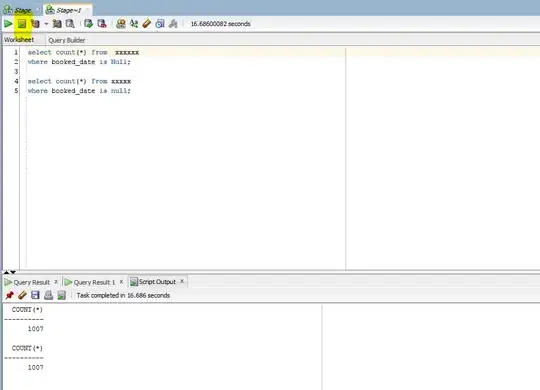

Let's demonstrate the difference with a simple example of adding two pandas columns A + B. This is a vectorizable operation, so it will be easy to contrast the performance of the methods discussed above.
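For concreteness, here is a sketch of A + B written with several of the methods from the list, all producing the same values. The function names are hypothetical and are not taken from the original benchmark:

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({'A': np.arange(5), 'B': np.arange(5) * 10})

def add_vectorized(df):
    return df['A'] + df['B']

def add_list_comp(df):
    return pd.Series([a + b for a, b in zip(df['A'], df['B'])], index=df.index)

def add_apply(df):
    # axis=1 calls the lambda once per row (row-wise Python iteration)
    return df.apply(lambda row: row['A'] + row['B'], axis=1)

def add_itertuples(df):
    return pd.Series([r.A + r.B for r in df.itertuples(index=False)], index=df.index)

def add_iterrows(df):
    return pd.Series([r['A'] + r['B'] for _, r in df.iterrows()], index=df.index)

methods = [add_vectorized, add_list_comp, add_apply, add_itertuples, add_iterrows]
results = {m.__name__: m(df).tolist() for m in methods}
```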

Benchmarking code, for your reference. The line at the bottom of the plot measures a function written in numpandas, a style of Pandas that mixes heavily with NumPy to squeeze out maximum performance. Avoid writing numpandas code unless you know what you're doing. Stick to the API where you can (i.e., prefer vec over vec_numpy).
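The original benchmarking code is not reproduced here. Below is a minimal timing harness of my own, built on the standard timeit module. It assumes that vec uses the pandas API and that vec_numpy drops down to raw NumPy arrays. The bodies of both, plus list_comp and benchmark, are my assumptions, not the author's code:

```python
import timeit
import numpy as np
import pandas as pd

def vec(df):
    # pure pandas API (assumed body)
    return df['A'] + df['B']

def vec_numpy(df):
    # "numpandas" style (assumed body): operate on raw NumPy arrays, then rewrap
    return pd.Series(df['A'].to_numpy() + df['B'].to_numpy(), index=df.index)

def list_comp(df):
    return pd.Series([a + b for a, b in zip(df['A'], df['B'])], index=df.index)

def benchmark(funcs, sizes, repeat=5):
    """Return {(function name, n_rows): best time in seconds} for each combination."""
    results = {}
    for n in sizes:
        df = pd.DataFrame({'A': np.random.rand(n), 'B': np.random.rand(n)})
        for f in funcs:
            # the lambda is called immediately, so it sees the current f and df
            t = min(timeit.repeat(lambda: f(df), number=1, repeat=repeat))
            results[(f.__name__, n)] = t
    return results

timings = benchmark([vec, vec_numpy, list_comp], sizes=[10, 1_000, 100_000])
for key, t in sorted(timings.items()):
    print(key, f"{t:.6f}s")
```

Timings depend heavily on hardware, pandas version, and data, which is one more reason to run this kind of check on your own data.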

I should mention, however, that it isn't always this cut and dried. Sometimes the answer to "what is the best method for an operation" is "it depends on your data". My advice is to test out different approaches on your data before settling on one.

My Personal Opinion *

Most of the analyses performed on the various alternatives to the iter family have been through the lens of performance. However, in most situations you will be working on a reasonably sized dataset (nothing beyond a few thousand or 100K rows), and performance will come second to the simplicity and readability of the solution.

Here is my personal preference when selecting a method to use for a problem.

For the novice:

Vectorization (when possible); apply(); List Comprehensions; itertuples()/iteritems(); iterrows(); Cython

For the more experienced:

Vectorization (when possible); apply(); List Comprehensions; Cython; itertuples()/iteritems(); iterrows()

Vectorization prevails as the most idiomatic method for any problem that can be vectorized. Always seek to vectorize! When in doubt, consult the docs, or look on Stack Overflow for an existing question on your particular task.

I do tend to go on about how bad apply is in a lot of my posts, but I concede it is easier for a beginner to wrap their head around what it's doing. Additionally, there are quite a few use cases for apply, as explained in this post of mine.

Cython ranks lower down on the list because it takes more time and effort to pull off correctly. You will rarely need pandas code so performance-critical that even a list comprehension cannot keep up.

* As with any personal opinion, please take with heaps of salt!

Further Reading

* Pandas string methods are "vectorized" in the sense that they are specified on the series but operate on each element. The underlying mechanisms are still iterative, because string operations are inherently hard to vectorize.
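Here is a small illustration of that footnote on made-up data. A .str method and a list comprehension give the same result. The .str version skips missing values for you, while the list comprehension has to handle NaN explicitly:

```python
import numpy as np
import pandas as pd

s = pd.Series(['Foo', 'bar', np.nan])

# .str methods are applied element by element under the hood; missing values are skipped
upper_str = s.str.upper()

# the equivalent list comprehension must handle NaN itself
upper_lc = [x.upper() if isinstance(x, str) else x for x in s]
```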

Why I Wrote this Answer

A common trend I notice from new users is to ask questions of the form "How can I iterate over my df to do X?", often showing code that calls iterrows() while doing something inside a for loop. Here is why. A new user to the library who has not been introduced to the concept of vectorization will likely envision the code that solves their problem as iterating over their data to do something. Not knowing how to iterate over a DataFrame, the first thing they do is Google it and end up here, at this question. They then see the accepted answer telling them how to, and they close their eyes and run this code without ever first questioning whether iteration is the right thing to do.

The aim of this answer is to help new users understand that iteration is not necessarily the solution to every problem, and that better, faster and more idiomatic solutions could exist, and that it is worth investing time in exploring them. I'm not trying to start a war of iteration vs. vectorization, but I want new users to be informed when developing solutions to their problems with this library.

And finally ... a TLDR to summarize this post